Site Reliability Engineering (SRE)

Diagrams

Site Reliability Engineering is a discipline that applies software engineering principles to infrastructure and operations problems. Originated at Google, SRE treats operations as a software problem, building systems and tooling to manage large-scale, high-reliability production environments. The core thesis is that reliability is the most fundamental feature of any system -- if users cannot reach your service, nothing else matters.

Concepts

Service Level Indicators (SLIs)

An SLI is a quantitative measure of some aspect of the level of service being provided. SLIs are the raw metrics that describe how your system behaves from the user's perspective. Common SLIs include:

- Availability: The proportion of requests that succeed (e.g., HTTP 2xx responses divided by total requests).

- Latency: The distribution of response times for requests (e.g., p50, p95, p99).

- Throughput: The rate at which requests are processed successfully.

- Error rate: The proportion of requests resulting in errors.

- Durability: The likelihood that data, once written, can be read back (critical for storage systems).

Good SLIs are chosen based on what users actually care about. An internal batch processing system might prioritize throughput, while a consumer-facing API prioritizes latency and availability.

Service Level Objectives (SLOs)

An SLO is a target value or range for an SLI. It is an internal agreement on how reliable a service should be. For example:

- "99.9% of HTTP requests will return successfully within 200ms over a rolling 30-day window."

- "99.95% availability measured monthly."

SLOs are not aspirational -- they are precise engineering targets that drive operational decisions. Setting SLOs too high wastes engineering effort on diminishing returns. Setting them too low erodes user trust. The right SLO balances user expectations, business needs, and engineering cost.

The following example shows how to track multiple SLOs for a service, record request outcomes, and determine whether each objective is currently being met:

STRUCTURE SloDefinition

name : String

target : Float // e.g., 0.999 for 99.9%

window : Duration // rolling measurement window

STRUCTURE RequestOutcome

timestamp : Timestamp

success : Boolean

latency : Duration

/// Tracks multiple SLOs for a service and computes real-time compliance.

STRUCTURE SloTracker

definitions : List<SloDefinition>

outcomes : List<RequestOutcome>

STRUCTURE SloStatus

name : String

target, actual : Float

compliant : Boolean

error_budget_remaining : Float // fraction of budget left (0.0 to 1.0)

total_in_window, failures_in_window : Integer

PROCEDURE SloTracker.RECORD(success, latency)

APPEND RequestOutcome { timestamp ← NOW(), success, latency } TO self.outcomes

/// Evaluate all SLOs against the current window of request data.

PROCEDURE SloTracker.EVALUATE(now) → List<SloStatus>

results ← EMPTY LIST

FOR EACH slo IN self.definitions DO

cutoff ← now - slo.window

windowed ← FILTER self.outcomes WHERE timestamp ≥ cutoff

total ← LENGTH(windowed)

failures ← COUNT windowed WHERE success = FALSE

actual ← IF total = 0 THEN 1.0 ELSE 1.0 - (failures / total)

budget_fraction ← 1.0 - slo.target

allowed_failures ← FLOOR(total * budget_fraction)

budget_remaining ← IF allowed_failures = 0 THEN

(IF failures = 0 THEN 1.0 ELSE 0.0)

ELSE

1.0 - (failures / allowed_failures)

APPEND SloStatus {

name ← slo.name, target ← slo.target, actual ← actual,

compliant ← (actual ≥ slo.target),

error_budget_remaining ← MAX(budget_remaining, 0.0),

total_in_window ← total, failures_in_window ← failures

} TO results

END FOR

RETURN results

PROCEDURE MAIN()

tracker ← NEW SloTracker([

SloDefinition { name ← "availability", target ← 0.999, window ← 30 days },

SloDefinition { name ← "latency-p99-under-200ms", target ← 0.99, window ← 7 days }

])

FOR EACH status IN tracker.EVALUATE(NOW()) DO

PRINT "SLO '" + status.name + "': actual=" + (status.actual * 100) + "%"

+ ", target=" + (status.target * 100) + "%"

+ ", compliant=" + status.compliant

+ ", budget_remaining=" + (status.error_budget_remaining * 100) + "%"

END FOR

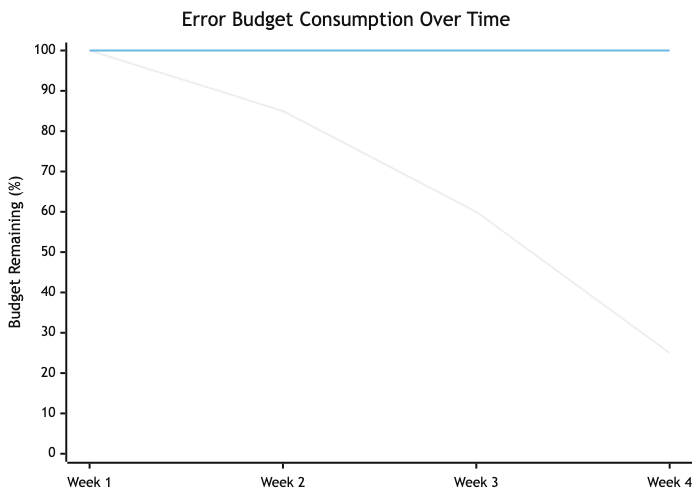

Error Budgets

The error budget is the inverse of an SLO. If your SLO is 99.9% availability, your error budget is 0.1% -- roughly 43 minutes of downtime per month. The error budget is a powerful concept because it reframes reliability as a resource to be spent, not a constraint to be maximized.

When the error budget is healthy, teams can push risky deployments, run experiments, and take on technical debt. When the budget is exhausted, the team shifts focus to reliability work. This creates an objective, data-driven mechanism for balancing feature velocity against stability.

/// Represents an error budget tracker for a service.

STRUCTURE ErrorBudget

slo_target : Float // e.g., 0.999 for 99.9%

window_seconds : Integer // e.g., 2592000 for 30 days

total_requests : Integer ← 0

failed_requests : Integer ← 0

PROCEDURE ErrorBudget.ALLOWED_FAILURES() → Integer

budget_fraction ← 1.0 - self.slo_target

RETURN FLOOR(self.total_requests * budget_fraction)

/// Returns the remaining error budget as a fraction (0.0 to 1.0).

/// Values below 0.0 indicate budget exhaustion.

PROCEDURE ErrorBudget.REMAINING_FRACTION() → Float

allowed ← self.ALLOWED_FAILURES()

IF allowed = 0 THEN

RETURN IF self.failed_requests = 0 THEN 1.0 ELSE -1.0

END IF

RETURN 1.0 - (self.failed_requests / allowed)

PROCEDURE ErrorBudget.RECORD(success)

self.total_requests ← self.total_requests + 1

IF NOT success THEN

self.failed_requests ← self.failed_requests + 1

END IF

/// Returns true if the team should freeze deployments and focus on reliability.

PROCEDURE ErrorBudget.SHOULD_FREEZE_DEPLOYMENTS() → Boolean

RETURN self.REMAINING_FRACTION() < 0.0

PROCEDURE MAIN()

budget ← NEW ErrorBudget(slo_target ← 0.999, window_seconds ← 30 * 24 * 3600)

// Simulate 1,000,000 requests with 1,200 failures

budget.total_requests ← 1000000

budget.failed_requests ← 1200

PRINT "Allowed failures: " + budget.ALLOWED_FAILURES()

PRINT "Remaining budget: " + (budget.REMAINING_FRACTION() * 100) + "%"

PRINT "Freeze deployments: " + budget.SHOULD_FREEZE_DEPLOYMENTS()

// Output:

// Allowed failures: 1000

// Remaining budget: -20.00%

// Freeze deployments: true

Capacity Planning

Capacity planning is the practice of determining the production resources required to meet future demand. It involves:

- Demand forecasting: Using historical data and growth projections to predict future load.

- Load testing: Validating that systems can handle projected load with acceptable SLI values.

- Provisioning lead time: Accounting for the time required to acquire and deploy additional capacity.

- Headroom: Maintaining spare capacity for unexpected traffic spikes and graceful degradation.

Effective capacity planning prevents both over-provisioning (wasted cost) and under-provisioning (outages during load spikes). SRE teams typically target N+1 or N+2 redundancy for critical services, meaning the system can lose one or two components and continue serving traffic within SLO.

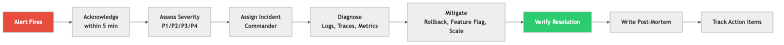

Incident Response and Management

Incident response is a structured process for detecting, mitigating, and resolving production issues. A mature incident response framework includes:

- Detection: Automated alerting based on SLI violations and anomaly detection.

- Triage: Quickly determining severity and impact. Common severity levels range from SEV-1 (critical, user-facing impact) to SEV-4 (minor, no user impact).

- Communication: Establishing an incident commander, a communication lead, and clear channels for coordination.

- Mitigation: Prioritizing stopping the bleeding over finding root cause. Roll back, redirect traffic, scale up -- whatever restores service fastest.

- Resolution: Fixing the underlying problem after the immediate impact is mitigated.

ENUMERATION Severity

Sev1 // Critical: major user-facing impact, data loss risk

Sev2 // High: significant degradation, partial outage

Sev3 // Medium: minor user impact, workaround available

Sev4 // Low: no user impact, internal tooling issue

ENUMERATION IncidentState

Detected, Triaged, Mitigating, Mitigated,

Resolved, PostMortemScheduled, Closed

STRUCTURE Incident

id, title : String

severity : Severity

state : IncidentState

detected_at : Timestamp

mitigated_at : Optional<Timestamp>

resolved_at : Optional<Timestamp>

incident_commander : String

affected_services : List<String>

timeline : List<TimelineEntry>

STRUCTURE TimelineEntry

timestamp : Timestamp

author, message : String

PROCEDURE Incident.TIME_TO_MITIGATE() → Optional<Duration>

IF self.mitigated_at IS NOT NULL THEN

RETURN self.mitigated_at - self.detected_at

END IF

RETURN NULL

PROCEDURE Incident.TIME_TO_RESOLVE() → Optional<Duration>

IF self.resolved_at IS NOT NULL THEN

RETURN self.resolved_at - self.detected_at

END IF

RETURN NULL

PROCEDURE Incident.REQUIRES_POSTMORTEM() → Boolean

RETURN self.severity IN {Sev1, Sev2}

A structured incident logging system goes beyond modeling the incident itself -- it captures a machine-readable audit trail of every action taken during response, enabling analysis of response patterns across incidents:

ENUMERATION LogLevel

Info, Warning, Critical

ENUMERATION IncidentAction

Detected { source : String, alert_name : String }

SeverityAssigned(String)

CommanderAssigned(String)

CommunicationSent { channel : String, message : String }

MitigationAttempted { action : String, successful : Boolean }

Escalated { from : String, to : String, reason : String }

ServiceImpact { service : String, impact_pct : Float }

Resolved { root_cause : String }

PostMortemLink(String)

STRUCTURE IncidentLogEntry

timestamp : Timestamp

level : LogLevel

action : IncidentAction

author : String

/// A structured log that records every action during an incident for later analysis.

STRUCTURE IncidentLog

incident_id : String

entries : List<IncidentLogEntry>

PROCEDURE IncidentLog.APPEND(level, action, author)

APPEND IncidentLogEntry { timestamp ← NOW(), level, action, author } TO self.entries

/// Calculate time from detection to first mitigation attempt.

PROCEDURE IncidentLog.TIME_TO_FIRST_MITIGATION() → Optional<Duration>

detected ← FIRST entry IN self.entries WHERE action IS Detected

first_mitigation ← FIRST entry IN self.entries WHERE action IS MitigationAttempted

IF detected AND first_mitigation BOTH FOUND THEN

RETURN first_mitigation.timestamp - detected.timestamp

END IF

RETURN NULL

/// Count how many mitigation attempts were made before success.

PROCEDURE IncidentLog.MITIGATION_ATTEMPTS() → (Integer, Integer)

total ← 0; successful ← 0

FOR EACH entry IN self.entries DO

IF entry.action IS MitigationAttempted THEN

total ← total + 1

IF entry.action.successful THEN successful ← successful + 1

END IF

END FOR

RETURN (total, successful)

/// List all affected services and their impact percentages.

PROCEDURE IncidentLog.AFFECTED_SERVICES() → List<(String, Float)>

RETURN [(e.action.service, e.action.impact_pct)

FOR EACH e IN self.entries WHERE e.action IS ServiceImpact]

PROCEDURE IncidentLog.TO_STRING() → String

output ← "=== Incident " + self.incident_id + " ==="

FOR EACH entry IN self.entries DO

output ← output + "[" + entry.level + "] (" + entry.author + ") "

+ entry.action + " -- " + entry.timestamp

END FOR

(total, successful) ← self.MITIGATION_ATTEMPTS()

output ← output + "Mitigation attempts: " + total + " total, " + successful + " successful"

RETURN output

On-Call Practices

On-call is the practice of having engineers available to respond to production issues outside normal working hours. Healthy on-call practices include:

- Rotation schedules: Typically weekly rotations with primary and secondary on-call engineers. Rotations should be shared across the team to distribute load fairly.

- Escalation policies: Clear paths for escalation when the on-call engineer cannot resolve an issue alone.

- Alert quality: Alerts should be actionable, not noisy. Every page should require human intervention. If an alert fires and the response is "ignore it," that alert must be fixed or removed.

- Compensation: On-call work is real work. Engineers should receive compensation or time off for on-call shifts.

- Sustainable load: Google's guideline is that on-call engineers should receive no more than two events per shift on average. Exceeding this leads to burnout and decreased response quality.

Post-Mortems and Blameless Culture

A post-mortem (also called a retrospective or incident review) is a written record of an incident: what happened, why, what the impact was, and what actions will prevent recurrence. The most critical attribute of effective post-mortems is blamelessness.

Blameless does not mean accountability-free. It means focusing on systemic failures rather than individual mistakes. Humans make errors -- the question is why the system allowed that error to cause an outage. A blameless post-mortem asks:

- What conditions allowed this failure to propagate?

- What monitoring gaps delayed detection?

- What safeguards could have prevented or limited the blast radius?

- What process changes would make the system more resilient?

Action items from post-mortems should be tracked to completion. A post-mortem that produces action items no one follows up on is worse than no post-mortem at all, because it creates an illusion of improvement.

Toil Reduction

Toil is manual, repetitive, automatable operational work that scales linearly with service growth and produces no enduring value. Examples include:

- Manually restarting failed processes.

- Running database migrations by hand.

- Manually provisioning user accounts.

- Copy-pasting configuration across environments.

Google's SRE book recommends that SRE teams spend no more than 50% of their time on toil. The remaining time should be spent on engineering work that permanently reduces future toil. If toil exceeds this threshold, the team cannot invest in automation and the problem compounds.

Reliability as a Feature

Reliability is not an afterthought or an ops concern -- it is a product feature. Users do not distinguish between "the feature is broken" and "the feature is unavailable." Both result in the same experience: the product does not work.

This means reliability work competes with feature work for engineering resources, and the error budget provides the mechanism for making that trade-off explicit and data-driven. Product managers and engineers negotiate SLOs together, and the error budget determines when reliability takes priority over new features.

A health check aggregator is a concrete example of reliability as a feature -- it gives operators and load balancers a single endpoint that reports whether the service and all its dependencies are functioning correctly:

ENUMERATION HealthStatus

Healthy

Degraded(reason : String)

Unhealthy(reason : String)

STRUCTURE DependencyHealth

name : String

status : HealthStatus

latency : Duration

last_checked : Timestamp

/// Interface for checking a dependency's health.

INTERFACE HealthCheckable

PROCEDURE NAME() → String

PROCEDURE CHECK() → DependencyHealth

/// Database health checker -- in production: attempt a lightweight query like "SELECT 1"

STRUCTURE DatabaseCheck IMPLEMENTS HealthCheckable

connection_string : String

timeout : Duration

PROCEDURE CHECK() → DependencyHealth

start ← CURRENT_TIME()

// Execute lightweight query

latency ← ELAPSED(start)

IF latency > self.timeout THEN

status ← Unhealthy("query exceeded timeout")

ELSE IF latency > self.timeout / 2 THEN

status ← Degraded("query above warning threshold")

ELSE

status ← Healthy

END IF

RETURN DependencyHealth { name ← "database", status, latency, last_checked ← NOW() }

/// Cache health checker -- in production: send PING to Redis/Memcached

STRUCTURE CacheCheck IMPLEMENTS HealthCheckable

/// External API health checker -- in production: HTTP GET to health endpoint

STRUCTURE ExternalApiCheck IMPLEMENTS HealthCheckable

STRUCTURE AggregatedHealth

overall : HealthStatus

dependencies : List<DependencyHealth>

checked_at : Timestamp

/// Aggregates health checks across all dependencies into a single status.

STRUCTURE HealthAggregator

checks : List<HealthCheckable>

/// Run all health checks and compute the overall status.

/// Overall status follows the worst-case: if any dependency is unhealthy,

/// the service is unhealthy. If any is degraded, the service is degraded.

PROCEDURE HealthAggregator.CHECK_ALL() → AggregatedHealth

results ← [check.CHECK() FOR EACH check IN self.checks]

IF ANY result IN results WHERE result.status IS Unhealthy THEN

failed ← [r.name FOR EACH r IN results WHERE r.status IS Unhealthy]

overall ← Unhealthy("failing dependencies: " + JOIN(failed, ", "))

ELSE IF ANY result IN results WHERE result.status IS Degraded THEN

degraded ← [r.name FOR EACH r IN results WHERE r.status IS Degraded]

overall ← Degraded("degraded dependencies: " + JOIN(degraded, ", "))

ELSE

overall ← Healthy

END IF

RETURN AggregatedHealth { overall, dependencies ← results, checked_at ← NOW() }

/// Returns true only if every dependency is fully healthy.

PROCEDURE HealthAggregator.IS_READY() → Boolean

RETURN self.CHECK_ALL().overall = Healthy

PROCEDURE MAIN()

aggregator ← NEW HealthAggregator

aggregator.ADD_CHECK(DatabaseCheck { connection_string ← "postgres://localhost:5432/mydb", timeout ← 500ms })

aggregator.ADD_CHECK(CacheCheck { host ← "redis://localhost:6379", timeout ← 100ms })

aggregator.ADD_CHECK(ExternalApiCheck { url ← "https://api.example.com/health", timeout ← 2s })

health ← aggregator.CHECK_ALL()

PRINT health

PRINT "Service ready: " + aggregator.IS_READY()

Business Value

SRE provides measurable business value across several dimensions:

Revenue protection. Downtime directly costs money. For an e-commerce platform processing $10M per day, a 99.9% SLO allows roughly 86 seconds of downtime per day. Improving to 99.99% reduces that to 8.6 seconds. The business value of that improvement depends on whether the lost revenue during those 77 seconds justifies the engineering cost.

Engineering efficiency. By automating toil and building self-healing systems, SRE frees engineers to work on features rather than firefighting. Organizations without SRE practices often find that 70-80% of engineering time goes to unplanned operational work.

Faster iteration. Error budgets create a clear signal for when it is safe to deploy aggressively. Teams with healthy error budgets can ship faster because they have a quantified safety margin, rather than relying on gut feelings about risk.

Reduced burnout. Structured on-call, blameless post-mortems, and toil reduction directly improve engineer quality of life. This reduces turnover, which is one of the most expensive hidden costs in software organizations.

Customer trust. Consistent reliability builds trust. Users and enterprise customers make purchasing decisions based on reliability track records. SLAs (the external, contractual cousins of SLOs) backed by strong SRE practices become a competitive advantage.

Real-World Examples

Google: The Origin of SRE

Google created the SRE discipline in 2003 under Ben Treynor Sloss. Google's SRE teams manage some of the largest systems on the planet (Search, Gmail, YouTube, Cloud). Key practices that emerged from Google's experience:

- SRE teams have the authority to hand services back to development teams if operational load becomes unsustainable. This creates a forcing function for developers to build operable software.

- Error budgets are enforced. When a service exhausts its error budget, feature freezes are mandated until reliability improves.

- Google publishes quarterly SLO reports internally, creating transparency and accountability across the organization.

- SRE candidates are hired as software engineers first. Every SRE at Google can (and does) write production code.

Netflix: Chaos Engineering as SRE Practice

Netflix pioneered chaos engineering with Chaos Monkey (2011) and later the Simian Army. Their approach to reliability is distinctive:

- Chaos Monkey randomly terminates production instances to verify that services handle failure gracefully. This runs continuously in production, not just in staging.

- Chaos Kong simulates the failure of an entire AWS availability zone or region, validating that Netflix can serve traffic from remaining regions.

- Netflix maintains a "paved road" -- a set of standardized tools and libraries that bake in reliability patterns (circuit breakers, retry logic, bulkheads). Teams that stay on the paved road inherit reliability properties automatically.

- Their SRE equivalent teams focus heavily on developer tooling and platform capabilities rather than manual operational work.

Slack: SLOs Driving Product Decisions

Slack adopted SRE principles to manage their real-time messaging infrastructure, which has stringent latency and availability requirements:

- Slack defines SLOs at the user-journey level rather than the individual service level. The SLO for "user sends a message and it appears in the recipient's channel" spans multiple backend services.

- They implemented an internal error budget dashboard that product managers and engineering leads review weekly. When a team's error budget runs low, that team's sprint priorities shift toward reliability.

- After a major outage in 2022, Slack invested in a formalized incident management process with dedicated incident commanders drawn from a trained rotation across engineering.

Cloudflare: Incident Transparency and Post-Mortems

Cloudflare operates a global network serving millions of websites and has built a strong public post-mortem culture:

- Every significant incident results in a public blog post detailing what happened, with precise technical depth. Their June 2022 post-mortem about a BGP misconfiguration that took down 19 data centers is a model of transparent incident communication.

- Cloudflare uses canary deployments extensively -- changes roll out to a small percentage of traffic first, with automated rollback if SLI degradation is detected.

- Their SRE teams maintain per-data-center SLOs, which is unusual but necessary given their globally distributed architecture where regional failures do not necessarily constitute global outages.

Common Mistakes and Pitfalls

1. Setting SLOs at 100%

A 100% SLO is unachievable and counterproductive. No system can guarantee perfect availability -- hardware fails, networks partition, software has bugs. A 100% target means zero error budget, which means any deployment that could theoretically cause a failure is forbidden. This paralyzes development. The correct question is not "how do we achieve 100% uptime?" but "what level of reliability do our users actually need?"

2. Measuring SLIs That Do Not Reflect User Experience

Monitoring CPU utilization, disk I/O, and memory usage is necessary but insufficient. These are system metrics, not user-facing SLIs. A server can have 10% CPU utilization and still serve errors to every user if a downstream dependency is broken. SLIs must be defined from the user's perspective: did the request succeed, and how long did it take?

3. Treating SRE as Rebranded Operations

Hiring an operations team, renaming them "SRE," and changing nothing else misses the point entirely. SRE is a software engineering discipline. If your SRE team is not writing code to automate operational tasks, building tooling, and eliminating toil, you have an operations team with a new title. The 50% engineering time guideline exists precisely to prevent this.

4. Alert Fatigue from Noisy Monitoring

Teams that alert on every metric crossing a threshold quickly drown in notifications. When everything is urgent, nothing is urgent. Engineers start ignoring alerts, and real incidents get lost in the noise. Alerts should be tied to SLO violations and should be actionable -- every alert that fires should require a human to do something. Symptom-based alerting (the user is affected) is almost always superior to cause-based alerting (a specific metric is elevated).

5. Writing Post-Mortems but Not Following Up on Action Items

Many organizations adopt the practice of writing post-mortems but fail to track and complete the resulting action items. This is worse than not writing post-mortems at all because it creates the illusion of a learning culture while the same classes of failure repeat. Every action item should have an owner, a deadline, and a tracking mechanism. Leadership should review action item completion rates as a health metric.

6. Ignoring Toil Until It Consumes the Team

Toil grows gradually. Each individual manual task seems small and quick, so it never becomes a priority to automate. Over months and years, toil accumulates until the team spends the vast majority of its time on repetitive operational work and has no capacity for improvement. Track toil explicitly, measure it as a percentage of team time, and treat any sustained increase as a problem requiring engineering investment.

Trade-offs

| Decision | Advantage | Disadvantage | |---|---|---| | Higher SLO (e.g., 99.99%) | Greater user trust, fewer complaints, stronger SLA commitments | Exponentially higher engineering cost, slower feature velocity, smaller error budget constrains experimentation | | Lower SLO (e.g., 99.5%) | More room for experimentation, faster iteration, lower infrastructure cost | Users may experience noticeable unreliability, enterprise customers may demand contractual guarantees you cannot meet | | Dedicated SRE team | Deep operational expertise, clear ownership of reliability, career path for SRE engineers | Risk of siloing reliability knowledge, "throw it over the wall" dynamic with development teams | | Embedded SRE (within dev teams) | Developers own reliability end-to-end, faster feedback loops, no handoff friction | Reliability expertise is diluted, inconsistent practices across teams, SRE work may be deprioritized for features | | Aggressive automation | Reduced toil, faster incident response, consistent execution | High upfront investment, risk of automating incorrectly (automated systems can cause outages at machine speed), complex failure modes | | Manual runbooks | Low upfront cost, humans can exercise judgment in novel situations | Does not scale, prone to human error under stress, toil grows linearly with system size | | Strict error budget enforcement | Clear prioritization signal, objective mechanism for balancing reliability and velocity | Can feel punitive if the policy is not well-communicated, may block urgent feature work at inconvenient times | | Loose error budget guidelines | Flexibility to make case-by-case decisions, avoids rigid process overhead | Error budgets lose their power as a coordination mechanism, reliability may degrade as teams consistently prioritize features |

When to Use / When Not to Use

When SRE Practices Are Appropriate

- User-facing services with meaningful uptime requirements. If your users notice and are harmed by downtime, SRE practices help you manage reliability systematically.

- Systems at scale. Once manual operations cannot keep pace with system growth, engineering-driven approaches to operations become necessary.

- Organizations with multiple teams deploying independently. SLOs and error budgets provide a common language for discussing reliability across teams.

- Services with contractual SLAs. If you have committed to customers that your service will meet specific reliability targets, you need the operational discipline to deliver on those commitments.

- Environments where incidents are frequent or costly. Structured incident response, post-mortems, and toil reduction provide the largest return when operational pain is high.

When SRE Practices May Be Premature or Inappropriate

- Early-stage startups with a handful of engineers. If your entire engineering team is five people, formalizing SRE roles and processes adds overhead without proportional benefit. Everyone should understand production, but dedicated SRE structure is unnecessary.

- Internal tools with tolerant users. If the tool is used by a small internal team that can tolerate occasional downtime and has a direct communication channel with the developers, full SRE rigor is overkill.

- Batch processing or offline systems with flexible deadlines. If a nightly data pipeline can simply be rerun the next day, investing in 99.99% availability for that pipeline is a poor use of resources.

- Prototypes and experiments. Systems that may be discarded in weeks do not warrant SLO definitions and error budget tracking.

- Organizations without software engineering maturity. SRE assumes a foundation of version control, automated testing, CI/CD, and infrastructure as code. Without these prerequisites, SRE practices will not be effective because there is no engineering substrate to build on.

Key Takeaways

-

SRE treats operations as a software engineering problem. The defining characteristic of SRE is applying engineering discipline -- automation, measurement, systematic design -- to work that was traditionally handled through manual processes and tribal knowledge.

-

SLOs and error budgets create an objective framework for balancing reliability and velocity. Rather than arguing about whether a deployment is "too risky," teams can look at the error budget and make data-driven decisions. This depoliticizes one of the most common sources of friction between product and engineering teams.

-

Measure what matters to users, not what is easy to measure. SLIs must reflect the user experience. Server-side metrics are inputs to understanding user experience, but they are not the user experience itself. Instrument at the boundary closest to the user.

-

Toil is a debt that compounds. Every hour spent on manual, repetitive operational work is an hour not spent building automation that would eliminate that work permanently. Track toil explicitly and invest engineering time in reducing it before it overwhelms the team.

-

Blameless post-mortems are a prerequisite for organizational learning. If engineers fear punishment for being involved in incidents, they will hide information, avoid on-call, and resist transparency. A blameless culture is not about avoiding accountability -- it is about directing accountability toward systemic improvements rather than individual blame.

-

Reliability has diminishing returns, and the cost curve is exponential. Moving from 99% to 99.9% is an order of magnitude harder than moving from 90% to 99%. Every additional nine requires disproportionate investment. The right SLO is the one that meets user needs at a sustainable engineering cost.

-

SRE is not a team -- it is a set of practices. While dedicated SRE teams can be effective, the underlying principles (SLOs, error budgets, toil tracking, incident management, post-mortems) can and should be adopted by any team that operates production software, regardless of organizational structure.

Further Reading

Books

- "Site Reliability Engineering: How Google Runs Production Systems" by Betsy Beyer, Chris Jones, Jennifer Petoff, and Niall Richard Murphy (2016). The foundational text on SRE, freely available at sre.google/sre-book. Covers principles, practices, and case studies from Google's experience.

- "The Site Reliability Workbook" by Betsy Beyer, Niall Richard Murphy, David K. Rensin, Kent Kawahara, and Stephen Thorne (2018). A companion to the first book with practical, hands-on guidance for implementing SRE. Available at sre.google/workbook.

- "Implementing Service Level Objectives" by Alex Hidalgo (2020). A deep dive into the practice of defining, measuring, and operationalizing SLOs across an organization.

- "Chaos Engineering: System Resiliency in Practice" by Casey Rosenthal and Nora Jones (2020). Covers the theory and practice of deliberately introducing failures to improve system resilience.

- "Incident Management for Operations" by Rob Schnepp, Ron Vidal, and Chris Hawley (2017). Adapts incident command system principles from emergency services to technology operations.

Articles and Resources

- "SLOs Are the API of Your Reliability" -- Google Cloud Blog. Explains the relationship between SLOs, error budgets, and organizational decision-making.

- "Monitoring Distributed Systems" -- Chapter 6 of the Google SRE book. The definitive guide to symptom-based monitoring and alerting on SLO violations.

- "Postmortem Culture: Learning from Failure" -- Chapter 15 of the Google SRE book. Detailed guidance on writing effective, blameless post-mortems.

- Google's "Art of SLOs" workshop materials -- Available at sre.google. A structured workshop for teams defining SLOs for the first time.

- "Nines are Not Enough" by Narayan, Tighe, et al. (2020, USENIX HotOS). A research paper arguing that SLOs need richer models beyond simple availability percentages.

Tools

- Prometheus -- Open-source monitoring and alerting toolkit, widely used for SLI measurement and SLO-based alerting. Its query language (PromQL) is designed for the kind of ratio-based queries that SLOs require.

- Grafana -- Visualization platform commonly paired with Prometheus. Supports SLO dashboards with burn-rate alerting views.

- Sloth -- An open-source tool that generates Prometheus recording rules and alerts from SLO definitions, reducing the boilerplate of SLO-based monitoring.

- PagerDuty / Opsgenie -- Incident management platforms for on-call scheduling, alert routing, escalation policies, and incident tracking.

- Datadog SLO Monitoring -- Commercial monitoring platform with built-in SLO tracking, error budget dashboards, and burn-rate alerts.

- Chaos Mesh / Litmus -- Open-source chaos engineering platforms for Kubernetes that allow controlled fault injection to validate system resilience.

- Blameless / FireHydrant / incident.io -- Incident management platforms that provide structured workflows for incident response, communication, and post-mortem creation.