Advanced Time Complexity

Blum Complexity Measures

Blum's axiomatic framework abstracts computational complexity beyond specific machine models. A Blum complexity measure is a pair (phi, Phi) where phi is an acceptable numbering of partial recursive functions and Phi satisfies:

- Blum Axiom 1: dom(phi_i) = dom(Phi_i) for all i (the complexity is defined exactly when the computation halts).

- Blum Axiom 2: The predicate "Phi_i(x) = m" is decidable (we can verify a claimed complexity value).

Time and space on Turing machines are canonical examples, but the axioms capture any "reasonable" cost measure. All theorems below hold for arbitrary Blum measures unless stated otherwise.

The Time Hierarchy Theorem

Theorem (Hartmanis-Stearns, 1965): If f(n) is time-constructible and f(n) log f(n) = o(g(n)), then DTIME(f(n)) is strictly contained in DTIME(g(n)).

The proof uses diagonalization: construct machine M that on input x of length n simulates the x-th machine for f(n) steps and flips the output. M runs in O(f(n) log f(n)) time due to simulation overhead (the log factor comes from tape alphabet translation on multi-tape TMs). Any machine running in time f(n) is defeated on at least one input.

Nondeterministic hierarchy: NTIME(f(n)) is strictly contained in NTIME(g(n)) when f(n+1) = o(g(n)), proved via delayed diagonalization (Cook, 1972; Seiferas-Fischer-Meyer, 1978). The gap is tighter because complementing nondeterministic decisions is non-trivial.

Corollary: P is strictly contained in EXP. More precisely, DTIME(n^k) is strictly contained in DTIME(n^{k+1}) for every k.

The Speedup Theorem

Theorem (Blum, 1967): For any total computable function r(n), there exists a decidable language L such that for every machine M_i deciding L with complexity Phi_i, there exists another machine M_j deciding L with Phi_j(x) <= r(Phi_i(x)) for all but finitely many x.

This is deeply counterintuitive: L has no optimal algorithm under any Blum measure. Every program for L can be sped up by a factor of r. The proof constructs L by a priority argument, carefully assigning inputs to ensure every fast program can be superseded. This shows that intrinsic complexity cannot always be assigned to individual problems.

The Gap Theorem

Theorem (Borodin, 1972): For any total computable function r, there exists a total computable function t such that DTIME(t(n)) = DTIME(r(t(n))).

Despite the hierarchy theorem showing strict separations exist, the gap theorem says arbitrarily large complexity gaps can appear where no new languages are captured. The function t is constructed by finding regions where no machine's running time falls between t(n) and r(t(n)). The key insight: t need not be time-constructible, so this does not contradict the hierarchy theorem.

Padding Arguments

Padding is a fundamental technique for transferring complexity-theoretic relationships between complexity classes. The core idea: given a language L in DTIME(2^n), define L' = {x#1^{2^|x|} : x in L}. Then L' is in DTIME(n) (linear time relative to the padded input length).

Key applications:

- If EXP = NEXP, then P = NP (contrapositive: P != NP implies EXP != NEXP)

- If E = NE, then P = NP (similarly via padding)

- EXPSPACE-completeness reductions from PSPACE-complete problems

Padding formalizes the intuition that exponential-time classes are "scaled-up" versions of polynomial-time classes. It also demonstrates that certain separations at one level imply separations at others.

Diagonalization

Diagonalization is the primary technique for unconditional separations. The method:

- Enumerate all machines M_1, M_2, ...

- Construct language L such that L disagrees with L(M_i) on at least one input for each i in the target class

- Ensure L itself belongs to a slightly larger class

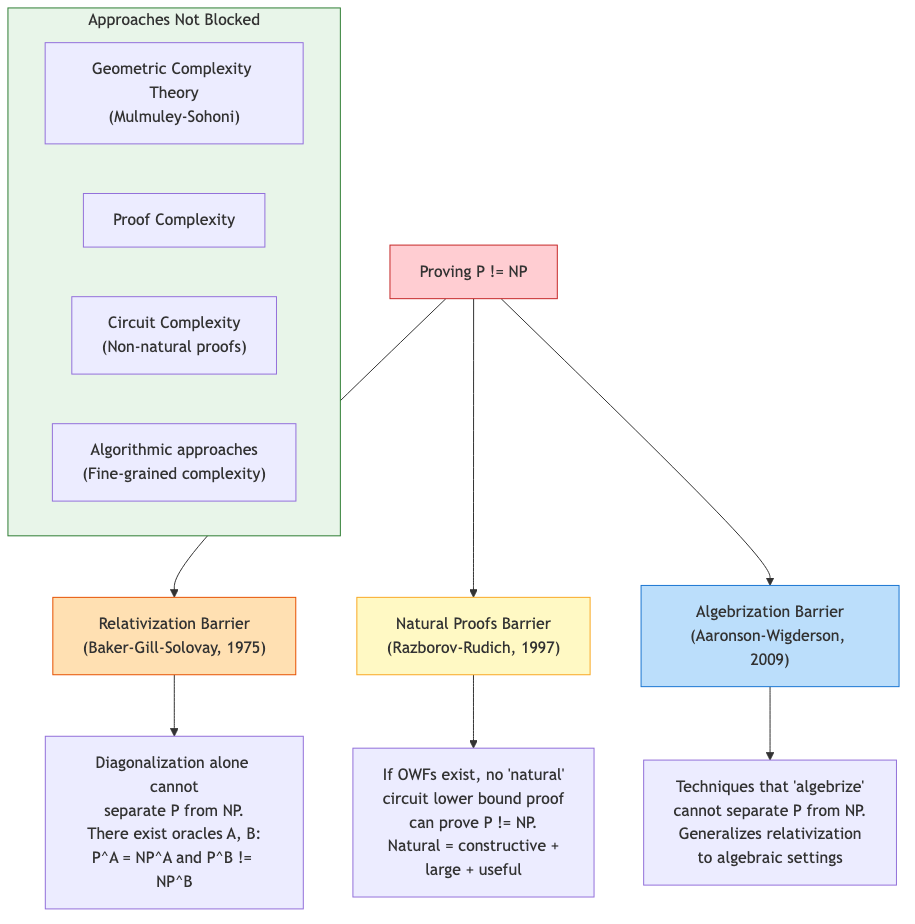

Limitations: Diagonalization alone cannot separate P from NP. This is formalized by the Baker-Gill-Solovay theorem (below). Relativizing arguments treat the oracle as a black box, and diagonalization inherently relativizes.

Oracle Separations and Relativization

An oracle Turing machine M^A has access to a set A, answering membership queries in one step.

Theorem (Baker-Gill-Solovay, 1975): There exist oracles A and B such that P^A = NP^A and P^B != NP^B.

- Oracle A: Take A = any PSPACE-complete language. Then P^A = NP^A = PSPACE (since PSPACE is closed under polynomial-time oracle access, and NP^A can be simulated in PSPACE).

- Oracle B: Constructed by diagonalization. Define B as a sparse unary language where B intersected with {0,1}^n is nonempty for specific n. An NP^B machine guesses the right string; no P^B machine can find it (the strings are hidden by the construction of B at each stage).

Implications: Any proof that P != NP (or P = NP) must use non-relativizing techniques. Since diagonalization and simulation (the two main tools pre-1990) both relativize, fundamentally new methods are required.

Algebrization

Aaronson and Wigderson (2009) introduced algebrization as a stronger barrier. A statement algebrizes if it holds relative to any algebraic extension of the oracle. IP = PSPACE uses arithmetization but does algebrize. Proving P != NP requires techniques that do not algebrize.

Natural Proofs

Razborov and Rudich (1997) showed that natural proofs (combinatorial circuit lower bound arguments satisfying constructivity and largeness) cannot prove superpolynomial circuit lower bounds if one-way functions exist. This creates a tension: the existence of cryptography (widely believed) implies that the most natural proof strategies for P != NP must fail.

The Nondeterministic Time Hierarchy

Theorem (SFM, 1978; Zak, 1983): If f, g are time-constructible and f(n+1) = o(g(n)), then NTIME(f(n)) is strictly contained in NTIME(g(n)).

The proof requires delayed diagonalization because the standard approach fails: to diagonalize against NTMs, we need to determine that a machine rejects, which requires complementation. The SFM technique stages the diagonalization across multiple inputs, using a translation argument.

Open problem: Is NTIME(n) strictly contained in NTIME(n^{1.01})? The (+1) in f(n+1) prevents tight separations.

Ladner's Theorem

Theorem (Ladner, 1975): If P != NP, there exist languages in NP that are neither in P nor NP-complete. These are called NP-intermediate languages.

The proof constructs a language L by padding SAT: L = {phi#1^{f(|phi|)} : phi in SAT} where f grows slowly enough that L is not NP-complete (reductions cannot generate enough padding) but fast enough that L is not in P. The construction uses delayed diagonalization against both polynomial-time machines and polynomial-time reductions from SAT.

Candidates for NP-intermediate: Graph isomorphism, factoring (as decision problems). Neither is known to be NP-complete, and both have sub-exponential algorithms suggesting they are not NP-complete.

Relativized World Summary

| Relationship | Oracle where equal | Oracle where unequal |

|---|---|---|

| P vs NP | PSPACE-complete oracle | Random oracle (with prob 1) |

| NP vs coNP | A = PSPACE-complete | Random oracle |

| P vs PSPACE | -- | Any oracle (unconditional containment is strict) |

| IP vs PSPACE | Standard (no oracle needed: IP=PSPACE) | -- |

Connections to Circuit Complexity

The hierarchy theorems have circuit analogues. The time hierarchy gives DTIME(n^k) != DTIME(n^{k+1}), but we cannot prove such separations for circuit size (doing so would separate P from P/poly, a major open problem). The inability to translate uniform separations to non-uniform ones reflects the power of advice/non-uniformity.

Open Problems

- Does NEXP have polynomial-size circuits? (Separating NEXP from P/poly would yield circuit lower bounds via the Nondeterministic Hierarchy.)

- Derandomization: Does BPP = P? The polynomial hierarchy relative to a random oracle collapses to BPP, suggesting derandomization may require non-relativizing techniques.

- The Minimum Circuit Size Problem (MCSP): Is it NP-complete? It is in NP but reductions to/from it are constrained by natural proof barriers.

Summary of Barriers

| Barrier | Prevents | Introduced by |

|---|---|---|

| Relativization | Techniques that treat oracle as black box | Baker-Gill-Solovay (1975) |

| Natural Proofs | Constructive, large circuit lower bounds (if OWFs exist) | Razborov-Rudich (1997) |

| Algebrization | Techniques using only low-degree extensions | Aaronson-Wigderson (2009) |

All three barriers must be circumvented simultaneously to resolve P vs NP. Known non-relativizing results (IP=PSPACE) and known non-naturalizing results (some circuit lower bounds via algebraic geometry) give partial progress, but no technique yet overcomes all three.