Advanced Approximation Algorithms

Overview

For NP-hard optimization problems, approximation algorithms provide solutions with provable guarantees on solution quality. The approximation ratio alpha measures how far the output can be from optimal: for minimization, cost(ALG) <= alpha * OPT.

LP Relaxation and Rounding

Framework

- Formulate the problem as an integer linear program (ILP)

- Relax integrality constraints to get a linear program (LP)

- Solve the LP in polynomial time

- Round the fractional solution to an integral one

Vertex Cover via LP Rounding

ILP for minimum vertex cover:

minimize sum(x_v for v in V)

subject to x_u + x_v >= 1 for each edge (u,v)

x_v in {0, 1}

LP relaxation: replace x_v in {0,1} with 0 <= x_v <= 1.

Rounding: set x_v = 1 if x*_v >= 1/2, else x_v = 0.

- Every edge constraint is satisfied (at least one endpoint >= 1/2)

- Cost of integral solution <= 2 * LP_OPT <= 2 * OPT

- 2-approximation for vertex cover

Randomized Rounding

Include vertex v in the solution with probability x*_v (the LP value).

- Expected cost = LP_OPT

- Each constraint satisfied with probability >= 3/4 for set cover variants

- Repeat O(log n) times and take the union for high probability feasibility

- Achieves O(log n)-approximation for set cover (matching hardness)

Integrality Gap

The ratio between the optimal integer solution and the optimal LP solution.

- Vertex cover LP has integrality gap exactly 2 (complete bipartite graph K_{n,n})

- Set cover LP has integrality gap Theta(log n)

- The integrality gap is a barrier: no rounding scheme can beat it

Primal-Dual Method

Simultaneously build a feasible primal solution and a feasible dual solution. Use complementary slackness conditions (possibly relaxed) to guide construction.

Primal-Dual for Vertex Cover

Dual LP: maximize sum(y_e for e in E) subject to sum(y_e : e incident to v) <= 1 for all v, y_e >= 0.

Algorithm:

- Initialize all y_e = 0

- While there exists an uncovered edge e = (u,v):

- Raise y_e until some endpoint's dual constraint becomes tight

- Add that vertex to the cover

- Return the cover

- Dual feasible by construction

- Each selected vertex v has sum of dual = 1 (tight constraint)

- OPT >= dual value, cover cost <= 2 * dual value

- 2-approximation, runs in nearly linear time (no LP solver needed)

Applications of Primal-Dual

- Steiner tree and Steiner forest (2-approximation)

- Facility location (3-approximation, improvable to 1.488)

- Feedback vertex set, network design problems

Semidefinite Programming (SDP)

SDP Relaxation

Generalize LP by optimizing over positive semidefinite matrices. Variables become vectors; constraints involve dot products.

maximize sum(w_ij * (1 - v_i . v_j) / 2)

subject to v_i . v_i = 1 for all i

v_i in R^n (unit vectors)

Solved in polynomial time via interior-point methods or ellipsoid method.

MAX-CUT: Goemans-Williamson Algorithm

- Solve the SDP relaxation (vectors on unit sphere)

- Choose a random hyperplane through the origin

- Partition vertices by which side of the hyperplane their vector lies on

Approximation ratio: alpha_GW = min (2/pi) * (1/(1 - cos(theta))) * theta = 0.878... (over all angles theta)

- Best known polynomial-time guarantee for MAX-CUT

- Assuming the Unique Games Conjecture, 0.878 is optimal

- Generalizes to MAX-2SAT (0.940 approximation)

SDP Rounding Techniques

- Random hyperplane (Goemans-Williamson)

- RPR^2 rounding for constraint satisfaction

- Threshold rounding for coloring problems

Local Search

Start from a feasible solution, repeatedly improve by local modifications.

k-Opt Local Search for TSP

- A solution is k-opt if no improvement possible by swapping k edges

- 2-opt: O(n^2) neighborhood, practical and fast convergence

- Lin-Kernighan: variable-depth search, best practical heuristic

Local Search for k-Median

- Open k facilities, assign each client to nearest facility

- Swap operation: close one facility, open another

- Single-swap local search: 5-approximation

- Multi-swap (p swaps): (3 + 2/p)-approximation

Facility Location

- Local search with open/close/swap operations

- 3-approximation via local search (matches LP-based results)

Submodular Function Maximization

A set function f: 2^V -> R is submodular if: f(A union {e}) - f(A) >= f(A union {e}) - f(B) whenever A subset B (diminishing returns).

Monotone Submodular Maximization with Cardinality Constraint

Greedy algorithm: repeatedly add the element with largest marginal gain.

- Achieves (1 - 1/e) ~ 0.632 approximation ratio

- This is optimal unless P = NP [Nemhauser-Wolsey-Fisher 1978]

- Applies to: maximum coverage, influence maximization, sensor placement

Non-Monotone Submodular Maximization

- Unconstrained: randomized local search gives 1/2 approximation

- Matroid constraint: continuous greedy + pipage rounding gives 1 - 1/e

Continuous Extensions

- Multilinear extension: F(x) = E[f(R(x))] where R(x) is random rounding

- Lovasz extension: connects submodularity to convexity

- Continuous greedy: follow gradient of multilinear extension on matroid polytope

PTAS Design Techniques

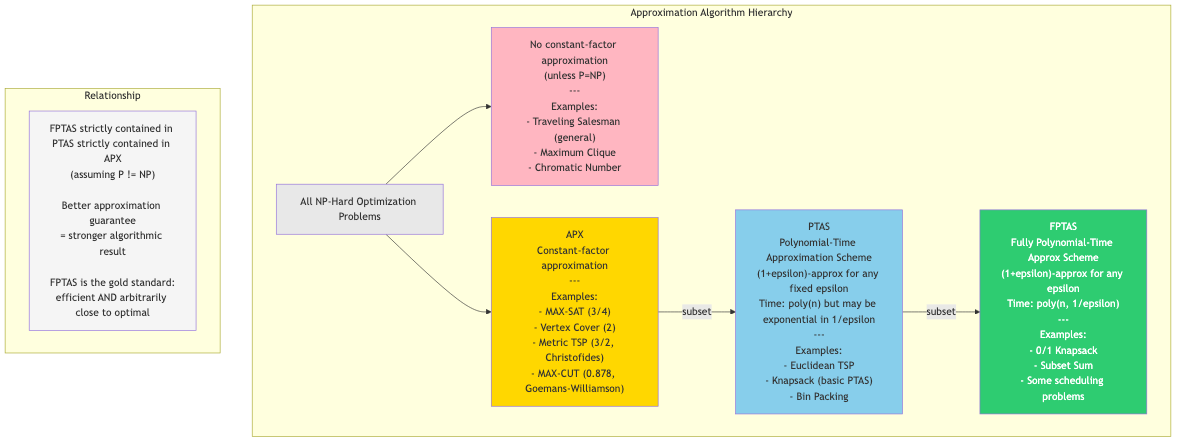

A Polynomial-Time Approximation Scheme (PTAS) gives (1+epsilon) approximation for any fixed epsilon > 0, with runtime polynomial in n (but possibly exponential in 1/epsilon).

Shifting/Partitioning (Baker's Technique)

- For planar graph problems (independent set, vertex cover, dominating set)

- Partition plane into strips of width 1/epsilon

- Solve optimally on each strip (bounded treewidth)

- Try all shifts, take the best

Dynamic Programming Based PTAS

- Knapsack PTAS: round profits, DP on rounded values

- Scale profits by epsilon * p_max / n, solve DP in O(n^2 / epsilon) time

- Euclidean TSP (Arora/Mitchell): quad-tree decomposition, DP on portals

EPTAS and FPTAS

- FPTAS: runtime polynomial in both n and 1/epsilon (e.g., Knapsack)

- EPTAS: runtime f(1/epsilon) * poly(n) — FPT in 1/epsilon

- No FPTAS for strongly NP-hard problems (unless P = NP)

Hardness of Approximation

| Problem | Best Ratio | Hardness Threshold |

|---|---|---|

| Vertex Cover | 2 | 2 - epsilon (UGC) |

| Set Cover | ln n | (1-epsilon) ln n |

| MAX-CUT | 0.878 | 0.878+ (UGC) |

| TSP (general) | Inapprox. | Any finite ratio |

| Metric TSP | 3/2 | 123/122 (unconditional) |

| Clique | n^(1-epsilon) | Unless P = NP |

| k-Median | 2.675 | 1 + 2/e |

Summary

Advanced approximation techniques form a rich toolkit. LP and SDP relaxations provide lower bounds and structure for rounding. Primal-dual methods yield combinatorial algorithms without solving LPs. Submodular optimization captures diminishing returns in many applications. The choice of technique depends on problem structure and the desired approximation-complexity tradeoff.