Streaming Algorithms

Overview

Streaming algorithms process massive data in a single pass (or few passes) using memory sublinear in the input size. The data stream model captures scenarios where data is too large to store: network traffic, sensor readings, database logs, web clicks.

Formal Model

- Input: stream of elements a_1, a_2, ..., a_m from universe [n] = {1, ..., n}

- Space: O(polylog(n, m)) or O(n^epsilon) — much less than m

- Passes: typically 1 (sometimes 2-3)

- Per-element processing time: O(polylog(n))

- Turnstile model: elements can be inserted or deleted (stream of updates to frequency vector)

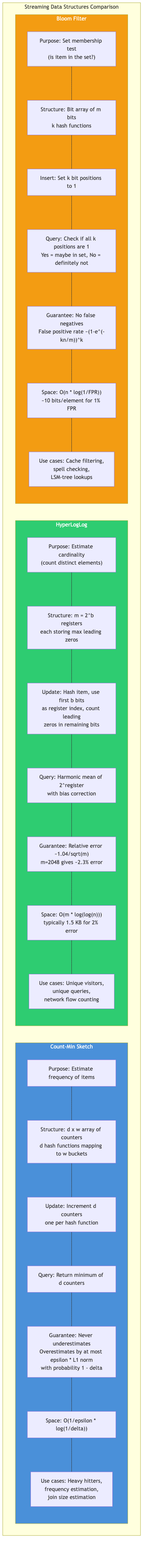

Count-Min Sketch

Estimates point queries (frequency of any element) in the turnstile model.

Structure

- d independent hash functions h_1, ..., h_d mapping [n] -> [w]

- d x w array of counters, initialized to 0

Update(item, count)

for j = 1 to d:

CMS[j][h_j(item)] += count

Query(item)

return min over j of CMS[j][h_j(item)]

Guarantees

- With d = ceil(ln(1/delta)), w = ceil(e/epsilon):

- f_hat(i) >= f(i) (never underestimates in insertion-only model)

- f_hat(i) <= f(i) + epsilon * ||f||_1 with probability >= 1 - delta

- Space: O((1/epsilon) * log(1/delta)) counters

Applications

- Network traffic monitoring (heavy hitter detection)

- Database query optimization (join size estimation)

- NLP (approximate word frequency counts)

Count Sketch

Better error bounds for skewed distributions than Count-Min Sketch.

Structure

- d hash functions h_j: [n] -> [w] and d sign functions s_j: [n] -> {-1, +1}

Update(item, count)

for j = 1 to d:

CS[j][h_j(item)] += s_j(item) * count

Query(item)

return median over j of (s_j(item) * CS[j][h_j(item)])

Guarantees

- |f_hat(i) - f(i)| <= epsilon * ||f||_2 with probability >= 1 - delta

- L2 guarantee is stronger than L1 for skewed distributions

- Space: O((1/epsilon^2) * log(1/delta))

HyperLogLog

Estimates the number of distinct elements (cardinality estimation).

Idea

Hash each element to a uniform binary string. The maximum number of leading zeros observed is an estimator of log2(distinct count).

Algorithm

- Use m = 2^b registers (b bits for bucket selection)

- For each element x, compute h(x). Use first b bits to select register j.

- Store max leading zeros of remaining bits in register[j]

- Estimate: alpha_m * m^2 / sum(2^(-register[j]))

Properties

- Space: O(m * log(log n)) bits, typically 1.5 KB for ~2% error

- Relative error: 1.04 / sqrt(m)

- Mergeable: union of two HyperLogLog sketches by taking element-wise max

- Used in Redis (PFADD/PFCOUNT), BigQuery, Presto

Corrections

- Small range correction: linear counting for small cardinalities

- Large range correction: for cardinalities near 2^32

Heavy Hitters

Find all elements with frequency >= epsilon * m (or epsilon * ||f||_1).

Misra-Gries Algorithm (Frequent)

Deterministic, 1/epsilon space.

Initialize: dictionary D (at most 1/epsilon - 1 entries)

For each element e:

if e in D: D[e] += 1

else if |D| < 1/epsilon - 1: D[e] = 1

else:

decrement all counters in D by 1

remove entries with count 0

- Every element with true frequency >= epsilon * m is in D

- Reported frequency is an underestimate; error <= epsilon * m

- Space: O(1/epsilon) counters

Space-Saving Algorithm

Replaces Misra-Gries's decrement-all with a single replacement.

Initialize: dictionary D (at most 1/epsilon entries)

For each element e:

if e in D: D[e] += 1

else if |D| < 1/epsilon: D[e] = 1

else:

find entry (e_min, c_min) with smallest count

replace with (e, c_min + 1)

- Same worst-case guarantees as Misra-Gries

- Better practical accuracy; provides both under- and over-estimates

- Can return top-k elements ordered by estimated frequency

Quantile Estimation

Estimate the phi-quantile: the element with rank ceil(phi * n) in the sorted stream.

GK Algorithm (Greenwald-Khanna)

- Deterministic, epsilon-approximate quantiles

- Space: O((1/epsilon) * log(epsilon * n))

- Maintains a sorted summary of (value, gap, delta) tuples

t-Digest (Dunning)

- Practical streaming quantile sketch

- Clusters data points with size limits based on quantile

- Clusters near the tails are kept small for accuracy at extreme percentiles

- Mergeable: important for distributed systems

- Used in Elasticsearch, Apache Spark

KLL Sketch (Karnin-Lang-Liberty)

- Near-optimal space: O((1/epsilon) * log(log(1/delta)))

- Randomized, mergeable

- Used in Apache DataSketches

Sliding Window Model

Compute statistics over only the most recent W elements of the stream.

Exponential Histograms (Datar-Gionis-Indyk-Motwani)

- Maintain counts in exponentially growing buckets

- Estimate sum of last W bits with (1+epsilon) factor

- Space: O((1/epsilon) * log^2 W)

Smooth Histograms

- Generalize exponential histograms to smooth functions

- Applicable to distinct count, frequency moments, quantiles

AMS Sketch (Alon-Matias-Szegedy)

Estimate frequency moments F_k = sum(f_i^k) where f_i is the frequency of element i.

F_2 Estimation (Second Frequency Moment)

Choose random hash s: [n] -> {-1, +1} (4-wise independent)

Maintain counter Z = sum over stream of s(a_t)

Output Z^2 as estimate of F_2

- E[Z^2] = F_2

- Var[Z^2] <= 2 * F_2^2 (with 4-wise independence)

- Average O(1/epsilon^2) independent copies, take median of O(log(1/delta)) groups

- Space: O((1/epsilon^2) * log(1/delta) * log n)

General F_k

- F_0 (distinct elements): O((1/epsilon^2) * log n) space — FM sketch, HyperLogLog

- F_1 (total count): trivially exact in O(1) space

- F_2: AMS sketch, O((1/epsilon^2) * log n) space

- F_k for k > 2: requires O(n^(1-2/k)) space (tight for k >= 3)

Johnson-Lindenstrauss Transform

Lemma: For any set of n points in R^d, there exists a map to R^k with k = O(log(n) / epsilon^2) such that all pairwise distances are preserved within (1 +/- epsilon).

Random Projection

Multiply by a k x d random matrix A (entries from N(0,1/k)):

- y = A * x

- Pr[| ||y||^2 - ||x||^2 | > epsilon * ||x||^2] <= 2 * exp(-k * epsilon^2 / 4)

Sparse JL Transforms

- Use sparse random matrices for faster projection: O(nnz) time instead of O(kd)

- Achlioptas: entries from {-1, 0, +1} with probabilities {1/6, 2/3, 1/6}

- CountSketch-based projections: O(d) time

Applications in Streaming

- Dimensionality reduction for distance-preserving sketches

- Approximate nearest neighbor search

- Compressed sensing and sparse recovery

Lower Bounds

Communication complexity provides streaming lower bounds:

| Problem | Space Lower Bound | Tight? |

|---|---|---|

| Distinct count | Omega(1/epsilon^2 + log n) | Yes |

| F_2 | Omega(1/epsilon^2 * log n) | ~Yes |

| F_k (k > 2) | Omega(n^(1-2/k)) | Yes |

| Median (exact) | Omega(n) | Yes |

| Heavy hitters | Omega(1/epsilon) | Yes |

Practical Considerations

- Mergeability: sketches that can be combined enable distributed computation

- Deletions: turnstile model sketches (Count-Min, AMS) handle deletions

- Sliding windows: require additional mechanisms beyond standard sketches

- Implementations: Apache DataSketches, Redis modules, stream-lib (Java)

Summary

Streaming algorithms enable real-time analytics on massive data using tiny memory. The key insight is that approximate answers with provable guarantees are sufficient for most applications. Sketches form the foundation: they are small, mergeable, and composable summaries that support a variety of queries.