Software-Defined Networking

SDN Architecture

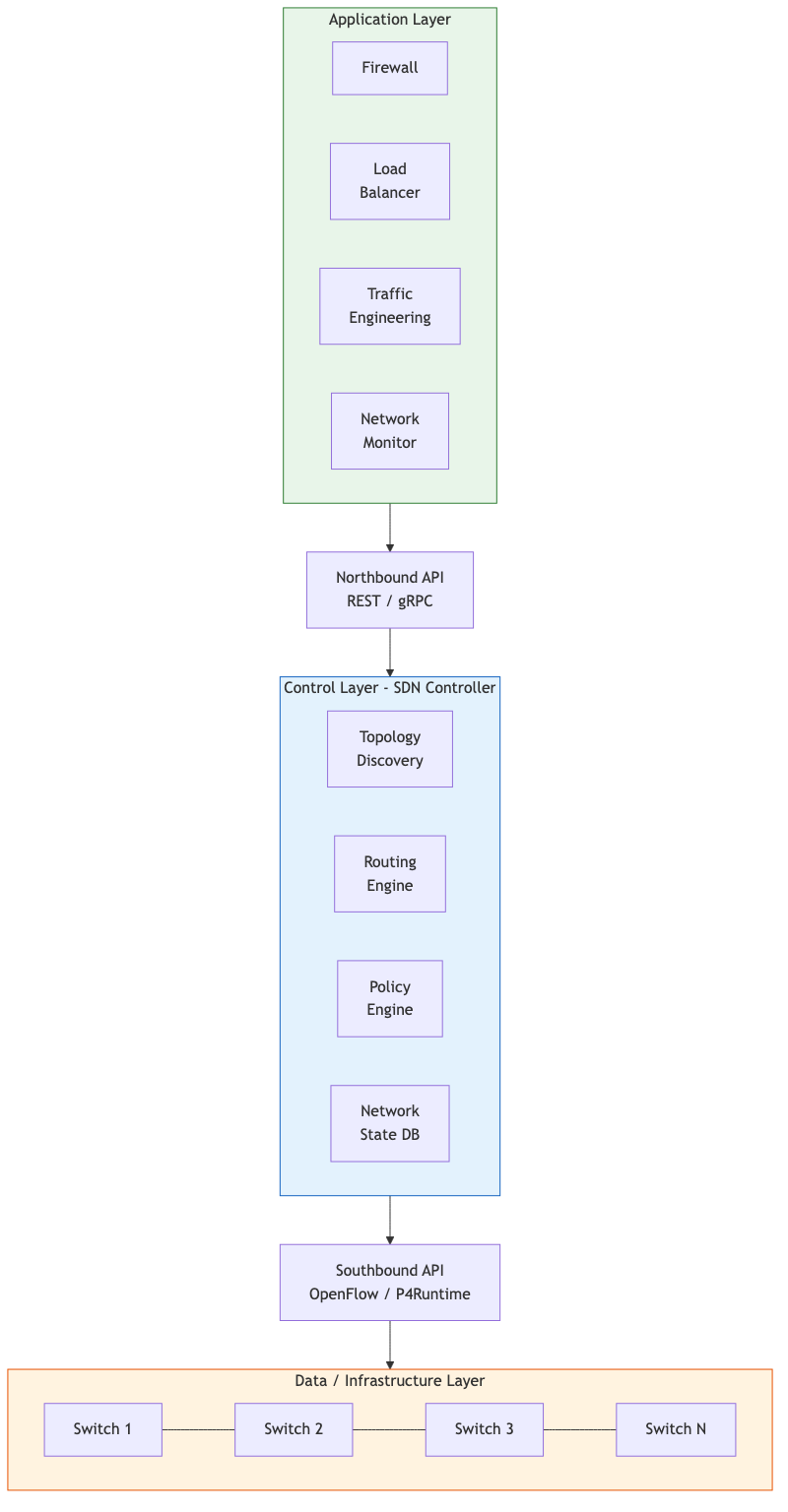

SDN decouples the control plane (routing decisions) from the data plane (packet forwarding), centralizing network intelligence in a software controller.

Three-Layer Model

+-----------------------------+
| Application Layer           |  (network apps: firewall, load balancer, traffic engineering)
+-----------------------------+
             ↕  Northbound API (e.g., REST)
+-----------------------------+
| Control Layer               |  (SDN controller / network operating system)
+-----------------------------+
             ↕  Southbound API (e.g., OpenFlow, P4Runtime)
+-----------------------------+
| Data/Infrastructure Layer   |  (switches, routers: forwarding only)
+-----------------------------+

Key Properties

| Property | Description |
|---|---|
| Centralized control | A logically centralized controller has a global network view |
| Programmability | Network behavior is defined in software, not device firmware |
| Open interfaces | Standardized APIs between layers |
| Abstraction | Applications operate on an abstract network model |

Benefits and Challenges

Benefits:

- Rapid innovation in control logic without vendor firmware changes.

- Global optimization using complete topology and traffic knowledge.

- Simplified management through automation and programmability.

- Vendor-neutral forwarding hardware.

Challenges:

- Controller scalability and availability (single point of failure if not distributed).

- Latency of controller round trips under reactive forwarding, where the first packet of a new flow must reach the controller before rules are installed.

- Security of the controller (attractive attack target).

- Migration from legacy infrastructure.

OpenFlow

OpenFlow (v1.0 through v1.5) is the original and most widely known southbound protocol for SDN.

OpenFlow Switch Model

A switch contains one or more flow tables, each with flow entries:

Flow Entry:

Match Fields | Priority | Counters | Instructions | Timeouts | Cookie

Match fields (subset): ingress port, Ethernet src/dst/type, VLAN ID, IP src/dst, IP protocol, TCP/UDP src/dst port, MPLS label.

Instructions/Actions:

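- OUTPUT(port): forward to a port.

- DROP: discard the packet. OpenFlow has no explicit drop action; a packet is dropped when its action set contains no output action.

- SET_FIELD: modify header fields.

- GROUP: apply a group table entry (multicast, ECMP, failover).

- GOTO_TABLE(n): continue processing at table n (an instruction rather than an action).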

Pipeline Processing

Packet In → Table 0 → Table 1 → ... → Table N → Execute Action Set
            (match?)  (match?)        (match?)
             ↓ miss    ↓ miss          ↓ miss
            Table-miss entry of that table (if present)

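If a packet matches no entry and the table has no table-miss entry, the packet is dropped by default (OpenFlow 1.3 and later). A table-miss entry (priority 0, all match fields wildcarded) can instead send the packet to the controller as a Packet-In message, drop it, or direct it to a later table.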
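The table walk above can be modelled in a few lines. The following is a hypothetical, simplified Python sketch. It is not a switch implementation or a controller library API. A match is a dict whose missing fields act as wildcards, and the highest-priority matching entry wins. Actions are simply appended to the action set, whereas real OpenFlow distinguishes Apply-Actions from Write-Actions and keeps one action per type in the set.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class FlowEntry:
    """Hypothetical flow entry: match fields, priority, actions, counter."""
    match: dict                        # field -> value; absent fields are wildcards
    priority: int
    actions: List[str] = field(default_factory=list)
    goto_table: Optional[int] = None   # models the GOTO_TABLE(n) instruction
    packet_count: int = 0              # per-entry counter

    def matches(self, pkt: dict) -> bool:
        return all(pkt.get(k) == v for k, v in self.match.items())


def process(pipeline: List[List[FlowEntry]], pkt: dict) -> List[str]:
    """Walk the tables from table 0 and return the final action set.

    An empty list means the packet is dropped; ["CONTROLLER"] models Packet-In.
    """
    action_set: List[str] = []
    table_id = 0
    while True:
        hits = [e for e in pipeline[table_id] if e.matches(pkt)]
        if not hits:
            return []                  # no match and no table-miss entry: drop
        entry = max(hits, key=lambda e: e.priority)
        entry.packet_count += 1
        action_set.extend(entry.actions)
        if entry.goto_table is None:
            return action_set          # end of pipeline: execute the action set
        if entry.goto_table <= table_id:
            raise ValueError("GOTO_TABLE must point to a later table")
        table_id = entry.goto_table


table0 = [
    FlowEntry({"vlan_id": 10}, priority=100, goto_table=1),
    FlowEntry({}, priority=0, actions=["CONTROLLER"]),   # table-miss entry
]
table1 = [
    FlowEntry({"ip_dst": "10.0.0.2"}, priority=200, actions=["OUTPUT:2"]),
    # no table-miss entry in table 1
]
pipeline = [table0, table1]

print(process(pipeline, {"vlan_id": 10, "ip_dst": "10.0.0.2"}))  # ['OUTPUT:2']
print(process(pipeline, {"vlan_id": 20}))                        # ['CONTROLLER']
print(process(pipeline, {"vlan_id": 10, "ip_dst": "10.0.0.9"}))  # []
```

The third packet is dropped because it matched in table 0, but table 1 has neither a matching entry nor a table-miss entry.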

OpenFlow Messages

| Category | Messages |
|---|---|
| Controller→Switch | Features Request, Flow Mod, Packet Out, Barrier Request |
| Switch→Controller (asynchronous) | Packet In, Flow Removed, Port Status, Error |
| Symmetric | Hello, Echo Request/Reply |
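Every message in the table starts with the same 8-byte OpenFlow header: version, type, length, and transaction ID (xid). The sketch below packs and parses that header with Python's `struct` module and answers an Echo Request, one of the symmetric messages. The type codes are the OpenFlow 1.3 values. The `encode`, `decode`, and `handle` helpers are hypothetical and ignore all message bodies except the echo payload.

```python
import struct

# Message type codes from the OpenFlow 1.3 specification (subset).
OFP_VERSION_1_3 = 0x04
OFPT_HELLO = 0
OFPT_ERROR = 1
OFPT_ECHO_REQUEST = 2
OFPT_ECHO_REPLY = 3
OFPT_FEATURES_REQUEST = 5
OFPT_PACKET_IN = 10
OFPT_FLOW_REMOVED = 11
OFPT_PORT_STATUS = 12
OFPT_PACKET_OUT = 13
OFPT_FLOW_MOD = 14
OFPT_BARRIER_REQUEST = 20

# Common header: version (u8), type (u8), length (u16), xid (u32), big-endian.
OFP_HEADER = struct.Struct("!BBHI")


def encode(msg_type: int, xid: int, body: bytes = b"") -> bytes:
    """Hypothetical helper: prepend the 8-byte header to a message body."""
    length = OFP_HEADER.size + len(body)   # length covers header + body
    return OFP_HEADER.pack(OFP_VERSION_1_3, msg_type, length, xid) + body


def decode(data: bytes):
    """Hypothetical helper: return (version, type, xid, body)."""
    version, msg_type, length, xid = OFP_HEADER.unpack_from(data)
    return version, msg_type, xid, data[OFP_HEADER.size:length]


def handle(data: bytes):
    """Answer an Echo Request with an Echo Reply; ignore everything else."""
    _, msg_type, xid, body = decode(data)
    if msg_type == OFPT_ECHO_REQUEST:
        # The reply reuses the request's xid and returns its payload unchanged.
        return encode(OFPT_ECHO_REPLY, xid, body)
    return None


print(encode(OFPT_HELLO, xid=1).hex())                        # 0400000800000001
print(decode(handle(encode(OFPT_ECHO_REQUEST, 7, b"ping"))))  # (4, 3, 7, b'ping')
```

Echo messages are commonly used as a liveness check on the controller-switch connection, so matching the xid lets the sender pair each reply with its request.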

OpenFlow Limitations

- Fixed match fields (not extensible without protocol revision).

- Limited programmability of the data plane itself.

- Performance overhead of Packet-In for unknown flows.

- Complexity of multi-table pipelines.

SDN Controllers

ONOS (Open Network Operating System)

- Designed for service provider and mission-critical networks.

- Distributed architecture using Atomix for distributed state management.

- Intent framework: applications express desired connectivity, ONOS computes forwarding rules.

- Written in Java; supports OpenFlow, gNMI, P4Runtime, NETCONF.
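The following sketch illustrates the idea behind the intent framework. It compiles a host-to-host connectivity intent into per-switch forwarding rules, using the controller's global topology view. It is a hypothetical Python model, not the ONOS Java API. The topology, host attachment points, MAC addresses, and rule format are invented for the example, and plain BFS shortest path stands in for ONOS's own path computation.

```python
from collections import deque

# Hypothetical global view held by the controller: switch -> {neighbor: out port}.
TOPOLOGY = {
    "s1": {"s2": 1, "s3": 2},
    "s2": {"s1": 1, "s4": 2},
    "s3": {"s1": 1, "s4": 2},
    "s4": {"s2": 1, "s3": 2},
}
# Hypothetical host attachment points: host -> (switch, port, MAC).
HOSTS = {
    "h1": ("s1", 3, "00:00:00:00:00:01"),
    "h2": ("s4", 3, "00:00:00:00:00:02"),
}


def shortest_path(src: str, dst: str) -> list:
    """BFS over switches; returns the switch sequence from src to dst."""
    prev = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            break
        for nbr in TOPOLOGY[node]:
            if nbr not in prev:
                prev[nbr] = node
                queue.append(nbr)
    if dst not in prev:
        raise ValueError(f"no path from {src} to {dst}")
    path, node = [], dst
    while node is not None:
        path.append(node)
        node = prev[node]
    return path[::-1]


def compile_intent(src_host: str, dst_host: str) -> dict:
    """Compile 'src_host can reach dst_host' into per-switch (match, action) rules."""
    src_sw = HOSTS[src_host][0]
    dst_sw, dst_port, dst_mac = HOSTS[dst_host]
    path = shortest_path(src_sw, dst_sw)
    rules = {}
    for i, sw in enumerate(path):
        # Forward toward the next switch, or to the host port on the last hop.
        out = TOPOLOGY[sw][path[i + 1]] if i + 1 < len(path) else dst_port
        rules[sw] = ({"eth_dst": dst_mac}, f"OUTPUT:{out}")
    return rules


for sw, rule in compile_intent("h1", "h2").items():
    print(sw, rule)
```

The application only states which hosts should communicate. Because the controller owns the path computation, it can recompute the rules when the topology changes without involving the application. A real connectivity intent would usually also compile the reverse direction.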

OpenDaylight (ODL)

- Modular, plugin-based architecture using OSGi/Karaf.

- Model-Driven Service Abstraction Layer (MD-SAL) for unified data model.

- Supports a wide range of southbound protocols.

- Strong enterprise and service provider ecosystem.

Comparison

| Feature | ONOS | OpenDaylight |
|---|---|---|
| Target | SP/carrier-grade | Enterprise/multi-protocol |
| Clustering | Built-in (Atomix/Raft) | Akka-based clustering |
| Intent framework | Core feature | Available via plugins |
| Community | ON.Lab / Linux Foundation | Linux Foundation |
| Data store | Distributed, strongly consistent | In-memory + persistence |

Controller Scalability

Distributed controllers partition the network among instances:

- Flat distribution: Each controller manages a subset of switches, and the controllers synchronize state with one another.

- Hierarchical: Local controllers handle fast decisions; root controller handles global policy.

- Typical capacity: a single instance handles roughly 1-6 million flow setups per second, though this varies widely by implementation, hardware, and workload.
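In a flat design, one simple way to give each controller instance its own subset of switches is rendezvous (highest-random-weight) hashing. This technique is swapped in for illustration; ONOS and OpenDaylight use their own mastership mechanisms. The controller names and switch IDs are hypothetical. The useful property here is that when an instance is removed, only the switches that instance owned get reassigned.

```python
import hashlib
from typing import Dict, Iterable, List


def weight(controller: str, switch: str) -> int:
    """Deterministic pseudo-random score for a (controller, switch) pair."""
    digest = hashlib.sha256(f"{controller}|{switch}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def master_for(switch: str, controllers: List[str]) -> str:
    """The controller with the highest score becomes the switch's master."""
    return max(controllers, key=lambda c: weight(c, switch))


def assign(switches: Iterable[str], controllers: List[str]) -> Dict[str, str]:
    return {sw: master_for(sw, controllers) for sw in switches}


switches = [f"of:{i:016x}" for i in range(100)]   # hypothetical datapath IDs
before = assign(switches, ["c1", "c2", "c3"])
after = assign(switches, ["c1", "c3"])            # instance c2 fails
moved = [sw for sw in switches if before[sw] != after[sw]]
# Only switches that c2 used to master change owner.
print(len(moved), all(before[sw] == "c2" for sw in moved))
```

Every instance can compute the same assignment independently from the membership list, which avoids a coordination round for the common case. The state each switch's master holds still has to be synchronized or rebuilt after a failover.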

P4: Programming Protocol-Independent Packet Processors

P4 is a domain-specific language for programming the data plane, making the forwarding behavior itself programmable (not just the flow rules).

P4 Architecture

P4 Program → P4 Compiler → Target-specific binary → Programmable switch (ASIC, FPGA, SmartNIC, or software)

Core P4 Abstractions

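// Sketch for the v1model architecture (e.g., BMv2 simple_switch), compiled with p4c.
#include <core.p4>
#include <v1model.p4>

// 1. Header definitions
header ethernet_t {
    bit<48> dstAddr;
    bit<48> srcAddr;
    bit<16> etherType;
}

header ipv4_t {
    bit<4>  version;
    bit<4>  ihl;
    bit<8>  tos;
    bit<16> totalLen;
    bit<16> identification;
    bit<3>  flags;
    bit<13> fragOffset;
    bit<8>  ttl;
    bit<8>  protocol;
    bit<16> hdrChecksum;
    bit<32> srcAddr;
    bit<32> dstAddr;
}

struct headers  { ethernet_t ethernet; ipv4_t ipv4; }
struct metadata { }

// 2. Parser (state machine)
parser MyParser(packet_in pkt, out headers hdr,
                inout metadata meta, inout standard_metadata_t std_meta) {
    state start {
        pkt.extract(hdr.ethernet);
        transition select(hdr.ethernet.etherType) {
            0x0800:  parse_ipv4;
            default: accept;
        }
    }
    state parse_ipv4 {
        pkt.extract(hdr.ipv4);
        transition accept;
    }
}

control MyIngress(inout headers hdr, inout metadata meta,
                  inout standard_metadata_t std_meta) {
    action drop() { mark_to_drop(std_meta); }
    // Parameters come from each table entry installed by the control plane.
    // A full router would also decrement TTL and update the checksum.
    action forward(bit<48> dstMac, bit<9> port) {
        hdr.ethernet.dstAddr = dstMac;
        std_meta.egress_spec = port;
    }

    // 3. Match-action tables
    table ipv4_lpm {
        key = { hdr.ipv4.dstAddr: lpm; }
        actions = { forward; drop; }
        size = 1024;
        default_action = drop();
    }

    // 4. Control flow (apply tables); non-IPv4 traffic is dropped here
    apply {
        if (hdr.ipv4.isValid()) { ipv4_lpm.apply(); } else { drop(); }
    }
}

// 5. Deparser (reassemble packet); emit() skips headers that are not valid
control MyDeparser(packet_out pkt, in headers hdr) {
    apply {
        pkt.emit(hdr.ethernet);
        pkt.emit(hdr.ipv4);
    }
}

// Remaining v1model blocks are left empty in this sketch.
control MyVerifyChecksum(inout headers hdr, inout metadata meta) { apply { } }
control MyEgress(inout headers hdr, inout metadata meta,
                 inout standard_metadata_t std_meta) { apply { } }
control MyComputeChecksum(inout headers hdr, inout metadata meta) { apply { } }

V1Switch(MyParser(), MyVerifyChecksum(), MyIngress(), MyEgress(),
         MyComputeChecksum(), MyDeparser()) main;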
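The parser and the `lpm` match kind can be mimicked in ordinary code to show what the switch does per packet. The hypothetical Python model below parses Ethernet, then parses IPv4 only when `etherType` is 0x0800 (mirroring the parser's `select`). It then performs a longest-prefix-match lookup. The table entries stand in for what a control plane would install at runtime, and the drop default action is an assumption of the sketch.

```python
import ipaddress
import struct


def parse(frame: bytes) -> dict:
    """Mirror the P4 parser: Ethernet, then IPv4 only if etherType is 0x0800."""
    dst, src, ether_type = struct.unpack_from("!6s6sH", frame, 0)
    hdr = {"ethernet": {"dstAddr": dst, "srcAddr": src, "etherType": ether_type}}
    if ether_type == 0x0800:  # state start -> parse_ipv4
        (ver_ihl, tos, total_len, ident, flags_frag,
         ttl, proto, csum, s, d) = struct.unpack_from("!BBHHHBBH4s4s", frame, 14)
        hdr["ipv4"] = {
            "version": ver_ihl >> 4, "ihl": ver_ihl & 0x0F, "tos": tos,
            "totalLen": total_len, "identification": ident,
            "flags": flags_frag >> 13, "fragOffset": flags_frag & 0x1FFF,
            "ttl": ttl, "protocol": proto, "hdrChecksum": csum,
            "srcAddr": ipaddress.IPv4Address(s),
            "dstAddr": ipaddress.IPv4Address(d),
        }
    return hdr  # default: accept with only Ethernet extracted


class LpmTable:
    """Longest-prefix-match table keyed on an IPv4 destination address."""

    def __init__(self, size=1024, default_action=("drop", None)):
        self.size = size
        self.default_action = default_action
        self.entries = []

    def add(self, prefix: str, action: str, param=None):
        if len(self.entries) >= self.size:
            raise OverflowError("table is full")
        self.entries.append((ipaddress.IPv4Network(prefix), action, param))

    def lookup(self, addr: ipaddress.IPv4Address):
        best = None
        for net, action, param in self.entries:
            if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
                best = (net, action, param)
        return (best[1], best[2]) if best else self.default_action


ipv4_lpm = LpmTable()
ipv4_lpm.add("10.0.0.0/8", "forward", 1)    # param = egress port
ipv4_lpm.add("10.0.1.0/24", "forward", 2)

frame = (bytes(12) + b"\x08\x00"                 # zeroed MACs, etherType IPv4
         + bytes([0x45, 0]) + struct.pack("!HHH", 20, 0, 0)
         + bytes([64, 6, 0, 0])                  # ttl, protocol (TCP), checksum
         + bytes([192, 168, 0, 1]) + bytes([10, 0, 1, 5]))
hdr = parse(frame)
if "ipv4" in hdr:                                # like hdr.ipv4.isValid()
    print(ipv4_lpm.lookup(hdr["ipv4"]["dstAddr"]))   # ('forward', 2)
```

Both entries cover 10.0.1.5, but the /24 is more specific, so it wins. A hardware target performs the same selection with TCAM priorities or trie structures instead of a linear scan.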

P4 vs. OpenFlow

| Aspect | OpenFlow | P4 |
|---|---|---|
| Header awareness | Fixed set of known headers | User-defined arbitrary headers |
| Forwarding model | Vendor-defined pipeline | Programmer-defined pipeline |
| New protocols | Requires spec update | Just write new parser/tables |
| Target | OpenFlow-compatible switches | Any programmable target |

Network Functions Virtualization (NFV)

NFV replaces dedicated network appliances (firewalls, load balancers, NATs) with software running on commodity servers.

NFV Architecture (ETSI)

+-------------------------------------------+
| OSS/BSS                                   |
+-------------------------------------------+
| NFV MANO                                  |
|   ├── NFV Orchestrator (NFVO)             |
|   ├── VNF Manager (VNFM)                  |
|   └── Virtual Infrastructure Manager (VIM)|
+-------------------------------------------+
| NFVI: compute, storage, network           |
| (servers, switches, hypervisors)          |
+-------------------------------------------+

- VNF (Virtual Network Function): Software implementation of a network function (e.g., vFirewall, vRouter).

- NFVI: Infrastructure that hosts VNFs.

- MANO: Management and Orchestration framework, comprising the NFVO, VNFM, and VIM.

Performance Considerations

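- The standard kernel network stack (interrupts, system calls, per-packet copies) often cannot sustain line rate for VNFs, especially with small packets.

- Common remedies: DPDK (user-space kernel bypass with poll-mode drivers), SR-IOV (hardware-assisted I/O virtualization), and SmartNICs (offloading processing to the NIC).

- Container-based network functions (CNFs) are increasingly replacing VM-based VNFs because of their lower overhead.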
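The line-rate problem is easiest to see as a time budget. The sketch below computes how many minimum-size (64-byte) Ethernet frames per second a link carries and how much time that leaves per packet. Each frame also occupies an 8-byte preamble/start-of-frame delimiter and a 12-byte inter-frame gap on the wire, and the calculation counts both.

```python
# On the wire, every frame also carries an 8-byte preamble/SFD and a
# 12-byte inter-frame gap, so a 64-byte frame occupies 84 bytes = 672 bits.
WIRE_OVERHEAD_BYTES = 8 + 12


def packets_per_second(link_gbps: float, frame_bytes: int = 64) -> float:
    bits_per_frame = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return link_gbps * 1e9 / bits_per_frame


def ns_per_packet(link_gbps: float, frame_bytes: int = 64) -> float:
    return 1e9 / packets_per_second(link_gbps, frame_bytes)


for gbps in (10, 40, 100):
    print(f"{gbps:>3} Gb/s: {packets_per_second(gbps) / 1e6:6.2f} Mpps, "
          f"{ns_per_packet(gbps):5.2f} ns per packet")
```

At 10 Gb/s this gives about 14.88 million packets per second, or 67.2 ns per packet. At 100 Gb/s it gives about 148.81 Mpps and 6.72 ns. On an assumed 3 GHz core, 67.2 ns is roughly 200 clock cycles. That budget leaves little room for per-packet interrupts, system calls, and copies, which kernel-bypass frameworks such as DPDK avoid.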

Service Function Chaining (SFC)

SFC steers traffic through an ordered sequence of network functions.

Traffic → Classifier → Firewall (SF1) → IDS (SF2) → Load Balancer (SF3) → Destination

NSH (Network Service Header) - RFC 8300

| Base Header (32 bits) | Service Path Header: SPI (24 bits) + SI (8 bits) | Context Headers (metadata) |

- SPI (Service Path Identifier): Identifies the chain.

- SI (Service Index): Decremented at each SF; indicates position in chain.

- SFC-aware SFs process and decrement SI; SFC-unaware SFs use a proxy.
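The Service Path Header is a single 32-bit word, so the SPI/SI mechanics fit in a few lines. The sketch packs and unpacks the 24-bit SPI and 8-bit SI as RFC 8300 lays them out, and each hypothetical SFC-aware function decrements the SI. The base header, the context headers, and the forwarder's next-hop lookup are omitted. The function names and the SPI value 42 are illustrative.

```python
import struct


def pack_sph(spi: int, si: int) -> bytes:
    """Service Path Header: SPI in the top 24 bits, SI in the low 8 bits."""
    if not (0 <= spi < 1 << 24 and 0 <= si < 1 << 8):
        raise ValueError("SPI must fit in 24 bits and SI in 8 bits")
    return struct.pack("!I", (spi << 8) | si)


def unpack_sph(data: bytes) -> tuple:
    (word,) = struct.unpack("!I", data[:4])
    return word >> 8, word & 0xFF


def service_function(sph: bytes, name: str, trace: list) -> bytes:
    """Hypothetical SFC-aware SF: record its position, then decrement SI."""
    spi, si = unpack_sph(sph)
    trace.append((name, spi, si))
    if si == 0:
        raise ValueError("SI is already 0; this sketch will not wrap it")
    return pack_sph(spi, si - 1)


trace = []
sph = pack_sph(spi=42, si=255)             # classifier picks the chain and start SI
for sf in ("firewall", "ids", "load_balancer"):
    sph = service_function(sph, sf, trace)
print(trace)  # [('firewall', 42, 255), ('ids', 42, 254), ('load_balancer', 42, 253)]
print(unpack_sph(sph))                     # (42, 252)
```

The SPI stays constant along the chain while the SI counts down. A service function forwarder can therefore use the (SPI, SI) pair after each hop to pick the next function in the chain.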

Intent-Based Networking (IBN)

IBN lets operators state high-level business intent and automates its translation into device-level configuration.

IBN Workflow

Intent: "Server A must be able to reach Database B on port 3306 with encrypted traffic"

↓

Translation Engine (decomposes intent into network policies)

↓

Activation (configures switches, firewalls, VPNs)

↓

Assurance (continuously monitors compliance, remediates drift)
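The translation, activation, and assurance stages form a closed loop. The hypothetical Python sketch below encodes the example intent and derives per-device desired state. It pushes that state into an in-memory stand-in for the network, then detects and remediates drift. The device names (`fw1`, `vpn-gw`) and the rule tuples are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intent:
    src: str
    dst: str
    port: int
    encrypted: bool


def translate(intent: Intent) -> dict:
    """Translation: decompose the intent into per-device desired rules."""
    desired = {"fw1": {("permit", intent.src, intent.dst, "tcp", intent.port)}}
    if intent.encrypted:
        desired["vpn-gw"] = {("ipsec", intent.src, intent.dst)}
    return desired


def activate(desired: dict, network: dict) -> None:
    """Activation: push rules (here, merge them into an in-memory network)."""
    for device, rules in desired.items():
        network.setdefault(device, set()).update(rules)


def assure(desired: dict, network: dict) -> dict:
    """Assurance: report desired rules missing from each device (drift)."""
    drift = {}
    for device, rules in desired.items():
        missing = rules - network.get(device, set())
        if missing:
            drift[device] = missing
    return drift


intent = Intent("server-a", "db-b", 3306, encrypted=True)
network = {}
desired = translate(intent)
activate(desired, network)
print(assure(desired, network))    # {}  (compliant)
network["vpn-gw"].clear()          # simulate an out-of-band change
drift = assure(desired, network)
print(drift)                       # {'vpn-gw': {('ipsec', 'server-a', 'db-b')}}
activate(drift, network)           # remediate by re-pushing only what is missing
print(assure(desired, network))    # {}
```

Keeping the desired state separate from the observed state is what makes assurance possible: drift is just the set difference between the two.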

Intent Levels

| Level | Example |
|---|---|
| Business | "Finance department traffic is isolated" |
| Service | "VLAN 100 has no path to VLAN 200 except via the firewall" |
| Network | "ACL on switch X, port 5: deny traffic from 10.0.1.0/24 to 10.0.2.0/24" |

Network Verification

Network verification applies formal methods to check that a network's forwarding state or configuration satisfies correctness properties.

Approaches

| Tool/Approach | Method | Checks |
|---|---|---|
| Header Space Analysis (HSA) | Models forwarding as geometric operations on header space | Reachability, loops, isolation |
| Veriflow | Real-time verification of rule insertions | Invariant violations on updates |
| Batfish | Configuration analysis (offline) | Reachability, routing correctness |
| Minesweeper | SMT-based analysis of control plane | Policy compliance under all failures |
| P4v | Verification of P4 programs | Data plane program correctness |
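HSA's core operation is set algebra on wildcard expressions. In the minimal sketch below, a set of headers is a ternary string over {0, 1, x}, where x matches either bit value. Intersecting two expressions tells you whether some header can satisfy both, such as a traffic class and a rule that forwards toward a restricted segment. This toy uses 4-bit headers and single expressions with hypothetical values. HSA itself handles full header lengths, unions of expressions, and per-device transfer functions.

```python
from typing import Optional


def intersect(a: str, b: str) -> Optional[str]:
    """Intersect two wildcard expressions over {0, 1, x}; None means empty."""
    if len(a) != len(b):
        raise ValueError("expressions must cover the same header bits")
    out = []
    for p, q in zip(a, b):
        if p == "x":
            out.append(q)          # a wildcard defers to the other bit
        elif q == "x" or p == q:
            out.append(p)
        else:
            return None            # 0 vs 1: no header satisfies both
    return "".join(out)


def count(expr: Optional[str]) -> int:
    """Number of concrete headers covered by an expression."""
    return 0 if expr is None else 2 ** expr.count("x")


traffic_class = "1x0x"   # hypothetical headers originating in one segment
forward_rule = "10xx"    # hypothetical rule forwarding toward another segment
overlap = intersect(traffic_class, forward_rule)
print(overlap, count(overlap))        # 100x 2   (isolation is violated)
print(intersect("1xxx", "0xxx"))      # None     (disjoint header sets)
```

A non-empty intersection is a concrete witness: any header in `100x` is a packet that would cross the boundary the isolation policy forbids.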

Data Plane vs. Control Plane Verification

- Data plane: Verify the forwarding state at a point in time (fast, snapshot-based).

- Control plane: Verify that routing protocols produce correct forwarding under all possible failures (more thorough, computationally expensive).
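A naive way to check a property "under all possible failures" is to enumerate failure scenarios and re-run routing for each one. This also shows why control-plane verification is expensive. With E links there are C(E, 0) + C(E, 1) + ... + C(E, k) scenarios for up to k failures. The hypothetical sketch below applies this brute-force approach to a four-router topology. BFS connectivity stands in for a real routing protocol. SMT-based tools such as Minesweeper encode failures symbolically instead of enumerating them.

```python
from collections import deque
from itertools import combinations

# Hypothetical topology: undirected links between routers.
LINKS = {("r1", "r2"), ("r2", "r3"), ("r1", "r3"), ("r3", "r4")}


def reachable(links, src: str, dst: str) -> bool:
    """BFS connectivity, standing in for the result of a routing protocol."""
    adj = {}
    for a, b in links:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    seen, queue = {src}, deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return True
        for nbr in adj.get(node, ()):
            if nbr not in seen:
                seen.add(nbr)
                queue.append(nbr)
    return False


def violations(src: str, dst: str, k: int) -> list:
    """Every set of at most k failed links that breaks src -> dst reachability."""
    bad = []
    for n in range(k + 1):
        for failed in combinations(sorted(LINKS), n):
            if not reachable(LINKS - set(failed), src, dst):
                bad.append(failed)
    return bad


print(violations("r1", "r4", k=1))   # [(('r3', 'r4'),)]
print(violations("r1", "r3", k=1))   # []
```

The single violation is the r3-r4 link, the only path to r4. The triangle r1-r2-r3 survives any single failure. The number of scenarios grows combinatorially with k and the network size, which is why exhaustive enumeration does not scale.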

Practical Verification Checks

- Reachability: Can host A reach host B?

- Isolation: Is VLAN X traffic guaranteed never to reach VLAN Y?

- Loop freedom: Are there any forwarding loops?

- Black holes: Are packets for any destination silently dropped because no forwarding entry matches them?

- Waypointing: Does traffic always traverse a required middlebox?

- Equivalence: Do two configurations produce identical forwarding behavior?
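Most of these checks reduce to following forwarding entries through a snapshot. The hypothetical sketch below stores each device's next hop for one destination prefix. It traces a packet from a starting device and classifies the outcome as delivered, looping, or black-holed. Waypointing is checked by looking for the middlebox on the path, and equivalence by comparing traces from two snapshots. Device names and the snapshot format are invented. Real tools also handle all prefixes, ECMP, and header rewrites.

```python
# Hypothetical snapshot for ONE destination prefix: device -> next hop.
# "DELIVER" means the device owns the prefix; a missing entry means no route.
SNAPSHOT = {
    "a": "b", "b": "fw", "fw": "c", "c": "DELIVER",
    "d": "e", "e": "d",              # d <-> e forwarding loop
    "f": "g",                        # g has no entry: black hole
}


def trace(start: str, next_hop: dict):
    """Follow next hops; outcome is 'delivered', 'loop', or 'black hole'."""
    path, seen, node = [start], {start}, start
    while True:
        nh = next_hop.get(node)
        if nh is None:
            return path, "black hole"
        if nh == "DELIVER":
            return path, "delivered"
        if nh in seen:
            return path + [nh], "loop"
        path.append(nh)
        seen.add(nh)
        node = nh


def check(start: str, next_hop: dict, waypoint=None) -> dict:
    path, outcome = trace(start, next_hop)
    return {"reachable": outcome == "delivered", "outcome": outcome,
            "waypoint_ok": waypoint is None or waypoint in path}


def equivalent(snap1: dict, snap2: dict, starts) -> bool:
    """Same path and outcome from every start device in both snapshots."""
    return all(trace(s, snap1) == trace(s, snap2) for s in starts)


print(trace("a", SNAPSHOT))   # (['a', 'b', 'fw', 'c'], 'delivered')
print(trace("d", SNAPSHOT))   # (['d', 'e', 'd'], 'loop')
print(trace("f", SNAPSHOT))   # (['f', 'g'], 'black hole')
print(equivalent(SNAPSHOT, dict(SNAPSHOT, b="c"), ["a"]))  # False: skips fw
```

The last line changes one next hop so that traffic from `a` bypasses the firewall. The equivalence check catches this, and so does a waypoint check for `fw` on the new snapshot.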