Generative Models

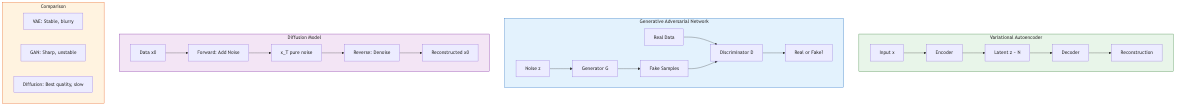

Variational Autoencoders (VAE)

Architecture and Objective

A VAE (Kingma and Welling, 2014) consists of an encoder qφ(z|x) that maps data x to a distribution over latent variables z, and a decoder pθ(x|z) that maps latent samples back to data space. The objective maximizes a lower bound on the log-likelihood:

ELBO (Evidence Lower Bound):

log p(x) ≥ E_{q_φ(z|x)}[log p_θ(x|z)] - D_KL(q_φ(z|x) || p(z))

- Reconstruction term: E[log pθ(x|z)] — measures how well the decoder reconstructs x from z. Encourages accurate generation.

- KL regularization: D_KL(qφ(z|x) || p(z)) — pulls the approximate posterior toward the prior p(z) (typically N(0,I)). Encourages a smooth, structured latent space.

The gap between log p(x) and the ELBO equals D_KL(qφ(z|x) || pθ(z|x)) — the approximation error of the variational posterior.
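A common setup is a diagonal Gaussian encoder qφ(z|x) = N(μ, diag(σ²)) with prior p(z) = N(0, I). In that case the KL term has a closed form, and only the reconstruction term needs sampling. The sketch below assumes this setup plus a Bernoulli decoder for binary data. `decode_logits` is a hypothetical stand-in for the decoder network, and the code also checks the closed-form KL against a Monte Carlo estimate.

```python
import numpy as np

def kl_to_standard_normal(mu, log_var):
    """Closed-form D_KL(N(mu, diag(exp(log_var))) || N(0, I)), summed over latent dims."""
    return 0.5 * np.sum(mu**2 + np.exp(log_var) - 1.0 - log_var, axis=-1)

def log_normal_diag(z, mu, log_var):
    """Log-density of a diagonal Gaussian, summed over the last axis."""
    return -0.5 * np.sum(np.log(2 * np.pi) + log_var + (z - mu) ** 2 / np.exp(log_var), axis=-1)

def kl_monte_carlo(mu, log_var, n_samples, rng):
    """Unbiased estimate of E_q[log q(z|x) - log p(z)] from samples z ~ q."""
    z = mu + np.exp(0.5 * log_var) * rng.standard_normal((n_samples, mu.shape[-1]))
    zeros = np.zeros_like(mu)
    return np.mean(log_normal_diag(z, mu, log_var) - log_normal_diag(z, zeros, zeros))

def bernoulli_log_lik(x, logits):
    """log p(x|z) for binary x under independent Bernoulli(sigmoid(logits)) outputs."""
    # log sigmoid(l) = -log(1 + e^{-l});  log(1 - sigmoid(l)) = -log(1 + e^{l})
    return np.sum(-x * np.logaddexp(0.0, -logits) - (1 - x) * np.logaddexp(0.0, logits), axis=-1)

def elbo(x, mu, log_var, decode_logits, rng, n_samples=8):
    """Monte Carlo ELBO for one example: E_q[log p(x|z)] - KL(q(z|x) || p(z))."""
    z = mu + np.exp(0.5 * log_var) * rng.standard_normal((n_samples, mu.shape[-1]))
    recon = np.mean(bernoulli_log_lik(x[None, :], decode_logits(z)))
    return recon - kl_to_standard_normal(mu, log_var)
```

With a Bernoulli decoder the reconstruction term is at most 0 and the KL is at least 0, so this ELBO is never positive.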

Reparameterization Trick

Backpropagation through stochastic sampling z ~ qφ(z|x) requires the reparameterization trick: express z = μφ(x) + σφ(x) ⊙ ε, where ε ~ N(0,I). The stochasticity is moved to ε, and the gradient flows through μ and σ deterministically. This enables end-to-end training via standard backpropagation.
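The following is a small numerical sketch of why the trick works. It assumes a scalar Gaussian q and a differentiable f whose derivative `f_prime` is known. Once z = μ + σ·ε, the gradients of E[f(z)] with respect to μ and σ become ordinary chain-rule expectations over ε. An autodiff framework applies the same chain rule automatically.

```python
import numpy as np

def reparam_sample(mu, sigma, eps):
    # z = mu + sigma * eps: all randomness lives in eps, so for fixed eps
    # z is a deterministic, differentiable function of (mu, sigma).
    return mu + sigma * eps

def pathwise_grads(f_prime, mu, sigma, n_samples=100_000, seed=0):
    """Monte Carlo estimates of d/dmu and d/dsigma of E_{z ~ N(mu, sigma^2)}[f(z)].

    Chain rule through z = mu + sigma * eps:
      dz/dmu = 1       ->  grad_mu    = E[f'(z)]
      dz/dsigma = eps  ->  grad_sigma = E[f'(z) * eps]
    """
    rng = np.random.default_rng(seed)
    eps = rng.standard_normal(n_samples)
    z = reparam_sample(mu, sigma, eps)
    return np.mean(f_prime(z)), np.mean(f_prime(z) * eps)

# Example: f(z) = z^2, so E[f(z)] = mu^2 + sigma^2 with exact gradients (2*mu, 2*sigma) = (3.0, 1.0)
g_mu, g_sigma = pathwise_grads(lambda z: 2.0 * z, mu=1.5, sigma=0.5)
```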

Posterior Collapse

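Posterior collapse is a failure mode in which the encoder ignores the input, producing qφ(z|x) ≈ p(z) for all x, and the decoder in turn ignores z. It is most likely when the decoder is expressive enough (e.g., autoregressive) to model p(x) on its own. The KL term is then zero, which minimizes that part of the loss, but the latent space carries no information about x. Mitigations include:

- KL annealing: gradually increase the KL weight from 0 to 1.
- Free bits: apply no KL penalty below a minimum number of nats per latent dimension.
- Limiting decoder capacity.
- δ-VAE: constrain the posterior family so the KL cannot fall below a minimum δ.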
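Two of these mitigations are simple changes to the loss. The sketch below assumes a linear annealing schedule and a per-dimension free-bits threshold. The schedule shape and the default values (`warmup_steps`, `free_bits`) are illustrative assumptions, not recommended settings.

```python
import numpy as np

def kl_weight(step, warmup_steps):
    """Linear KL annealing: the weight rises from 0 to 1 over warmup_steps, then stays at 1."""
    return min(1.0, step / warmup_steps)

def free_bits_kl(kl_per_dim, free_bits):
    """Free bits: clamp each latent dimension's (batch-averaged) KL from below at `free_bits` nats.
    Dimensions already under the threshold then receive no gradient pushing their KL lower."""
    return np.sum(np.maximum(kl_per_dim, free_bits))

def vae_loss(recon_nll, kl_per_dim, step, warmup_steps=10_000, free_bits=0.25):
    # Negative ELBO with both mitigations applied; defaults are hypothetical, not tuned values.
    return recon_nll + kl_weight(step, warmup_steps) * free_bits_kl(kl_per_dim, free_bits)
```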

VQ-VAE (Vector Quantized VAE)

Van den Oord et al. (2017) replace the continuous latent space with a discrete codebook. The encoder outputs a continuous vector, which is quantized to the nearest codebook entry:

z_q = argmin_{e_k ∈ codebook} ||z_e - e_k||²

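Straight-through estimator: The argmin is not differentiable, so in the backward pass the gradient with respect to z_q is copied unchanged to z_e. The codebook is learned in one of two ways: with the codebook loss ||sg[z_e] - e||², or with an exponential moving average of the encoder outputs assigned to each entry. A separate commitment loss β||z_e - sg[e]||² keeps the encoder outputs close to their chosen entries. Here sg[·] denotes the stop-gradient operator.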
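The sketch below shows the quantization step and the forward values of the two auxiliary losses. It assumes flattened encoder outputs of shape (N, D) and a (K, D) codebook. NumPy has no gradients, so the stop-gradient routing and the straight-through copy are described in comments rather than executed. The default β = 0.25 is an assumption.

```python
import numpy as np

def quantize(z_e, codebook):
    """Nearest-neighbour lookup: z_q = argmin_{e_k} ||z_e - e_k||^2 for each row of z_e."""
    # Pairwise squared distances, shape (N, K), via ||a||^2 - 2 a.b + ||b||^2
    d = (np.sum(z_e**2, axis=1, keepdims=True)
         - 2.0 * z_e @ codebook.T
         + np.sum(codebook**2, axis=1)[None, :])
    idx = np.argmin(d, axis=1)
    return idx, codebook[idx]

def vq_losses(z_e, z_q, beta=0.25):
    """Forward values of (codebook loss, commitment loss).

    codebook loss   ||sg[z_e] - e||^2 : gradient reaches only the codebook
    commitment loss ||z_e - sg[e]||^2 : gradient reaches only the encoder (scaled by beta)
    Both share the same squared distance; only the stop-gradient placement differs.
    """
    sq = np.mean(np.sum((z_e - z_q) ** 2, axis=1))
    return sq, beta * sq

# Straight-through estimator, as typically written in an autodiff framework:
#   z_st = z_e + stop_gradient(z_q - z_e)
# Its forward value equals z_q, and its gradient w.r.t. z_e is the identity, so the
# decoder's gradient at z_q is passed unchanged to the encoder output.
```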

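VQ-VAE does not suffer from posterior collapse. With a uniform prior over K codes and a deterministic (one-hot) posterior, the KL term is the constant log K, so nothing pushes the posterior toward the prior. It also produces high-quality discrete representations. VQ-VAE-2 adds hierarchical quantization for high-resolution image generation.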

Generative Adversarial Networks (GAN)

Minimax Formulation

A GAN (Goodfellow et al., 2014) trains a generator G and a discriminator D in a two-player minimax game:

min_G max_D E_{x~p_data}[log D(x)] + E_{z~p_z}[log(1 - D(G(z)))]

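The discriminator learns to distinguish real from generated samples, and the generator learns to produce samples that fool it. For a fixed G, the optimal discriminator is D*(x) = p_data(x) / (p_data(x) + p_g(x)). Substituting D* into the objective gives 2·JS(p_data || p_g) - log 4, so the generator is implicitly minimizing the Jensen-Shannon divergence. At the Nash equilibrium, p_g = p_data and D outputs 1/2 everywhere.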
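For a fixed G, the optimal discriminator is D*(x) = p_data(x) / (p_data(x) + p_g(x)). This argument can be checked numerically on a small discrete sample space. The sketch below assumes strictly positive probability vectors, so every logarithm is finite. It lets you verify that the value at D* equals 2·JS(p_data || p_g) - log 4.

```python
import numpy as np

def gan_value(d, p_data, p_g):
    """V(D, G) = E_{x~p_data}[log D(x)] + E_{x~p_g}[log(1 - D(x))] on a finite sample space."""
    return np.sum(p_data * np.log(d)) + np.sum(p_g * np.log(1.0 - d))

def optimal_discriminator(p_data, p_g):
    # Maximizing V pointwise for fixed G gives D*(x) = p_data(x) / (p_data(x) + p_g(x))
    return p_data / (p_data + p_g)

def js_divergence(p, q):
    """Jensen-Shannon divergence (natural log); assumes all entries of p and q are > 0."""
    m = 0.5 * (p + q)
    kl = lambda a, b: np.sum(a * np.log(a / b))
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)
```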

Training Instabilities

GAN training is notoriously unstable. Common failure modes are mode collapse (the generator produces limited variety), vanishing gradients (when D is too strong, G receives no useful gradient signal), and oscillation (G and D cycle without converging).

Wasserstein GAN (WGAN)

Arjovsky et al. (2017) replace the JS divergence with the Wasserstein-1 (Earth Mover's) distance:

W(p_data, p_g) = sup_{||f||_L ≤ 1} E_{x~p_data}[f(x)] - E_{x~p_g}[f(x)]

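The critic f (replacing D) must be 1-Lipschitz. The original WGAN enforces this by clipping the critic's weights. WGAN-GP (Gulrajani et al., 2017) instead adds a gradient penalty λ E_{x̂}[(||∇_x̂ f(x̂)||₂ - 1)²], where x̂ is sampled uniformly along straight lines between pairs of real and generated samples. WGAN provides meaningful loss curves (the critic's estimate of W correlates with sample quality) and more stable training.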
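The sketch below shows the gradient-penalty term. It assumes a toy quadratic critic f(x) = ½xᵀAx + bᵀx with A symmetric, so that ∇f is available in closed form. A real critic is a neural network, and ∇f would come from autodiff. λ = 10 is used as the default here.

```python
import numpy as np

def critic(x, A, b):
    """Toy critic f(x) = 0.5 x^T A x + b^T x (A symmetric), standing in for a neural network."""
    return 0.5 * np.einsum("ni,ij,nj->n", x, A, x) + x @ b

def critic_grad(x, A, b):
    """Closed-form input gradient A x + b of the toy critic (autodiff in a real implementation)."""
    return x @ A.T + b

def gradient_penalty(x_real, x_fake, A, b, lam=10.0, rng=None):
    """lam * E[(||grad f(x_hat)||_2 - 1)^2], with x_hat uniform on lines between paired real/fake samples."""
    rng = np.random.default_rng() if rng is None else rng
    u = rng.uniform(size=(x_real.shape[0], 1))
    x_hat = u * x_real + (1.0 - u) * x_fake
    grad_norm = np.linalg.norm(critic_grad(x_hat, A, b), axis=1)
    return lam * np.mean((grad_norm - 1.0) ** 2)

def critic_loss(x_real, x_fake, A, b, lam=10.0, rng=None):
    """Critic objective to minimize: -(E[f(real)] - E[f(fake)]) + gradient penalty."""
    w_estimate = np.mean(critic(x_real, A, b)) - np.mean(critic(x_fake, A, b))
    return -w_estimate + gradient_penalty(x_real, x_fake, A, b, lam, rng)
```

With A = 0 the critic is linear, and the penalty vanishes exactly when ||b|| = 1, which is the 1-Lipschitz boundary.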

StyleGAN

Karras et al. (2019, 2020, 2021) introduced style-based generators:

- A mapping network transforms latent z to intermediate style w = f(z) in a learned style space W.

- The synthesis network generates images from a constant input, modulated at each layer by adaptive instance normalization (AdaIN) using style w.

- Style mixing: Different layers receive styles from different latent codes, controlling coarse (pose, shape) and fine (texture, color) attributes independently.
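AdaIN itself takes only a few lines. It normalizes each channel of each sample to zero mean and unit variance, then applies a per-channel scale and bias derived from the style. The sketch below assumes (N, C, H, W) feature maps and takes the scale and bias as given. In StyleGAN they come from a learned affine transform of w, which is omitted here. `mix_styles` is a hypothetical helper illustrating style mixing as a per-layer choice between two codes.

```python
import numpy as np

def adain(x, style_scale, style_bias, eps=1e-5):
    """AdaIN(x, y) = y_s * (x - mu(x)) / sigma(x) + y_b, per sample and per channel.
    x: (N, C, H, W); style_scale, style_bias: (N, C)."""
    mu = x.mean(axis=(2, 3), keepdims=True)
    sigma = np.sqrt(x.var(axis=(2, 3), keepdims=True) + eps)
    x_norm = (x - mu) / sigma
    return style_scale[:, :, None, None] * x_norm + style_bias[:, :, None, None]

def mix_styles(w1, w2, n_layers, crossover):
    """Style mixing: layers before `crossover` take w1 (coarse attributes), the rest take w2 (fine)."""
    return [w1 if i < crossover else w2 for i in range(n_layers)]
```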

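StyleGAN2 removes the characteristic blob-like (droplet) artifacts, which it traces to AdaIN's normalization, by replacing AdaIN with weight modulation and demodulation. StyleGAN3 addresses texture sticking, in which fine details stay fixed to pixel coordinates instead of moving with the depicted surfaces. It does so by redesigning the generator to be alias-free, making it equivariant to translation (and, in one configuration, rotation).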
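Weight demodulation moves normalization from the activations into the convolution weights. The sketch below covers a single sample and assumes a (C_out, C_in, k, k) kernel and per-input-channel style scales s. The ε value is an assumption.

```python
import numpy as np

def modulate_demodulate(weight, style, eps=1e-8):
    """Weight (de)modulation for one sample.
    weight: (C_out, C_in, k, k); style: per-input-channel scales s_i, shape (C_in,).
    Modulate:   w'_{j,i,k} = s_i * w_{j,i,k}
    Demodulate: w''_{j,i,k} = w'_{j,i,k} / sqrt(sum_{i,k} w'_{j,i,k}^2 + eps)
    Each output channel's filter ends with unit L2 norm, which keeps output activations at
    roughly unit scale under the assumption of i.i.d. unit-variance inputs."""
    w = weight * style[None, :, None, None]
    norm = np.sqrt(np.sum(w ** 2, axis=(1, 2, 3), keepdims=True) + eps)
    return w / norm
```

Because of the demodulation, multiplying all style scales by a constant leaves the effective weights unchanged.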

Normalizing Flows

Concept

A normalizing flow transforms a simple base distribution (e.g., Gaussian) through a sequence of invertible, differentiable transformations f₁, f₂, ..., f_K. Write h₀ = z, hᵢ = fᵢ(hᵢ₋₁), and h_K = x. The change-of-variables formula then gives the log-density of the output:

log p(x) = log p_z(z) - Σᵢ₌₁ᴷ log |det ∂hᵢ/∂hᵢ₋₁|

where z = f₁⁻¹ ∘ ... ∘ f_K⁻¹(x), i.e., x is inverted through the last layer first. Exact likelihood computation is possible, unlike VAEs, which only give a bound, and GANs, which provide no explicit likelihood at all.
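The formula can be checked on a flow whose density is known in closed form. The sketch below assumes elementwise affine layers fᵢ(h) = aᵢ ⊙ h + cᵢ, a hypothetical minimal layer type. Composing such layers keeps x Gaussian, so `flow_log_prob` can be compared against the exact density. The code inverts from the last layer to the first (h₀ = z, h_K = x) and evaluates each log-determinant at that layer's input hᵢ₋₁.

```python
import numpy as np

class ElementwiseAffine:
    """Hypothetical minimal flow layer f(h) = a * h + c with all a != 0."""
    def __init__(self, a, c):
        self.a, self.c = np.asarray(a, float), np.asarray(c, float)
    def forward(self, h):
        return self.a * h + self.c
    def inverse(self, y):
        return (y - self.c) / self.a
    def log_abs_det_jacobian(self, h):
        # Diagonal Jacobian, so log|det| = sum_i log|a_i| (independent of h here)
        return np.sum(np.log(np.abs(self.a))) * np.ones(h.shape[0])

def standard_normal_logpdf(z):
    return -0.5 * np.sum(z**2 + np.log(2 * np.pi), axis=-1)

def flow_log_prob(x, flows):
    """log p(x) = log p_z(z) - sum_i log|det dh_i/dh_{i-1}|, inverting f_K first."""
    h = x
    total_log_det = np.zeros(x.shape[0])
    for f in reversed(flows):
        h_prev = f.inverse(h)
        total_log_det += f.log_abs_det_jacobian(h_prev)   # Jacobian at the layer's input h_{i-1}
        h = h_prev
    return standard_normal_logpdf(h) - total_log_det

def flow_sample(n, flows, rng):
    h = rng.standard_normal((n, len(flows[0].a)))          # h_0 = z ~ N(0, I)
    for f in flows:
        h = f.forward(h)
    return h
```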

RealNVP (Real-valued Non-Volume Preserving)

Dinh et al. (2017) use affine coupling layers: split the input into two halves (x₁, x₂). Transform one conditioned on the other:

y₁ = x₁,   y₂ = x₂ ⊙ exp(s(x₁)) + t(x₁)

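where s and t are arbitrary neural networks. They need not be invertible, because inverting the layer only requires evaluating them on the unchanged half. The Jacobian is triangular with determinant exp(Σᵢ s(x₁)ᵢ), so the log-determinant is simply Σᵢ s(x₁)ᵢ and is cheap to compute. The inverse is equally cheap: x₁ = y₁, x₂ = (y₂ - t(y₁)) ⊙ exp(-s(y₁)).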
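Below is a NumPy sketch of one affine coupling layer. It assumes even dimensionality and uses tiny randomly initialized MLPs for s and t, built with a hypothetical `make_mlp` helper. The accompanying check recovers x from y and compares the analytic log-determinant Σᵢ s(x₁)ᵢ against a finite-difference Jacobian.

```python
import numpy as np

def make_mlp(d_in, d_out, hidden, rng):
    """Hypothetical tiny MLP standing in for s(.) or t(.); it need not be invertible."""
    W1 = rng.standard_normal((d_in, hidden)) * 0.5
    W2 = rng.standard_normal((hidden, d_out)) * 0.5
    return lambda h: np.tanh(h @ W1) @ W2

class AffineCoupling:
    def __init__(self, d, hidden=16, seed=0):
        rng = np.random.default_rng(seed)
        self.d1 = d // 2                                 # size of the unchanged half x1
        self.s = make_mlp(self.d1, d - self.d1, hidden, rng)
        self.t = make_mlp(self.d1, d - self.d1, hidden, rng)

    def forward(self, x):
        x1, x2 = x[:, :self.d1], x[:, self.d1:]
        s = self.s(x1)
        y2 = x2 * np.exp(s) + self.t(x1)
        log_det = np.sum(s, axis=1)                      # triangular Jacobian: sum_i s(x1)_i
        return np.concatenate([x1, y2], axis=1), log_det

    def inverse(self, y):
        y1, y2 = y[:, :self.d1], y[:, self.d1:]
        x2 = (y2 - self.t(y1)) * np.exp(-self.s(y1))     # only evaluates s, t on y1 = x1
        return np.concatenate([y1, x2], axis=1)
```

In a full model, several such layers are stacked with the roles of the two halves swapped (or permuted) between layers, so that every dimension gets transformed.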

Glow

Kingma and Dhariwal (2018) extend RealNVP with:

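- 1×1 invertible convolutions: Replace the fixed channel permutations between coupling layers with a learned invertible 1×1 convolution, which generalizes a permutation. Parameterizing its weight matrix through an LU decomposition reduces the cost of its log-determinant from O(c³) to O(c) for c channels.

- Actnorm: A per-channel affine layer (scale and bias) used in place of batch normalization. Its data-dependent initialization makes the activations on an initial minibatch have zero mean and unit variance per channel.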

- Multi-scale architecture: Progressively reduce spatial dimensions.
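The two Glow-specific layers can be sketched in NumPy. The LU form W = P·L·(U + diag(s)) uses a fixed permutation P, a unit lower-triangular L, and a strictly upper-triangular U, so log|det W| = Σ log|s|. A 1×1 convolution applies W at every pixel, so its log-determinant is H·W·Σ log|s|. The actnorm here is written as y = scale·x + bias, one equivalent form of the layer. All function names are hypothetical.

```python
import numpy as np

def lu_weight(P, L_strict, U_strict, log_s, sign_s):
    """C x C weight W = P @ L @ (U + diag(s)) with s = sign_s * exp(log_s)."""
    C = P.shape[0]
    L = np.tril(L_strict, k=-1) + np.eye(C)               # unit lower-triangular
    U = np.triu(U_strict, k=1) + np.diag(sign_s * np.exp(log_s))
    return P @ L @ U

def invconv1x1_forward(x, W, log_s):
    """x: (N, C, H, Wd). The same channel-mixing matrix is applied at every pixel, so
    log|det J| = H * Wd * log|det W| = H * Wd * sum(log|s|), an O(C) computation."""
    N, C, H, Wd = x.shape
    y = np.einsum("ij,njhw->nihw", W, x)
    return y, H * Wd * np.sum(log_s) * np.ones(N)

def actnorm_init(x):
    """Data-dependent init: per-channel bias and scale that give the first minibatch
    zero mean and unit variance per channel after actnorm."""
    mean = x.mean(axis=(0, 2, 3))
    std = x.std(axis=(0, 2, 3)) + 1e-6
    return -mean / std, 1.0 / std                          # scale * x + bias == (x - mean) / std

def actnorm_forward(x, bias, scale):
    N, C, H, Wd = x.shape
    y = scale[None, :, None, None] * x + bias[None, :, None, None]
    return y, H * Wd * np.sum(np.log(np.abs(scale))) * np.ones(N)
```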

Glow achieves high-quality image generation with exact likelihoods and enables meaningful latent space interpolation and attribute manipulation.

Diffusion Models

DDPM (Denoising Diffusion Probabilistic Models)

Ho et al. (2020) formalize diffusion as a forward process that gradually adds Gaussian noise to data over T steps, and a learned reverse process that denoises.

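Forward process: q(xₜ|xₜ₋₁) = N(xₜ; √(1-βₜ)xₜ₋₁, βₜI). With a suitable noise schedule, x_T ≈ N(0,I) after T steps. The process admits a closed-form marginal, so xₜ can be sampled from x₀ in a single step: q(xₜ|x₀) = N(xₜ; √ᾱₜ x₀, (1-ᾱₜ)I), where ᾱₜ = Πᵢ₌₁ᵗ (1-βᵢ).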
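The closed-form marginal can be checked against the step-by-step chain. The sketch assumes the linear β schedule from 10⁻⁴ to 0.02 over T = 1000 steps used by Ho et al. (2020). It tracks the mean and variance of xₜ given a fixed x₀, which should match √ᾱₜ x₀ and 1 - ᾱₜ.

```python
import numpy as np

def linear_beta_schedule(T=1000, beta_1=1e-4, beta_T=0.02):
    return np.linspace(beta_1, beta_T, T)

betas = linear_beta_schedule()
alpha_bars = np.cumprod(1.0 - betas)                      # alpha_bar_t = prod_{i<=t} (1 - beta_i)

def q_sample(x0, t, rng):
    """Draw x_t ~ q(x_t | x_0) in one shot (t is 1-indexed, as in the text)."""
    eps = rng.standard_normal(x0.shape)
    ab = alpha_bars[t - 1]
    return np.sqrt(ab) * x0 + np.sqrt(1.0 - ab) * eps, eps

def chain_moments(x0, t):
    """Mean and variance of x_t after t single steps from a fixed x0:
    mean_t = sqrt(1 - beta_t) * mean_{t-1},  var_t = (1 - beta_t) * var_{t-1} + beta_t."""
    mean, var = x0, 0.0
    for beta in betas[:t]:
        mean = np.sqrt(1.0 - beta) * mean
        var = (1.0 - beta) * var + beta
    return mean, var
```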

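Reverse process: pθ(xₜ₋₁|xₜ) = N(xₜ₋₁; μθ(xₜ,t), σₜ²I). Rather than predicting μθ directly, the network εθ(xₜ,t) predicts the noise ε that was mixed with x₀ to produce xₜ. The mean is then recovered as μθ(xₜ,t) = (xₜ - βₜ/√(1-ᾱₜ) · εθ(xₜ,t)) / √(1-βₜ). Simplified loss: L = E_{t,x₀,ε}[||ε - εθ(√ᾱₜ x₀ + √(1-ᾱₜ)ε, t)||²]. This corresponds to denoising score matching over multiple noise levels with a particular weighting.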
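The following sketch evaluates the training loss once and takes one ancestral sampling step. It assumes a hypothetical noise-prediction network `eps_model(x_t, t)` with 1-indexed t. It also assumes the choice σₜ² = βₜ, one of the two variances Ho et al. consider. The mean uses μθ(xₜ,t) = (xₜ - βₜ/√(1-ᾱₜ)·εθ(xₜ,t)) / √(1-βₜ).

```python
import numpy as np

def ddpm_loss(eps_model, x0, betas, rng):
    """Simplified objective ||eps - eps_theta(sqrt(ab_t) x0 + sqrt(1 - ab_t) eps, t)||^2,
    averaged over a minibatch x0 of shape (N, D), with t ~ Uniform{1, ..., T}."""
    alpha_bars = np.cumprod(1.0 - betas)
    t = rng.integers(1, len(betas) + 1, size=x0.shape[0])
    eps = rng.standard_normal(x0.shape)
    ab = alpha_bars[t - 1][:, None]
    x_t = np.sqrt(ab) * x0 + np.sqrt(1.0 - ab) * eps
    return np.mean(np.sum((eps - eps_model(x_t, t)) ** 2, axis=1))

def reverse_mean(eps_model, x_t, t, betas):
    """mu_theta(x_t, t) = (x_t - beta_t / sqrt(1 - ab_t) * eps_theta(x_t, t)) / sqrt(1 - beta_t)."""
    ab = np.cumprod(1.0 - betas)[t - 1]
    beta = betas[t - 1]
    return (x_t - beta / np.sqrt(1.0 - ab) * eps_model(x_t, t)) / np.sqrt(1.0 - beta)

def p_sample_step(eps_model, x_t, t, betas, rng):
    """One ancestral step x_t -> x_{t-1} with sigma_t^2 = beta_t; no noise is added at t = 1."""
    mean = reverse_mean(eps_model, x_t, t, betas)
    if t == 1:
        return mean
    return mean + np.sqrt(betas[t - 1]) * rng.standard_normal(x_t.shape)
```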

Architecture: Typically a U-Net with residual blocks and self-attention at lower resolutions. The timestep t is injected through sinusoidal embeddings, and conditioning signals (text, class labels) enter through cross-attention.
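The sinusoidal time embedding reuses the Transformer's positional encoding, with the timestep playing the role of the position. The sketch below assumes an even embedding size and a maximum period of 10,000. Implementations differ in whether sines or cosines come first and in the exact frequency spacing.

```python
import numpy as np

def timestep_embedding(t, dim, max_period=10_000.0):
    """Sinusoidal embedding of timesteps t (shape (N,)) into (N, dim), dim assumed even.
    Frequencies f_k = max_period^(-k / (dim/2)) are spaced geometrically from 1 downward;
    the first half of the channels holds sin(t * f_k), the second half cos(t * f_k)."""
    half = dim // 2
    freqs = np.exp(-np.log(max_period) * np.arange(half) / half)
    args = np.asarray(t, dtype=float)[:, None] * freqs[None, :]
    return np.concatenate([np.sin(args), np.cos(args)], axis=1)
```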

Score Matching and SDE Perspective

Song et al. (2021) unify diffusion models as stochastic differential equations (SDEs). The forward process is an SDE: dx = f(x,t)dt + g(t)dw. The reverse process is another SDE, run backward in time: dx = [f(x,t) - g(t)² ∇_x log pₜ(x)]dt + g(t)dw̄, where w̄ is a reverse-time Wiener process.

The score function ∇_x log pₜ(x) is approximated by a neural network sθ(x,t), trained via denoising score matching to match the score of the noisy distribution. This framework generalizes both DDPM and score matching with Langevin dynamics (SMLD). These correspond to discretizations of the variance-preserving and variance-exploding SDEs, respectively. The framework also yields the probability flow ODE, dx = [f(x,t) - ½g(t)² ∇_x log pₜ(x)]dt. This is a deterministic process with the same marginals pₜ as the SDE, and it enables exact likelihood computation.
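With a toy data distribution whose score is known exactly, both the reverse SDE and the probability flow ODE can be simulated directly. The sketch below makes three assumptions:

- The data are 1-D with x₀ ~ N(0, s₀²).
- The forward process is a variance-exploding SDE dx = g dw with constant g, so pₜ = N(0, s₀² + g²t).
- The analytic score -x/(s₀² + g²t) replaces a trained sθ.

Both integrators use simple Euler(-Maruyama) steps from t = 1 back to t = 0.

```python
import numpy as np

S0, G = 0.5, 2.0   # assumed data std s0 and constant diffusion coefficient g

def var_t(t):
    """Variance of p_t = N(0, s0^2 + g^2 t) under the forward SDE dx = g dw."""
    return S0**2 + G**2 * t

def score(x, t):
    """Exact score grad_x log p_t(x); a trained s_theta(x, t) would replace this."""
    return -x / var_t(t)

def reverse_sde_sample(n, n_steps=1000, seed=0):
    """Euler-Maruyama on dx = [f - g^2 * score] dt + g dw_bar with f = 0, from t = 1 down to 0."""
    rng = np.random.default_rng(seed)
    h = 1.0 / n_steps
    x = rng.standard_normal(n) * np.sqrt(var_t(1.0))       # start from p_1
    for k in range(n_steps, 0, -1):
        t = k * h
        # Stepping backward by h flips the drift sign: x += g^2 * score * h, plus fresh noise
        x = x + G**2 * score(x, t) * h + G * np.sqrt(h) * rng.standard_normal(n)
    return x

def probability_flow_ode(x1, n_steps=1000):
    """Euler on dx/dt = f - 0.5 * g^2 * score with f = 0, integrated from t = 1 down to 0."""
    h = 1.0 / n_steps
    x = np.asarray(x1, dtype=float)
    for k in range(n_steps, 0, -1):
        t = k * h
        x = x - h * (-0.5 * G**2 * score(x, t))
    return x
```

Samples from the reverse SDE should end with a standard deviation close to s₀. The ODE maps each x(1) deterministically to roughly x(1)·s₀/√(s₀² + g²). That ratio follows from the exact solution x(t) ∝ √(s₀² + g²t) of this toy ODE.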

DDIM (Denoising Diffusion Implicit Models)

Song et al. (2021) show that the DDPM training objective is compatible with non-Markovian reverse processes. DDIM defines a deterministic reverse process (σ=0 case) that uses the same trained model but generates samples in fewer steps (10-50 instead of 1000). Trades stochasticity for speed.
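The deterministic (σ = 0) update first estimates x₀ from the predicted noise. It then re-noises that estimate to the target noise level, reusing the same noise, and the target may be many steps away. The sketch below assumes a hypothetical trained noise predictor `eps_model(x_t, t)` with 1-indexed t and a decreasing subsequence of timesteps.

```python
import numpy as np

def ddim_step(x_t, eps_pred, ab_t, ab_prev):
    """Deterministic DDIM update from noise level ab_t to ab_prev (which may skip steps)."""
    x0_pred = (x_t - np.sqrt(1.0 - ab_t) * eps_pred) / np.sqrt(ab_t)
    return np.sqrt(ab_prev) * x0_pred + np.sqrt(1.0 - ab_prev) * eps_pred

def ddim_sample(eps_model, x_T, alpha_bars, timesteps):
    """timesteps: decreasing, 1-indexed subsequence of 1..T (e.g. 50 of 1000).
    The last step targets ab = 1, i.e. it returns the predicted clean sample."""
    x = x_T
    for i, t in enumerate(timesteps):
        ab_prev = alpha_bars[timesteps[i + 1] - 1] if i + 1 < len(timesteps) else 1.0
        x = ddim_step(x, eps_model(x, t), alpha_bars[t - 1], ab_prev)
    return x
```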

Classifier-Free Guidance

Ho and Salimans (2022) combine conditional and unconditional noise estimates: ε̃ = (1+w)εθ(xₜ,t,c) - w·εθ(xₜ,t,∅), where c is the conditioning signal, ∅ is a null condition, and w is the guidance scale. A single network provides both estimates, because during training c is replaced by ∅ for a random fraction of examples. Higher w increases faithfulness to the condition at the cost of diversity. Unlike classifier guidance, no separate classifier is needed. The technique is standard in high-quality text-to-image diffusion systems.
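The sketch below covers both halves of classifier-free guidance. At sampling time, the two estimates are combined. At training time, the condition is randomly dropped so that one network learns both. It assumes conditions are (N, D) vectors and that ∅ is a single null embedding. The function names and drop probability are hypothetical.

```python
import numpy as np

def cfg_combine(eps_cond, eps_uncond, w):
    """eps_tilde = (1 + w) * eps_cond - w * eps_uncond = eps_cond + w * (eps_cond - eps_uncond).
    w = 0 recovers the purely conditional prediction. Some codebases instead use a scale
    s = 1 + w in the form eps_uncond + s * (eps_cond - eps_uncond), which is equivalent."""
    return (1.0 + w) * eps_cond - w * eps_uncond

def drop_condition(cond, null_cond, p_uncond, rng):
    """Training-time condition dropout: with probability p_uncond, replace a row of `cond`
    by the null embedding, so the same network learns eps(x_t, t, c) and eps(x_t, t, null)."""
    mask = rng.uniform(size=cond.shape[0]) < p_uncond
    return np.where(mask[:, None], null_cond[None, :], cond)
```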

Latent Diffusion Models (LDM) / Stable Diffusion

Rombach et al. (2022) run the diffusion process in the latent space of a pretrained autoencoder rather than in pixel space. The autoencoder, regularized with either vector quantization (VQ) or a KL penalty, compresses images to a lower-dimensional latent space (e.g., 64×64×4 vs. 512×512×3). The diffusion U-Net operates on latents, with cross-attention layers for conditioning. Stable Diffusion uses a frozen CLIP text encoder for text prompts.

Advantages: Dramatically reduces computational cost, since a 64×64×4 latent has 48× fewer values than a 512×512×3 image. Enables high-resolution generation (512×512, 1024×1024) on consumer GPUs. LDM is the basis of Stable Diffusion. By contrast, DALL-E 2 and Imagen run diffusion in pixel space with cascaded super-resolution stages.
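The 48× figure can be recovered from the example shapes. They imply an autoencoder that downsamples each spatial side by a factor of 8 and outputs 4 latent channels, an inference from the shapes rather than a stated setting. The result is 512·512·3 = 786,432 pixel values against 64·64·4 = 16,384 latent values. A tiny helper (hypothetical) makes the arithmetic explicit:

```python
def latent_compression_ratio(height, width, channels, downsample, latent_channels):
    """Pixel values divided by latent values for an autoencoder that shrinks each
    spatial side by `downsample` and outputs `latent_channels` channels."""
    pixel_values = height * width * channels
    latent_values = (height // downsample) * (width // downsample) * latent_channels
    return pixel_values / latent_values

ratio = latent_compression_ratio(512, 512, 3, downsample=8, latent_channels=4)   # 786432 / 16384
```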

Consistency Models

Song et al. (2023) train models to map any point xₜ on a probability flow ODE trajectory directly to the trajectory's origin x₀ in a single step, i.e., to learn the consistency function of the ODE. These models can be trained by distillation from a pretrained diffusion model or from scratch. They enable one-step or few-step generation without iterative denoising, with sample quality that can approach multi-step diffusion.
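One key design detail is that the consistency function must be the identity at the smallest time ε. A common way to guarantee this is to build it into the parameterization, f(x,t) = c_skip(t)·x + c_out(t)·F(x,t), with c_skip(ε) = 1 and c_out(ε) = 0. The specific coefficient forms and constants below (σ_data = 0.5, ε = 0.002) follow my understanding of the paper's choices and should be treated as assumptions. Any coefficients meeting the boundary conditions would enforce the same property. F is a hypothetical free-form network, and the squared-error distance is one assumed choice.

```python
import numpy as np

SIGMA_DATA = 0.5   # assumed data standard deviation
EPS = 0.002        # assumed smallest time, where f must be the identity

def c_skip(t):
    return SIGMA_DATA**2 / ((t - EPS) ** 2 + SIGMA_DATA**2)

def c_out(t):
    return SIGMA_DATA * (t - EPS) / np.sqrt(SIGMA_DATA**2 + t**2)

def consistency_fn(F, x_t, t):
    """f(x_t, t) = c_skip(t) x_t + c_out(t) F(x_t, t); f(x, EPS) = x holds by construction."""
    return c_skip(t) * x_t + c_out(t) * F(x_t, t)

def consistency_loss(F, F_target, x_next, t_next, x_cur, t_cur):
    """Squared-error consistency loss between two points on the same PF-ODE trajectory
    (t_cur < t_next). F_target is a slowly updated copy of F whose output is treated as constant."""
    target = consistency_fn(F_target, x_cur, t_cur)
    return np.mean((consistency_fn(F, x_next, t_next) - target) ** 2)
```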

Flow Matching

Lipman et al. (2023) train continuous normalizing flows by regressing a velocity field vθ(x,t) onto the velocities of simple conditional probability paths that transport noise to data. One example is optimal transport paths, which linearly interpolate between a noise sample and a data sample. The training objective is simpler than score matching and often converges faster. Stable Diffusion 3 is trained with the closely related rectified flow objective, and such flow-based objectives are increasingly common in modern diffusion models.
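The sketch below implements the conditional flow matching objective with straight-line paths. It assumes t = 0 is noise and t = 1 is data, that σ_min = 0, and a hypothetical velocity network `v_model(x, t)`. Sampling integrates dx/dt = vθ(x,t) with Euler steps.

```python
import numpy as np

def fm_training_pair(x1, rng):
    """Straight-line conditional path: x0 ~ N(0, I), x_t = (1 - t) x0 + t x1, target u_t = x1 - x0."""
    x0 = rng.standard_normal(x1.shape)
    t = rng.uniform(size=(x1.shape[0], 1))
    x_t = (1.0 - t) * x0 + t * x1
    return x_t, t, x1 - x0

def fm_loss(v_model, x1, rng):
    """Regress the model velocity onto the conditional target velocity."""
    x_t, t, u_t = fm_training_pair(x1, rng)
    return np.mean(np.sum((v_model(x_t, t) - u_t) ** 2, axis=1))

def euler_sample(v_model, x0, n_steps=50):
    """Integrate dx/dt = v(x, t) from t = 0 (noise) to t = 1 (data)."""
    h = 1.0 / n_steps
    x = x0
    for k in range(n_steps):
        t = np.full((x.shape[0], 1), k * h)
        x = x + h * v_model(x, t)
    return x
```

As a sanity check, consider data that is a single point c. The exact marginal velocity is (c - x)/(1 - t), which reproduces every straight-line target, so its loss is zero and Euler sampling lands exactly on c.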

Comparison of Generative Paradigms

| Property | VAE | GAN | Flow | Diffusion |
|---|---|---|---|---|
| Likelihood | Lower bound | None | Exact | Exact (via ODE) |
| Sample quality | Good | Excellent | Good | Excellent |
| Training stability | Stable | Unstable | Stable | Stable |
| Sampling speed | Fast (one pass) | Fast (one pass) | Fast (one pass) | Slow (many steps) |
| Mode coverage | Good | Mode collapse risk | Good | Excellent |
| Latent space | Structured | Entangled | Structured | Implicit |

Applications

- Image generation/editing: Inpainting, outpainting, super-resolution, style transfer, image-to-image translation (pix2pix, CycleGAN).

- Text-to-image: DALL-E, Stable Diffusion, Imagen, Midjourney.

- Video generation: Video diffusion models (Sora, Runway Gen-2), autoregressive video models.

- 3D generation: Score distillation sampling (DreamFusion), 3D-aware GANs (EG3D).

- Molecular design: Diffusion models for protein structure generation, drug discovery.

- Audio synthesis: WaveNet, DiffWave, AudioLDM.