Vectors and Spaces

Linear algebra is the mathematics of vectors and linear transformations. It is arguably the most useful branch of mathematics for computer science — underpinning machine learning, computer graphics, signal processing, and scientific computing.

Vectors

A vector is an ordered list of numbers. An n-dimensional vector has n components:

v = (v₁, v₂, ..., vₙ) ∈ ℝⁿ

Vectors can represent:

- Points in space (position)

- Directions and magnitudes (displacement, velocity, force)

- Data (feature vectors in ML, pixel values, audio samples)

- States (probability distributions, quantum states)

Column vs Row Vectors

By convention, vectors are column vectors:

v = [v₁] vᵀ = [v₁ v₂ v₃] (row vector, the transpose)

[v₂]

[v₃]

Vector Operations

Addition: Component-wise.

u + v = (u₁ + v₁, u₂ + v₂, ..., uₙ + vₙ)

Scalar multiplication: Scale each component.

cv = (cv₁, cv₂, ..., cvₙ)

Linear combination: c₁v₁ + c₂v₂ + ... + cₖvₖ.

Dot product (inner product):

u · v = u₁v₁ + u₂v₂ + ... + uₙvₙ = uᵀv

Vector Spaces

A vector space V over a field F (typically ℝ or ℂ) is a set with two operations (addition and scalar multiplication) satisfying:

Axioms

Addition axioms:

- Closure: u + v ∈ V

- Commutativity: u + v = v + u

- Associativity: (u + v) + w = u + (v + w)

- Zero vector: ∃ 0 ∈ V such that v + 0 = v

- Additive inverse: ∀v, ∃(-v) such that v + (-v) = 0

Scalar multiplication axioms: 6. Closure: cv ∈ V 7. Distributive (scalar): c(u + v) = cu + cv 8. Distributive (vector): (c + d)v = cv + dv 9. Associative: c(dv) = (cd)v 10. Identity: 1v = v

Examples of Vector Spaces

| Space | Elements | Scalars |

|---|---|---|

| ℝⁿ | n-tuples of reals | ℝ |

| ℂⁿ | n-tuples of complex numbers | ℂ |

| Mₘₓₙ(ℝ) | m × n real matrices | ℝ |

| Pₙ(ℝ) | Polynomials of degree ≤ n | ℝ |

| C([a,b]) | Continuous functions on [a,b] | ℝ |

| {0} | Zero vector only | Any field |

Non-Examples

- ℤⁿ over ℝ: Not closed under scalar multiplication (½ · (1,0) = (0.5, 0) ∉ ℤ²).

- ℝ² with component-wise multiplication as "addition": Fails additive inverse.

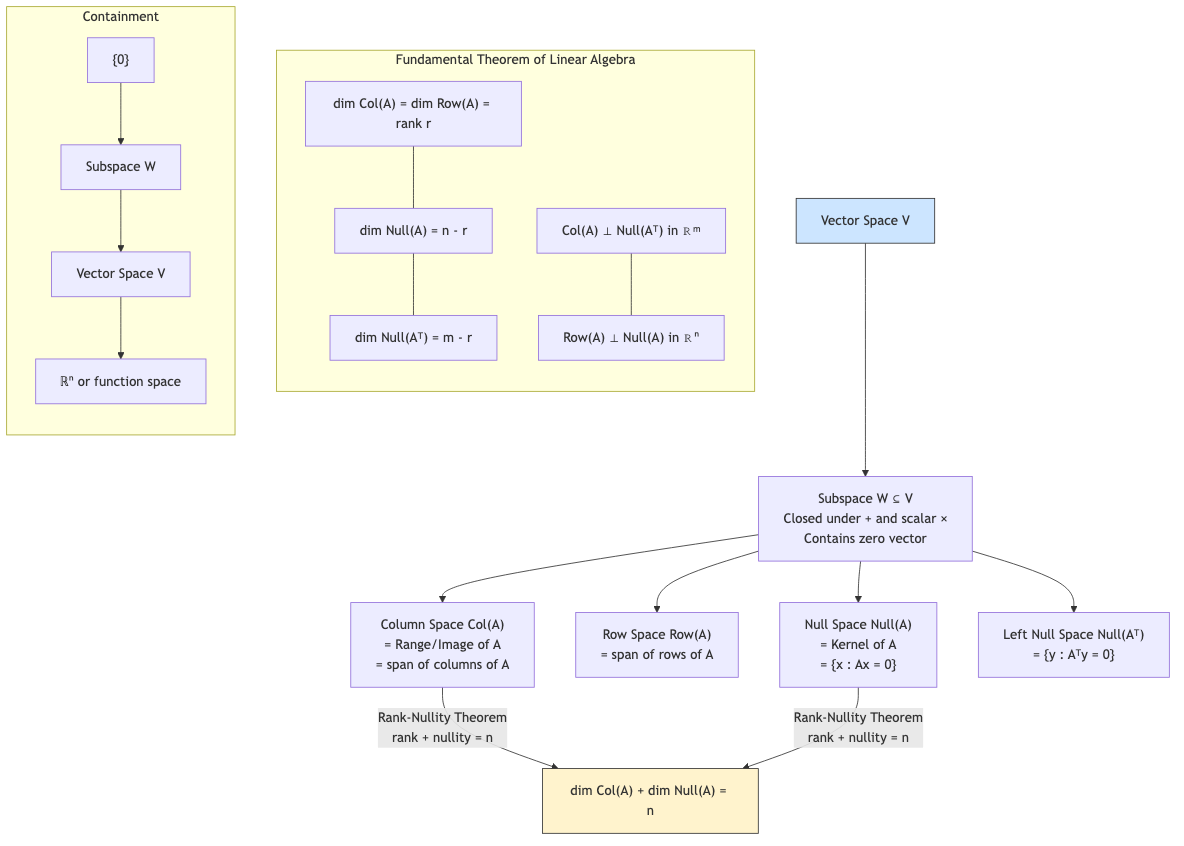

Subspaces

A subspace W of vector space V is a subset that is itself a vector space under the same operations.

Subspace Test

W ⊆ V is a subspace iff:

- Non-empty: 0 ∈ W (or equivalently, W ≠ ∅)

- Closed under addition: u, v ∈ W ⟹ u + v ∈ W

- Closed under scalar multiplication: v ∈ W, c ∈ F ⟹ cv ∈ W

Or equivalently (combined): W ≠ ∅ and au + bv ∈ W for all u, v ∈ W, a, b ∈ F.

Examples of subspaces of ℝ³:

- {0} (the zero subspace)

- Any line through the origin

- Any plane through the origin

- ℝ³ itself

Non-subspaces: A line not through the origin (fails 0 ∈ W). The first quadrant of ℝ² (not closed under scalar multiplication by negatives).

Span

The span of vectors v₁, v₂, ..., vₖ is the set of all linear combinations:

span{v₁, v₂, ..., vₖ} = {c₁v₁ + c₂v₂ + ... + cₖvₖ | cᵢ ∈ F}

The span is always a subspace.

A set of vectors spans V if span{v₁, ..., vₖ} = V.

Example: span{(1,0), (0,1)} = ℝ². span{(1,0)} = x-axis. span{(1,2), (2,4)} = a line (the vectors are parallel).

Linear Independence

Vectors v₁, v₂, ..., vₖ are linearly independent if:

c₁v₁ + c₂v₂ + ... + cₖvₖ = 0 ⟹ c₁ = c₂ = ... = cₖ = 0

The only linear combination giving the zero vector is the trivial one.

Linearly dependent: Not linearly independent — at least one vector can be written as a linear combination of the others.

Testing Linear Independence

Form a matrix with the vectors as columns and row-reduce. The vectors are independent iff the matrix has a pivot in every column.

Example: Are (1,2,3), (4,5,6), (7,8,9) independent?

[1 4 7] [1 4 7] [1 4 7]

[2 5 8] → [0 -3 -6] → [0 -3 -6]

[3 6 9] [0 -6 -12] [0 0 0]

Only 2 pivots, so they are linearly dependent. Indeed: (7,8,9) = 2(4,5,6) - (1,2,3).

Key Facts

- In ℝⁿ, at most n vectors can be linearly independent.

- Any set containing the zero vector is linearly dependent.

- A single non-zero vector is linearly independent.

- Two vectors are dependent iff one is a scalar multiple of the other.

Basis

A basis for vector space V is a set of vectors that is both:

- Linearly independent

- Spanning (span = V)

A basis is a minimal spanning set and a maximal independent set.

Standard Basis

For ℝⁿ, the standard basis is:

e₁ = (1, 0, ..., 0)

e₂ = (0, 1, ..., 0)

⋮

eₙ = (0, 0, ..., 1)

Every vector v = (v₁, v₂, ..., vₙ) = v₁e₁ + v₂e₂ + ... + vₙeₙ.

Existence and Uniqueness

- Every vector space has a basis (requires Axiom of Choice for infinite-dimensional spaces).

- Bases are not unique, but every basis has the same number of elements.

Dimension

The dimension of V, written dim(V), is the number of vectors in any basis.

dim(ℝⁿ) = n

dim(Mₘₓₙ) = mn

dim(Pₙ) = n + 1 (basis: {1, x, x², ..., xⁿ})

dim({0}) = 0

Dimension Theorem

If W is a subspace of V:

- dim(W) ≤ dim(V)

- dim(W) = dim(V) iff W = V

If V = W₁ + W₂ (sum of subspaces):

dim(W₁ + W₂) = dim(W₁) + dim(W₂) - dim(W₁ ∩ W₂)

Coordinate Systems

Given a basis B = {v₁, v₂, ..., vₙ} for V, every vector v ∈ V has a unique representation:

v = c₁v₁ + c₂v₂ + ... + cₙvₙ

The coordinate vector of v with respect to B is:

[v]_B = (c₁, c₂, ..., cₙ)

Change of Basis

If B and B' are two bases for V, the change of basis matrix P from B to B' satisfies:

[v]_{B'} = P [v]_B

P is the matrix whose columns are the B-coordinates of the B' basis vectors. Alternatively, P = [I]_{B'}^B.

Properties:

- P is always invertible.

- P⁻¹ is the change of basis from B' to B.

- If A represents a linear transformation in basis B, then P⁻¹AP represents it in basis B'.

Example: B = {(1,0), (0,1)}, B' = {(1,1), (1,-1)}.

To find P: Express B' vectors in B-coordinates (they already are, since B is standard).

P = [1 1] P⁻¹ = ½[1 1]

[1 -1] [1 -1]

Vector v = (3, 1) in standard basis: [v]_{B'} = P⁻¹(3,1) = ½(4, 2) = (2, 1). Verify: 2(1,1) + 1(1,-1) = (3, 1). ✓

Direct Sum

V is the direct sum of subspaces W₁ and W₂ (written V = W₁ ⊕ W₂) if:

- V = W₁ + W₂ (every v ∈ V can be written as w₁ + w₂)

- W₁ ∩ W₂ = {0}

Equivalently: every v ∈ V has a unique decomposition v = w₁ + w₂.

dim(W₁ ⊕ W₂) = dim(W₁) + dim(W₂).

Real-World Applications

- Machine learning: Feature vectors live in ℝⁿ. PCA finds a low-dimensional subspace capturing most variance. Word embeddings map words to vectors in ℝ³⁰⁰.

- Computer graphics: Points and directions in 3D space. Homogeneous coordinates use ℝ⁴.

- Signal processing: Signals are vectors in function spaces. Fourier basis decomposes signals into frequencies.

- Quantum computing: Quantum states are vectors in ℂ²ⁿ (Hilbert space). Measurements project onto subspaces.

- Data compression: Represent data in a lower-dimensional subspace (SVD, PCA).

- Error-correcting codes: Codewords form a subspace. Syndrome decoding projects onto cosets.

- Robotics: Configuration spaces, joint angle vectors, workspace analysis.