Text Preprocessing

Overview

Text preprocessing transforms raw text into a structured representation suitable for downstream NLP tasks. The choices made at this stage profoundly affect model performance, vocabulary size, and the ability to handle out-of-vocabulary words.

Tokenization

Tokenization splits text into discrete units (tokens) that serve as input to models.

Word Tokenization

The simplest approach splits on whitespace and punctuation, but several cases resist simple rules:

| Challenge | Example |
|---|---|
| Contractions | "don't" -> "do" + "n't"? |
| Hyphenation | "state-of-the-art" -> one or four tokens? |
| Multiword expressions | "New York" is one concept |
| Agglutinative languages | Turkish, Finnish words encode entire phrases |

Rule-based tokenizers (Penn Treebank, Moses) use regex cascades. spaCy's tokenizer applies language-specific rules and exception lists; its statistical models then operate on the resulting tokens.
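
As a rough illustration of the regex-based approach, the sketch below tokenizes with a single pattern. The pattern and the function name `word_tokenize` are assumptions made for this example; it is not the Penn Treebank, Moses, or spaCy rule set, and it simply chooses to keep contractions and hyphenated compounds as single tokens.

```python
import re

# Alternatives are tried left to right:
#   1. a word, optionally extended by apostrophe- or hyphen-joined parts
#   2. any single character that is neither a word character nor whitespace
TOKEN_RE = re.compile(r"\w+(?:['-]\w+)*|[^\w\s]")


def word_tokenize(text: str) -> list[str]:
    """Split text into word and punctuation tokens (illustrative only)."""
    return TOKEN_RE.findall(text)


print(word_tokenize("Don't panic, it's state-of-the-art!"))
print(word_tokenize("New York"))  # the multiword expression is still split in two
```

A different answer to each row of the table (for example, splitting "don't" into "do" + "n't") would need a different pattern or a cascade of patterns.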

Subword Tokenization

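Subword methods balance vocabulary size against token granularity. Because rare words decompose into smaller known units, they largely avoid the out-of-vocabulary problem; byte-level variants eliminate it entirely.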

Byte Pair Encoding (BPE)

- Start with a character-level vocabulary

- Count all adjacent symbol pairs in the corpus

- Merge the most frequent pair into a new symbol

- Repeat for a fixed number of merge operations (typically 30k-50k)

BPE, in a byte-level variant, is used by GPT-2/3/4 and RoBERTa. Given a learned merge table, tokenization at inference is deterministic.
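
The training loop described above can be sketched in a few lines of Python. This is a minimal character-level version under simplifying assumptions (whitespace pre-splitting, no end-of-word marker, ties broken lexicographically). The helper names `merge_pair`, `learn_bpe`, and `apply_bpe` are hypothetical, and production tokenizers differ in detail.

```python
from collections import Counter


def merge_pair(symbols: tuple[str, ...], pair: tuple[str, str]) -> tuple[str, ...]:
    """Replace every adjacent occurrence of `pair` with the merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def learn_bpe(corpus: list[str], num_merges: int) -> list[tuple[str, str]]:
    """Learn an ordered merge table from a list of text lines."""
    # Start from a character-level vocabulary: each word is a tuple of characters.
    words = Counter(tuple(word) for line in corpus for word in line.split())
    merges = []
    for _ in range(num_merges):
        # Count all adjacent symbol pairs, weighted by word frequency.
        pairs = Counter()
        for symbols, freq in words.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += freq
        if not pairs:
            break
        # Most frequent pair; ties are broken by the pair itself (an assumption).
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        merged_words = Counter()
        for symbols, freq in words.items():
            merged_words[merge_pair(symbols, best)] += freq
        words = merged_words
    return merges


def apply_bpe(word: str, merges: list[tuple[str, str]]) -> list[str]:
    """Tokenize one word by replaying the merges in the order they were learned."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


merges = learn_bpe(["low low lower"], num_merges=2)
print(merges)
print(apply_bpe("lowest", merges))
```

Replaying the merge table in a fixed order is what makes inference deterministic: the same word always yields the same token sequence.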

WordPiece

- Similar to BPE but selects merges that maximize likelihood of the training corpus

- Merges the pair that maximizes P(merged) / (P(first) * P(second))

- Used by BERT and DistilBERT

- Prefixes continuation tokens with "##" (e.g., "playing" -> "play" + "##ing")
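
A small sketch may make the two WordPiece ideas above concrete: the merge score used during training and the "##" continuation prefix used at inference. The function names and the toy vocabulary are assumptions for illustration. The score uses raw counts, which ranks pairs the same way as the probability ratio because the two differ only by a constant factor. The tokenizer uses greedy longest-match-first lookup, as in BERT's reference WordPiece tokenizer.

```python
def wordpiece_score(pair_count: int, first_count: int, second_count: int) -> float:
    """Count-based stand-in for P(merged) / (P(first) * P(second))."""
    return pair_count / (first_count * second_count)


def wordpiece_tokenize(word: str, vocab: set[str], unk: str = "[UNK]") -> list[str]:
    """Greedy longest-match-first segmentation with '##' continuation pieces."""
    tokens, start = [], 0
    while start < len(word):
        end, piece = len(word), None
        # Try the longest remaining substring first, then shrink from the right.
        while start < end:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # not at the start of the word
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            return [unk]  # no piece matches: the whole word becomes unknown
        tokens.append(piece)
        start = end
    return tokens


toy_vocab = {"play", "##ing", "##ed", "##er"}  # hypothetical vocabulary
print(wordpiece_tokenize("playing", toy_vocab))
print(wordpiece_tokenize("xyz", toy_vocab))
```

The score favors pairs whose parts rarely occur apart, so a frequent pair made of very common symbols can lose to a rarer but more cohesive pair.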

Unigram Language Model

- Starts with a large vocabulary and iteratively removes tokens whose removal least decreases corpus likelihood

- Each segmentation has a probability; can sample or find the Viterbi-best segmentation

- Used by XLNet, ALBERT, T5
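
To illustrate the Viterbi-best segmentation mentioned above, here is a minimal dynamic-programming sketch. It assumes a hypothetical table `log_probs` that maps each vocabulary piece to its log probability. Training the vocabulary (the pruning loop) and sampling alternative segmentations are not shown.

```python
import math


def viterbi_segment(text: str, log_probs: dict[str, float]) -> list[str]:
    """Return the segmentation of `text` with the highest total log probability."""
    n = len(text)
    best = [-math.inf] * (n + 1)  # best[i]: best score for text[:i]
    back = [0] * (n + 1)          # back[i]: start index of the last piece in text[:i]
    best[0] = 0.0
    for end in range(1, n + 1):
        for start in range(end):
            piece = text[start:end]
            if piece in log_probs and best[start] + log_probs[piece] > best[end]:
                best[end] = best[start] + log_probs[piece]
                back[end] = start
    if best[n] == -math.inf:
        raise ValueError("text cannot be segmented with this vocabulary")
    # Follow the back-pointers from the end of the string to recover the pieces.
    pieces, end = [], n
    while end > 0:
        start = back[end]
        pieces.append(text[start:end])
        end = start
    return pieces[::-1]


# Hypothetical piece probabilities, chosen only for the example.
probs = {"h": 0.1, "e": 0.1, "l": 0.1, "o": 0.1, "lo": 0.05, "hell": 0.05}
log_probs = {piece: math.log(p) for piece, p in probs.items()}
print(viterbi_segment("hello", log_probs))
```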

SentencePiece

- Language-agnostic framework that treats input as a raw stream of Unicode characters, with whitespace encoded as an ordinary symbol (no pre-tokenization)

- Implements both BPE and Unigram algorithms

- Handles any language without whitespace-based pre-splitting (critical for Chinese, Japanese, Thai)

- Supports byte-fallback to handle any Unicode character
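
The byte-fallback idea can be sketched without the library itself. In the toy function below (a hypothetical helper, not the SentencePiece API), any piece missing from the vocabulary is replaced by one token per UTF-8 byte, written in the `<0xNN>` style that SentencePiece uses for its byte pieces.

```python
def encode_with_byte_fallback(pieces: list[str], vocab: set[str]) -> list[str]:
    """Keep known pieces; spell unknown pieces out as UTF-8 byte tokens."""
    out = []
    for piece in pieces:
        if piece in vocab:
            out.append(piece)
        else:
            out.extend(f"<0x{byte:02X}>" for byte in piece.encode("utf-8"))
    return out


toy_vocab = {"\u2581hi"}  # "\u2581" is the visible whitespace marker
print(encode_with_byte_fallback(["\u2581hi", "\u00e9"], toy_vocab))
```

With all 256 byte tokens in the vocabulary, every input string has some encoding, at the cost of longer sequences for rare characters.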

Comparison

| Method | Vocabulary Source | Merge Criterion | Notable Users |
|---|---|---|---|
| BPE | Bottom-up merging | Frequency | GPT family |
| WordPiece | Bottom-up merging | Likelihood gain | BERT |
| Unigram | Top-down pruning | Likelihood loss | T5, XLNet |
| SentencePiece | Framework (BPE/Unigram) | Depends on algorithm | LLaMA, T5 |

Text Normalization

Case Folding

Lowercasing reduces vocabulary but destroys information (e.g., "US" vs "us"). Modern models typically preserve case and let the model learn case sensitivity.

Unicode Normalization and UTF-8

Unicode assigns codepoints to characters; UTF-8 encodes them in 1-4 bytes.

- NFC (Canonical Composition): combines decomposed characters ("e" + combining acute accent -> "é")

- NFKC (Compatibility Composition): also normalizes compatibility variants (ligatures, width forms)

- Always normalize to a consistent form before tokenization to avoid duplicate vocabulary entries
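
The difference between the two forms is easy to check with Python's standard `unicodedata` module. The wrapper name `normalize_text` is an assumption for this sketch; the escapes are used so the code points are unambiguous.

```python
import unicodedata


def normalize_text(text: str, form: str = "NFKC") -> str:
    """Return `text` in the requested Unicode normalization form."""
    return unicodedata.normalize(form, text)


decomposed = "e\u0301"  # "e" + COMBINING ACUTE ACCENT (two code points)
ligature = "\ufb01"     # LATIN SMALL LIGATURE FI (one code point)

print(normalize_text(decomposed, "NFC") == "\u00e9")  # True: composed into one code point
print(normalize_text(ligature, "NFC") == ligature)    # True: NFC leaves the ligature alone
print(normalize_text(ligature, "NFKC"))               # fi
print(len("\u00e9".encode("utf-8")))                  # 2 (bytes in UTF-8)
```

Without normalization, the composed and decomposed spellings of the same visible character would be tokenized as different strings.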

Stemming

Reduces words to a stem by stripping suffixes heuristically.

- Porter Stemmer: rule-based suffix stripping (e.g., "running" -> "run", "studies" -> "studi")

- Snowball Stemmer: improved Porter with multilingual support

- Fast but crude; the output is often not a dictionary word (as with "studi")
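
The flavor of heuristic suffix stripping can be shown with a deliberately tiny stemmer. This is not the Porter or Snowball algorithm; the rules and the name `crude_stem` are assumptions chosen only to reproduce the two examples above.

```python
def crude_stem(word: str) -> str:
    """Strip a few English suffixes heuristically (toy rules, not Porter)."""
    if word.endswith("ies") and len(word) > 4:
        return word[:-3] + "i"                      # "studies" -> "studi"
    for suffix in ("ing", "ed"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            stem = word[: -len(suffix)]
            # Undo consonant doubling ("runn" -> "run"), but keep "ll", "ss", "zz".
            if stem[-1] == stem[-2] and stem[-1] not in "aeioulsz":
                stem = stem[:-1]
            return stem
    if word.endswith("s") and not word.endswith("ss") and len(word) > 3:
        return word[:-1]                            # "cats" -> "cat"
    return word


for w in ["running", "studies", "cats", "glass", "sing"]:
    print(w, "->", crude_stem(w))
```

No dictionary is consulted at any point, which is why the approach is fast and why it can emit strings that are not words.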

Lemmatization

Maps words to their dictionary form (lemma) using morphological analysis.

- "better" -> "good", "ran" -> "run", "mice" -> "mouse"

- Requires POS information for disambiguation ("saw" noun vs verb)

- Common implementations: WordNet lemmatizer (NLTK), spaCy, Stanza

- More accurate than stemming but slower
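
Why POS information matters can be seen in a toy lookup. The table below is a hypothetical stand-in for real morphological analysis, and the tag names are assumptions. The same surface form "saw" maps to different lemmas depending on its tag.

```python
# Hypothetical (word, POS) -> lemma table; real lemmatizers use full lexicons.
LEMMA_TABLE = {
    ("better", "ADJ"): "good",
    ("ran", "VERB"): "run",
    ("mice", "NOUN"): "mouse",
    ("saw", "VERB"): "see",
    ("saw", "NOUN"): "saw",
}


def lemmatize(word: str, pos: str) -> str:
    """Look up the lemma for a word and POS tag; fall back to the lowercased word."""
    key = (word.lower(), pos)
    return LEMMA_TABLE.get(key, word.lower())


print(lemmatize("saw", "VERB"))  # see
print(lemmatize("saw", "NOUN"))  # saw
print(lemmatize("Mice", "NOUN"))  # mouse
```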

Stemming vs Lemmatization

| Aspect | Stemming | Lemmatization |
|---|---|---|
| Output | May be non-word | Always valid word |
| Speed | Fast | Slower |
| Context needed | No | Often (POS tag) |
| Use case | IR, search indexing | Text understanding tasks |

Stop Word Removal

Stop words are high-frequency, low-information words (the, is, at, of). Removing them reduces dimensionality for bag-of-words models.

Caveats:

- Negation words ("not", "no") carry critical sentiment information

- Phrases depend on stop words ("to be or not to be")

- Modern neural models generally do not remove stop words; the model learns to ignore them

- Custom stop word lists per domain often outperform generic lists
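
A filter that respects the first caveat might look like the sketch below. The stop list and the `keep` set are small illustrative assumptions, not a standard list; the point is that negations can be protected even when a generic list contains them.

```python
STOP_WORDS = frozenset({"the", "is", "at", "of", "a", "not", "no"})
NEGATIONS = frozenset({"not", "no"})


def remove_stop_words(tokens, stop_words=STOP_WORDS, keep=NEGATIONS):
    """Drop stop words, except those explicitly protected in `keep`."""
    return [
        tok for tok in tokens
        if tok.lower() not in stop_words or tok.lower() in keep
    ]


tokens = ["The", "movie", "is", "not", "good"]
print(remove_stop_words(tokens))                    # ['movie', 'not', 'good']
print(remove_stop_words(tokens, keep=frozenset()))  # ['movie', 'good']
```

The second call shows how a generic list flips the apparent sentiment of the sentence.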

Sentence Segmentation

Splitting text into sentences is harder than it appears.

| Challenge | Example |
|---|---|
| Abbreviations | "Dr. Smith went to Washington." |
| Ellipsis | "Wait... what?" |
| Decimal numbers | "The price is 3.50 dollars." |
| Quoted speech | She said, "Hello." He nodded. |

Approaches:

- Rule-based or unsupervised: hand-written boundary rules, or the Punkt tokenizer, which learns abbreviations from unlabeled text

- ML-based: binary classifier on each period (features: word length, case, abbreviation lists)

- Neural: spaCy's sentence segmenter, Stanza
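
A hand-written splitter that copes with the four challenges in the table could be sketched as follows. The abbreviation list, the regular expression, and the name `split_sentences` are assumptions for illustration; this is not the Punkt algorithm, and real text will expose cases it misses.

```python
import re

ABBREVIATIONS = frozenset({"dr", "mr", "mrs", "ms", "prof", "etc", "vs"})

# Candidate boundary: sentence-final punctuation, optional closing quote or
# bracket, whitespace, then something that looks like a sentence start.
BOUNDARY_RE = re.compile(r'([.!?]+["\')\]]*)\s+(?=["\'(\[]?[A-Z])')


def split_sentences(text: str) -> list[str]:
    """Split text at candidate boundaries that do not follow an abbreviation."""
    sentences, start = [], 0
    for match in BOUNDARY_RE.finditer(text):
        words = text[start:match.start()].split()
        last_word = words[-1].lower().strip("\"'([") if words else ""
        if last_word in ABBREVIATIONS:
            continue  # "Dr." and similar: not a sentence end
        sentences.append(text[start:match.end(1)].strip())
        start = match.end()
    tail = text[start:].strip()
    if tail:
        sentences.append(tail)
    return sentences


sample = 'Dr. Smith went to Washington. The price is 3.50 dollars. She said, "Hello." He nodded.'
for sentence in split_sentences(sample):
    print(sentence)
```

Decimal numbers survive because no whitespace follows the period, and the ellipsis in "Wait... what?" survives because the next word is lowercase.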

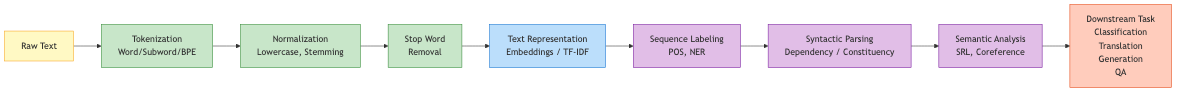

Preprocessing Pipelines

A typical pipeline applies steps in order. The exact steps depend on the task and model.

Raw Text

-> Unicode normalization (NFKC)

-> Sentence segmentation

-> Tokenization (subword for neural, word-level for classical)

-> Optional: lowercasing, stop word removal, stemming/lemmatization

-> Numericalization (token -> integer ID)
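
The last step, numericalization, is simple enough to sketch directly. The special tokens `<pad>` and `<unk>` and the function names are assumptions; IDs are assigned in order of first appearance.

```python
def build_vocab(token_lists, specials=("<pad>", "<unk>")):
    """Assign an integer ID to every distinct token, special tokens first."""
    vocab = {tok: i for i, tok in enumerate(specials)}
    for tokens in token_lists:
        for tok in tokens:
            vocab.setdefault(tok, len(vocab))
    return vocab


def numericalize(tokens, vocab, unk="<unk>"):
    """Map tokens to IDs, sending unseen tokens to the unknown ID."""
    return [vocab.get(tok, vocab[unk]) for tok in tokens]


vocab = build_vocab([["the", "cat"], ["the", "dog"]])
print(vocab)                                 # {'<pad>': 0, '<unk>': 1, 'the': 2, 'cat': 3, 'dog': 4}
print(numericalize(["the", "bird"], vocab))  # [2, 1]
```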

Classical NLP Pipeline (e.g., for TF-IDF + SVM)

- Lowercase

- Remove punctuation and special characters

- Tokenize (word-level)

- Remove stop words

- Stem or lemmatize

- Build vocabulary and vectorize
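
The classical steps above can be chained into one small function. This sketch uses raw term counts rather than TF-IDF weights, a tiny illustrative stop list, and a placeholder stemmer that only strips a final "s"; all names are assumptions for the example.

```python
import re
from collections import Counter

STOP_WORDS = frozenset({"the", "is", "a", "on", "of"})


def classical_preprocess(text: str) -> list[str]:
    """Lowercase, strip punctuation, tokenize, drop stop words, crudely stem."""
    text = text.lower()
    text = re.sub(r"[^\w\s]", " ", text)  # punctuation and special characters
    tokens = text.split()                 # word-level tokenization
    tokens = [t for t in tokens if t not in STOP_WORDS]
    # Placeholder stemmer: strip a plural "s" from longer words.
    return [t[:-1] if t.endswith("s") and len(t) > 3 else t for t in tokens]


def vectorize(docs: list[str]):
    """Build a sorted vocabulary and one term-count vector per document."""
    processed = [classical_preprocess(doc) for doc in docs]
    vocab = sorted({tok for doc in processed for tok in doc})
    vectors = []
    for doc in processed:
        counts = Counter(doc)
        vectors.append([counts[tok] for tok in vocab])
    return vocab, vectors


vocab, vectors = vectorize(["The cat sat on the mat.", "Cats chase the cat!"])
print(vocab)    # ['cat', 'chase', 'mat', 'sat']
print(vectors)  # [[1, 0, 1, 1], [2, 1, 0, 0]]
```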

Modern Neural Pipeline (e.g., for BERT)

- Unicode normalize

- Apply pretrained tokenizer (WordPiece) -- handles casing, subwords

- Add special tokens ([CLS], [SEP])

- Convert to IDs and create attention masks

- No stop word removal, no stemming
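
In practice the tokenizer shipped with the pretrained model performs these steps. The toy function below only illustrates what the output looks like, using a hypothetical six-entry vocabulary and already-split WordPiece tokens as input.

```python
def encode_batch(token_lists, vocab, max_len):
    """Add [CLS]/[SEP], map to IDs, pad to max_len, and build attention masks."""
    input_ids, attention_masks = [], []
    for tokens in token_lists:
        # Truncate so the two special tokens still fit within max_len.
        tokens = ["[CLS]"] + tokens[: max_len - 2] + ["[SEP]"]
        ids = [vocab.get(tok, vocab["[UNK]"]) for tok in tokens]
        padding = max_len - len(ids)
        input_ids.append(ids + [vocab["[PAD]"]] * padding)
        attention_masks.append([1] * len(ids) + [0] * padding)  # 0 marks padding
    return input_ids, attention_masks


toy_vocab = {"[PAD]": 0, "[UNK]": 1, "[CLS]": 2, "[SEP]": 3, "play": 4, "##ing": 5}
ids, masks = encode_batch([["play", "##ing"], ["play"]], toy_vocab, max_len=5)
print(ids)    # [[2, 4, 5, 3, 0], [2, 4, 3, 0, 0]]
print(masks)  # [[1, 1, 1, 1, 0], [1, 1, 1, 0, 0]]
```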

Practical Considerations

Whitespace and Noise

- HTML tags, URLs, email addresses, @mentions, hashtags all need task-specific handling

- Regular expressions for cleaning must be carefully ordered, because each substitution changes the text that later patterns see
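
Ordering can be made explicit by keeping the substitutions in a list. The patterns and placeholder tokens below (`<URL>`, `<EMAIL>`, `<USER>`) are illustrative assumptions, not a complete cleaner. HTML tags are stripped first; if that step ran last, it would also delete the angle-bracket placeholders.

```python
import re

# Applied strictly in this order.
CLEANING_STEPS = [
    (re.compile(r"<[^>]+>"), " "),                             # HTML tags
    (re.compile(r"https?://\S+"), "<URL>"),                    # URLs
    (re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"), "<EMAIL>"),  # email addresses
    (re.compile(r"(?<!\w)@\w+"), "<USER>"),                    # @mentions
    (re.compile(r"\s+"), " "),                                 # collapse whitespace
]


def clean(text: str) -> str:
    """Run every cleaning substitution in order and trim the result."""
    for pattern, replacement in CLEANING_STEPS:
        text = pattern.sub(replacement, text)
    return text.strip()


raw = "<p>Mail bob@example.com or see https://example.com/a?b=1 via @alice</p>"
print(clean(raw))  # Mail <EMAIL> or see <URL> via <USER>
```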

Multilingual Text

- Language detection (fastText lid, CLD3) before language-specific processing

- Transliteration to a common script can help with code-switched text

- Shared subword vocabularies (SentencePiece) enable multilingual models

Reproducibility

- Pin tokenizer versions; vocabulary changes break model compatibility

- Document normalization choices; NFKC vs NFC matters for reproducibility

- Store tokenizer artifacts alongside model checkpoints
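
One lightweight way to detect silent tokenizer drift is to store a fingerprint of the vocabulary and normalization settings next to the checkpoint. The function below is a hypothetical sketch using only the standard library; what exactly goes into the fingerprint is an assumption and should cover whatever affects tokenization in a given setup.

```python
import hashlib
import json


def tokenizer_fingerprint(vocab: dict[str, int], normalization: str) -> str:
    """Return a SHA-256 hex digest over the vocabulary and normalization form."""
    payload = json.dumps(
        {"normalization": normalization, "vocab": vocab},
        sort_keys=True,     # make the digest independent of dict insertion order
        ensure_ascii=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


saved = tokenizer_fingerprint({"a": 0, "b": 1}, "NFKC")
loaded = tokenizer_fingerprint({"b": 1, "a": 0}, "NFKC")
print(saved == loaded)                                        # True: same tokenizer
print(saved == tokenizer_fingerprint({"a": 0, "b": 1}, "NFC"))  # False: normalization differs
```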

Key Takeaways

- Subword tokenization (BPE, WordPiece, Unigram) has largely replaced word-level tokenization in neural NLP

- SentencePiece enables language-agnostic preprocessing by operating on raw text without whitespace-based pre-splitting

- Classical preprocessing (stemming, stop words) is still relevant for bag-of-words and search applications

- Modern pretrained models rely on their bundled tokenizers for most normalization; minimal additional preprocessing is preferred

- Unicode normalization is essential for consistent tokenization across different text sources