Memory Management

Memory management determines how programs allocate, use, and free memory. The choice of strategy profoundly affects performance, safety, and developer experience.

Stack vs Heap

Stack

LIFO allocation. Each function call pushes a stack frame; return pops it.

Stack:

┌──────────────────┐

│ bar() locals │ ← SP (stack pointer)

├──────────────────┤

│ foo() locals │

├──────────────────┤

│ main() locals │

└──────────────────┘

Properties:

- Allocation/deallocation: O(1) — just move the stack pointer.

- Automatically freed when function returns.

- Fixed size (typically 1-8 MB per thread).

- LIFO constraint: can't free memory below the top of stack.

- Data must have known size at compile time.

Heap

Dynamic allocation. Memory allocated and freed in any order.

Properties:

- Allocation: O(1) amortized to O(log n) depending on allocator.

- Must be explicitly freed (or garbage collected).

- Size limited by available RAM.

- No ordering constraint.

- Supports runtime-determined sizes (dynamic arrays, objects).

// Stack allocation

x ← 42 // on stack

// Heap allocation

y ← HEAP_ALLOC(42) // on heap

v ← [1, 2, 3] // buffer on heap

Manual Memory Management

malloc/free (C)

int *arr = (int *)malloc(100 * sizeof(int));

// use arr...

free(arr);

arr = NULL; // good practice

Problems:

- Memory leaks: Forgetting to call free.

- Double free: Freeing the same memory twice → undefined behavior.

- Use-after-free: Accessing memory after freeing → undefined behavior.

- Buffer overflow: Writing past allocated bounds.

- Dangling pointers: Pointer to freed memory.

RAII (Resource Acquisition Is Initialization)

C++ and Rust approach: Tie resource lifetime to object scope. When the object goes out of scope, its destructor frees the resource.

BEGIN BLOCK

v ← [1, 2, 3] // allocates on heap

// use v...

END BLOCK // v goes out of scope -> destructor runs -> heap memory freed

// No manual free needed. No leaks possible (in safe code).

{

std::vector<int> v = {1, 2, 3}; // allocates on heap

// use v...

} // destructor runs → memory freed

Advantages: Deterministic deallocation. No GC pauses. Resource management (files, sockets, locks) works the same way.

Garbage Collection

Automatically reclaim memory that is no longer reachable.

Mark-and-Sweep

- Mark phase: Starting from roots (stack, globals), traverse all reachable objects and mark them.

- Sweep phase: Scan the heap. Free unmarked (unreachable) objects.

Roots: [A, B]

A → C → D

B → C

After mark: A, B, C, D marked (reachable)

E, F unmarked → freed

Advantages: Handles cycles. Simple. Disadvantages: Stop-the-world pauses. Fragmentation.

Copying Collector

Divide heap into two semi-spaces. Copy live objects from one to the other.

From-space: [A][garbage][B][garbage][C]

To-space: [A][B][C][ ]

Advantages: No fragmentation (objects are compacted). Allocation is O(1) (bump pointer). Disadvantages: Wastes half the memory. Copies all live data (expensive if most data is live).

Generational GC

Observation: Most objects die young (generational hypothesis). Focus GC effort on young objects.

Eden (Young Gen) → Survivor → Old Gen (Tenured)

(frequent GC) (infrequent GC)

Minor GC: Collect the young generation. Fast (most young objects are garbage). Stop-the-world but brief.

Major GC: Collect the old generation. Slow (lots of live data). Longer pauses.

Used by: JVM (G1, ZGC, Shenandoah), .NET, Go, V8 (JavaScript).

Concurrent GC

Run GC concurrently with the application to reduce pauses.

G1 (Garbage-First): JVM default. Divides heap into regions. Collects regions with most garbage first. Pause targets (e.g., 200ms).

ZGC (Java 11+): Sub-millisecond pauses regardless of heap size. Uses colored pointers and load barriers.

Shenandoah (Java 12+): Similar goals to ZGC. Concurrent compaction.

Go GC: Concurrent, generational since Go 1.5. Very low pauses (<1ms typical).

Incremental GC

Break GC work into small increments interleaved with application execution. Reduces worst-case pause time.

Reference Counting

Each object tracks how many references point to it. When count reaches zero, free immediately.

a ← REF_COUNTED([1, 2, 3]) // refcount = 1

b ← CLONE_REF(a) // refcount = 2

DROP(b) // refcount = 1

DROP(a) // refcount = 0 -> freed

Advantages: Deterministic deallocation (immediate when last reference is dropped). No pauses. Simple.

Disadvantages: Cannot handle cycles (A → B → A, both have refcount ≥ 1 forever → leak). Per-operation overhead (increment/decrement on every copy/drop). Thread-safety requires atomic operations (Arc in Rust).

Cycle Detection

Weak references: Break cycles by using non-owning references that don't prevent deallocation.

CLASS Node

parent: WEAK_REF(Node) or NIL // weak: doesn't prevent parent from being freed

children: list of REF_COUNTED(Node) // strong: keeps children alive

Tracing + refcounting: Python uses reference counting + periodic cycle detection (mark-and-sweep on the cycle detector).

Ownership and Borrowing (Rust Model)

Rust's unique approach: compile-time memory management with no GC.

Ownership Rules

- Each value has exactly one owner.

- When the owner goes out of scope, the value is dropped (freed).

- Ownership can be moved (transferred) but not duplicated (unless the type implements Copy).

s1 ← "hello"

s2 ← MOVE(s1) // s1 is MOVED to s2. s1 is no longer valid.

// PRINT s1 // COMPILE ERROR: value moved

PRINT s2 // OK

Borrowing

References (&T, &mut T) borrow values without taking ownership.

Rules:

- Any number of shared references (&T) simultaneously, OR

- Exactly one mutable reference (&mut T), but not both.

s ← "hello"

r1 ← SHARED_REF(s) // shared borrow

r2 ← SHARED_REF(s) // another shared borrow — OK

// r3 ← MUTABLE_REF(s) // COMPILE ERROR: can't borrow mutably while shared borrows exist

PRINT r1, " ", r2

// r1, r2 no longer used after this point

r3 ← MUTABLE_REF(s) // OK now — shared borrows are done

APPEND " world" TO r3

Lifetimes

Compile-time annotations ensuring references don't outlive the data they point to.

FUNCTION LONGEST(x, y) -> reference

// Lifetime annotation: returned reference lives as long as the shorter of x and y

IF length(x) > length(y) THEN RETURN x

ELSE RETURN y

Lifetime elision: The compiler infers lifetimes in common cases. Explicit annotations needed only for ambiguous situations.

What Rust Prevents (at compile time)

- Use-after-free: Ownership ensures data lives long enough.

- Double free: Only one owner → only one drop.

- Data races: &mut exclusivity prevents concurrent mutation.

- Null pointer dereference: No null — use Option

. - Dangling pointers: Lifetimes prevent references from outliving data.

Arena Allocation

Allocate many objects from a pool (arena). Free them all at once when the arena is dropped.

// Conceptual arena

CLASS Arena

FIELDS: chunks (list of memory blocks), current (index)

FUNCTION ALLOC(val)

// Allocate from current chunk, return reference

// When Arena is dropped, all allocations are freed at once

Advantages: Very fast allocation (bump pointer). No individual frees. No fragmentation. Disadvantages: Can't free individual objects. Memory not reclaimed until arena is dropped.

Used in: Compilers (AST nodes, types), game engines (per-frame allocations), parsers, web request handlers.

Rust crate: bumpalo, typed-arena.

Region-Based Management

Generalization of arenas. Associate objects with regions (lexical scopes). All objects in a region are freed when the region ends.

Cyclone, MLKit: Languages with region-based memory management. Tomasulo-like inference determines region lifetimes.

Escape Analysis

Compiler optimization: determine if an object escapes the function that creates it.

- Doesn't escape: Allocate on the stack instead of the heap (much faster).

- Escapes: Must allocate on the heap.

JVM: HotSpot JIT performs escape analysis. Non-escaping objects are stack-allocated or eliminated entirely (scalar replacement).

Go: The compiler decides stack vs heap allocation based on escape analysis.

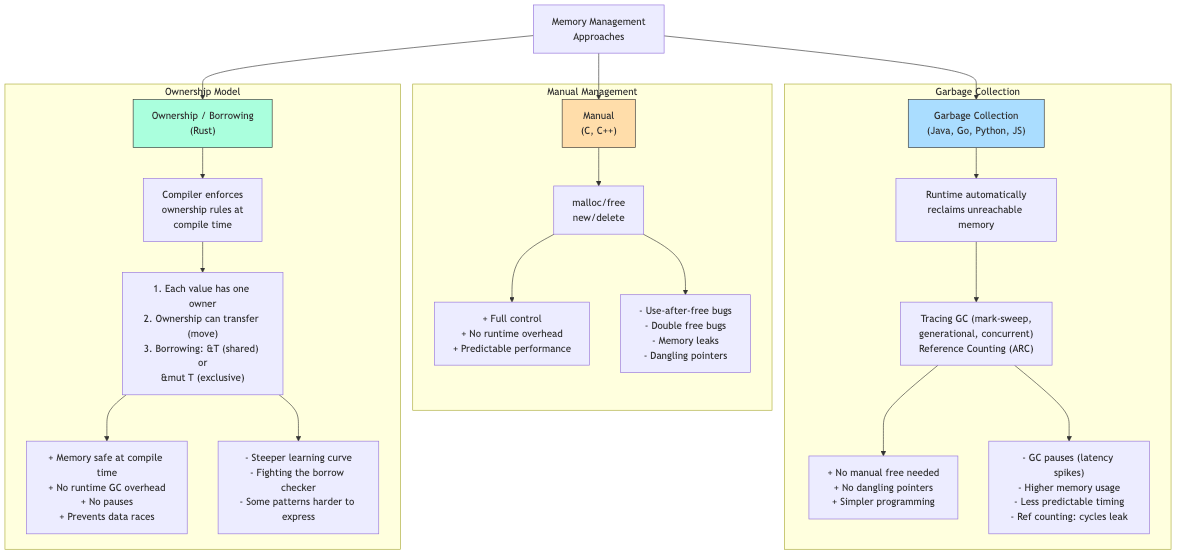

GC vs Manual vs Ownership

| Aspect | Manual (C) | GC (Java/Go) | Ownership (Rust) |

|---|---|---|---|

| Memory bugs | Common | Impossible (for memory) | Impossible (safe Rust) |

| Performance | Best (if correct) | Good (GC pauses) | Best (no GC, no overhead) |

| Developer effort | High (manual tracking) | Low (automatic) | Medium (satisfy borrow checker) |

| Deterministic cleanup | Yes (explicit free) | No (GC decides when) | Yes (drop at scope end) |

| Latency spikes | No (no GC) | Yes (GC pauses) | No (no GC) |

| Concurrency safety | None (manual) | Partial (GC helps) | Full (ownership + Send/Sync) |

Applications in CS

- Systems programming: Manual or ownership-based. OS kernels, databases, game engines need deterministic performance.

- Application programming: GC is standard. Web servers, business logic, scripting.

- Real-time systems: GC pauses are unacceptable. Manual or ownership-based.

- Embedded systems: No heap allocation (stack only) or manual with arenas. Very constrained memory.

- Web browsers: GC for JavaScript. Manual/arena for rendering engine (Servo uses Rust's ownership).

- Database engines: Custom allocators, arena allocation for query execution, buffer pool management.