Speech Synthesis (TTS)

Overview

Text-to-Speech (TTS) converts written text into natural-sounding speech. Modern neural TTS systems have achieved near-human quality for many languages and speaking styles.

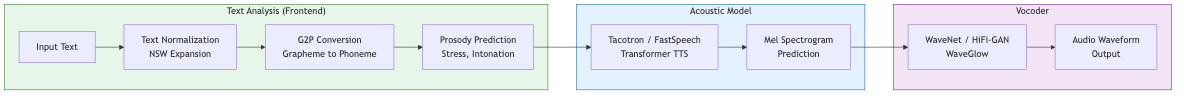

Text -> Text Analysis -> Acoustic Model -> Vocoder -> Waveform

Text Analysis (Front-End)

Text Normalization

Convert non-standard words (NSW) to spoken-form text:

| Input | Output |
|---|---|
| $3.50 | three dollars and fifty cents |
| Dr. Smith | doctor smith |
| 01/15/2025 | january fifteenth twenty twenty-five |
| 2nd | second |
| IEEE | I triple E |
| St. | street / saint (context-dependent) |

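Many inputs need context to disambiguate: "1/2" can be "one half" or "January second", and "St." can be "street" or "saint". Homographs such as "read" (past vs present) are also ambiguous, although that ambiguity affects pronunciation rather than the normalized text. Systems typically combine hand-written rules with neural classifiers for the ambiguous cases.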
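
As a rough illustration of the rule-based part, the sketch below expands small dollar amounts and one unambiguous abbreviation. The lookup tables and the names `number_to_words`, `expand_currency`, and `normalize` are hypothetical and exist only for this example; a production normalizer covers far more categories and uses context for cases such as "St.".

```python
import re

# Hypothetical, deliberately tiny lookup tables for illustration only.
ONES = ["zero", "one", "two", "three", "four", "five", "six", "seven",
        "eight", "nine", "ten", "eleven", "twelve", "thirteen", "fourteen",
        "fifteen", "sixteen", "seventeen", "eighteen", "nineteen"]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
        "eighty", "ninety"]
ABBREVIATIONS = {"Dr.": "doctor"}  # "St." is left out: it needs context


def number_to_words(n: int) -> str:
    """Spell out an integer in the range 0..99."""
    if n not in range(100):
        raise ValueError("this sketch only handles 0..99")
    if n in range(20):
        return ONES[n]
    tens, ones = divmod(n, 10)
    return TENS[tens] if ones == 0 else f"{TENS[tens]}-{ONES[ones]}"


def expand_currency(match: re.Match) -> str:
    """Turn a regex match for $D.CC into its spoken form."""
    dollars, cents = int(match.group(1)), int(match.group(2))
    dollar_word = "dollar" if dollars == 1 else "dollars"
    cent_word = "cent" if cents == 1 else "cents"
    return (f"{number_to_words(dollars)} {dollar_word} and "
            f"{number_to_words(cents)} {cent_word}")


def normalize(text: str) -> str:
    # Currency of the form $D.CC with at most two dollar digits.
    text = re.sub(r"\$(\d{1,2})\.(\d{2})", expand_currency, text)
    # Abbreviations that have a single reading.
    for abbreviation, spoken in ABBREVIATIONS.items():
        text = text.replace(abbreviation, spoken)
    return text.lower()


print(normalize("$3.50"))      # three dollars and fifty cents
print(normalize("Dr. Smith"))  # doctor smith
```

Ordering matters in a rule cascade like this: currency is expanded before lowercasing so that later rules can still rely on capitalization.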

Grapheme-to-Phoneme (G2P)

Convert text to phonemic representation:

- Pronunciation dictionaries: CMUdict (English, ~134k words), lexicon lookup

- Rule-based: Letter-to-sound rules (effective for regular orthographies like Spanish)

- Sequence-to-sequence models: Neural G2P for out-of-vocabulary words

- Heteronyms: Words spelled the same but pronounced differently ("live", "bass", "bow"); choosing the pronunciation requires context such as part of speech or word sense

Output typically uses IPA or ARPABET phoneme sets. Some modern end-to-end models skip explicit G2P and operate on characters or graphemes directly.
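
A common arrangement is dictionary lookup first, with a fallback for out-of-vocabulary words. The sketch below assumes a toy lexicon whose two entries follow CMUdict's ARPABET format, plus a hypothetical one-letter-per-phoneme rule table (`LETTER_RULES`) standing in for a real rule set or neural G2P model.

```python
# Toy lexicon in ARPABET with stress digits; entries are hand-picked here.
LEXICON = {
    "speech": ["S", "P", "IY1", "CH"],
    "cat": ["K", "AE1", "T"],
}

# Hypothetical fallback rules: one letter maps to one phoneme.
# This is far too crude for real English and only illustrates the control flow.
LETTER_RULES = {"a": "AE", "b": "B", "d": "D", "k": "K",
                "m": "M", "n": "N", "p": "P", "s": "S", "t": "T"}


def g2p(word: str) -> list:
    """Return phonemes from the lexicon, else from letter-to-sound rules."""
    key = word.lower()
    if key in LEXICON:
        return list(LEXICON[key])
    # Out-of-vocabulary: apply the rules letter by letter, skipping unknowns.
    return [LETTER_RULES[ch] for ch in key if ch in LETTER_RULES]


print(g2p("Speech"))  # ['S', 'P', 'IY1', 'CH']
print(g2p("tam"))     # ['T', 'AE', 'M']
```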

Prosody Prediction

Determine the rhythm, stress, and intonation of the utterance:

- Phrasing: Where to place pauses and phrase boundaries

- Stress: Which syllables receive emphasis

- Intonation: F0 contour (rising for questions, falling for statements)

- Duration: How long each phoneme/word should last

- ToBI (Tones and Break Indices): Standard prosodic annotation framework

Prosody carries meaning beyond the words themselves: compare "You did THAT?" with "YOU did that?" Neural models increasingly learn prosody implicitly from data rather than through an explicit prediction step.

Concatenative Synthesis

The dominant approach before neural TTS (~pre-2016):

- Record a large speech corpus from a single speaker (10-40 hours)

- Segment into units (diphones, half-phones, or variable-length units)

- At synthesis time, select optimal unit sequence minimizing:

  - Target cost: How well each unit matches desired linguistic features

  - Join cost: How smooth the concatenation boundaries sound

Unit selection synthesis searches the full database for the sequence with the lowest combined cost. Quality is high when well-matching units exist but degrades for out-of-domain text. The approach also requires storing the whole recorded corpus and is limited to the recorded speaker.
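
The search over target and join costs is usually done with dynamic programming (Viterbi). The sketch below is a minimal version under strong assumptions: each unit is reduced to three hypothetical F0 numbers, target cost is the distance to a desired pitch, and join cost is the F0 jump at the boundary. Real systems use many more features and weights.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Unit:
    name: str
    pitch: float     # mean F0 in Hz; stands in for all linguistic features
    start_f0: float  # F0 at the left edge of the unit
    end_f0: float    # F0 at the right edge of the unit


def target_cost(unit, desired_pitch):
    return abs(unit.pitch - desired_pitch)


def join_cost(left, right):
    return abs(left.end_f0 - right.start_f0)


def select_units(candidates, desired):
    """candidates[i] lists the units available for position i.

    Returns (best unit sequence, total cost).
    """
    # best[i][j] = (cheapest cost of a path ending in candidates[i][j], backpointer)
    best = [[(target_cost(u, desired[0]), None) for u in candidates[0]]]
    for i in range(1, len(candidates)):
        row = []
        for unit in candidates[i]:
            costs = [best[i - 1][k][0] + join_cost(prev, unit)
                     for k, prev in enumerate(candidates[i - 1])]
            k_best = min(range(len(costs)), key=costs.__getitem__)
            row.append((costs[k_best] + target_cost(unit, desired[i]), k_best))
        best.append(row)

    # Backtrack from the cheapest final unit.
    j = min(range(len(best[-1])), key=lambda k: best[-1][k][0])
    total = best[-1][j][0]
    path = []
    for i in range(len(candidates) - 1, -1, -1):
        path.append(candidates[i][j])
        j = best[i][j][1]
    path.reverse()
    return path, total


candidates = [
    [Unit("a1", 100.0, 100.0, 100.0), Unit("a2", 120.0, 120.0, 130.0)],
    [Unit("b1", 110.0, 100.0, 110.0), Unit("b2", 115.0, 150.0, 150.0)],
]
path, total = select_units(candidates, desired=[120.0, 110.0])
print([u.name for u in path], total)  # ['a1', 'b1'] 20.0
```

In this example `a2` matches the first target exactly, yet the search picks `a1` because it joins to `b1` without an F0 jump. That trade-off between target and join cost is the core of unit selection.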

Statistical Parametric Synthesis

Generate speech parameters (F0, spectral envelope, aperiodicity) from linguistic features using statistical models, then synthesize waveform with a vocoder:

- HMM-based (HTS): Model speech parameters with HMMs, generate from statistics

- DNN-based: Replace HMM with neural networks mapping linguistic features to acoustics

- More flexible than concatenative (voice adaptation, style control)

- Historically suffered from "buzzy" vocoder quality (STRAIGHT, WORLD vocoders)

Neural TTS

Tacotron / Tacotron 2

Attention-based sequence-to-sequence model (Google, 2017-2018):

Characters/Phonemes -> Encoder -> Attention -> Decoder -> Mel-spectrogram -> Vocoder

- Encoder: Convolution layers + BiLSTM process input text

- Location-sensitive attention: Encourages monotonic left-to-right alignment

- Decoder: Autoregressive LSTM predicts mel frames with stop token

- Post-net: Residual convolution layers refine mel-spectrogram

Produces high-quality mel-spectrograms but is slow (autoregressive frame-by-frame generation) and can suffer from attention failures (skipping, repeating, or failing to align).

FastSpeech / FastSpeech 2

Non-autoregressive (parallel) TTS for fast inference:

- Duration predictor: Predicts phoneme durations; a length regulator repeats each encoder output for that many frames

- Variance adaptor (FastSpeech 2): Predicts duration, pitch, and energy explicitly

- Parallel generation: All mel frames generated simultaneously

- Training: Knowledge distillation from autoregressive teacher (FastSpeech 1) or direct training with forced alignments (FastSpeech 2)

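Inference is roughly 10-50x faster than with autoregressive models, with the exact speedup depending on hardware, utterance length, and vocoder. The design eliminates attention failure modes, at a slight quality cost versus the best autoregressive models.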
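
The expansion step that makes parallel generation possible is simple enough to sketch directly. The function name `length_regulate` and the string placeholders for encoder vectors are assumptions for this example; in a real model the inputs are tensors and the durations come from the duration predictor.

```python
def length_regulate(hidden, durations):
    """Repeat each phoneme-level vector for its predicted number of frames."""
    if len(hidden) != len(durations):
        raise ValueError("need exactly one duration per phoneme")
    frames = []
    for vector, n_frames in zip(hidden, durations):
        frames.extend([vector] * n_frames)
    return frames


# Three phonemes lasting 2, 1, and 3 mel frames.
print(length_regulate(["h0", "h1", "h2"], [2, 1, 3]))
# ['h0', 'h0', 'h1', 'h2', 'h2', 'h2']
```

Because the output length is known once durations are predicted, the decoder can produce every mel frame in one pass instead of waiting for a stop token.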

VITS (Variational Inference with adversarial learning for TTS)

End-to-end model producing waveforms directly from text:

- Combines VAE, normalizing flows, and adversarial training

- Posterior encoder: Encodes ground-truth audio to latent space

- Prior encoder: Maps text to latent distribution via normalizing flows

- HiFi-GAN decoder: Generates waveform from latent representation

- Monotonic alignment search (MAS): Learns alignment without attention

Single-stage (no separate vocoder needed), high quality, relatively fast.
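
Monotonic alignment search can be read as a Viterbi-style dynamic program: each audio frame is assigned to exactly one text token, token indices never decrease, and no token is skipped. The pure-Python sketch below assumes a precomputed matrix `log_lik[i][j]` (log-likelihood of frame `j` under token `i`); the function name and the list-based layout are choices made for this example, and real implementations are compiled or vectorized for speed.

```python
NEG_INF = float("-inf")


def monotonic_alignment_search(log_lik):
    """Return, for each frame j, the index of the token it is aligned to."""
    n_tokens, n_frames = len(log_lik), len(log_lik[0])
    if n_frames < n_tokens:
        raise ValueError("need at least one frame per token")

    # q[i][j] = best total log-likelihood of a path ending at (token i, frame j)
    q = [[NEG_INF] * n_frames for _ in range(n_tokens)]
    q[0][0] = log_lik[0][0]
    for j in range(1, n_frames):
        for i in range(min(j + 1, n_tokens)):
            stay = q[i][j - 1]                              # same token as frame j-1
            advance = q[i - 1][j - 1] if i > 0 else NEG_INF  # moved on from token i-1
            q[i][j] = log_lik[i][j] + max(stay, advance)

    # Backtrack from the last token at the last frame.
    alignment = [0] * n_frames
    i = n_tokens - 1
    for j in range(n_frames - 1, -1, -1):
        alignment[j] = i
        if j > 0 and i > 0 and q[i - 1][j - 1] >= q[i][j - 1]:
            i -= 1
    return alignment


log_lik = [
    [0.0, 0.0, -5.0, -5.0],   # token 0 explains the first two frames well
    [-5.0, -5.0, 0.0, 0.0],   # token 1 explains the last two frames well
]
print(monotonic_alignment_search(log_lik))  # [0, 0, 1, 1]
```

Counting how often each token index appears in the result gives per-token durations, which is how such an alignment can supervise a duration predictor.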

VALL-E (Microsoft)

Neural codec language model approach to TTS:

- Treats TTS as a language modeling problem over discrete audio tokens

- Audio tokenized by neural codec (EnCodec) into multiple codebook streams

- Autoregressive model: Generates first codebook conditioned on text + 3s prompt

- Non-autoregressive model: Generates remaining codebooks in parallel

- Enables zero-shot voice cloning from a 3-second audio prompt

This reframes TTS as in-context learning over audio tokens rather than explicit acoustic modeling. Follow-ups include VALL-E X (cross-lingual), VALL-E 2, VoiceCraft, and BASE TTS.
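
The "multiple codebook streams" come from residual vector quantization: each codebook quantizes whatever error the previous ones left behind. The toy below uses scalars and two hand-picked codebooks purely for illustration; a real codec quantizes learned latent vectors with much larger, trained codebooks.

```python
def nearest_index(value, codebook):
    """Index of the codebook entry closest to value."""
    return min(range(len(codebook)), key=lambda i: abs(value - codebook[i]))


def rvq_encode(value, codebooks):
    """Quantize value stage by stage; return one token per codebook."""
    tokens, residual = [], value
    for codebook in codebooks:
        index = nearest_index(residual, codebook)
        tokens.append(index)
        residual -= codebook[index]  # the next stage only sees the leftover error
    return tokens


def rvq_decode(tokens, codebooks):
    """Sum the selected entries from every codebook."""
    return sum(codebook[i] for codebook, i in zip(codebooks, tokens))


# Hypothetical codebooks: a coarse one followed by a finer one.
CODEBOOKS = [[-1.0, 0.0, 1.0], [-0.25, 0.0, 0.25]]

tokens = rvq_encode(0.8, CODEBOOKS)
print(tokens)                                  # [2, 0]
print(rvq_decode(tokens, CODEBOOKS))           # 0.75
print(rvq_decode(tokens[:1], CODEBOOKS[:1]))   # 1.0 (first codebook only)
```

The first codebook alone already gives a coarse reconstruction, which is one way to see why the model generates it autoregressively and fills in the remaining, finer codebooks in parallel.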

Vocoders

Convert acoustic features (mel-spectrogram) into time-domain waveforms.

WaveNet (DeepMind, 2016)

Autoregressive generative model for raw audio:

- Dilated causal convolutions: Exponentially growing receptive field

- Gated activations: tanh * sigmoid gating per layer

- Conditioning: Global (speaker) and local (mel frames) conditioning

- Produces extremely high-quality audio but very slow (sample-by-sample generation)

Parallel WaveNet uses probability density distillation for real-time synthesis.
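
The dilated causal convolution and gated activation described above can be sketched in plain Python. The function names are chosen for this example, and the dilation pattern 1, 2, 4, ..., 512 with kernel size 2 is an illustrative configuration rather than a claim about any specific released model.

```python
import math


def receptive_field(dilations, kernel_size=2):
    """Number of past-and-present samples one output sample can see."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)


def causal_dilated_conv(x, weights, dilation):
    """y[t] = sum_k weights[k] * x[t - (K-1-k)*dilation], zero-padded on the left."""
    taps = len(weights)
    y = []
    for t in range(len(x)):
        total = 0.0
        for k, w in enumerate(weights):
            source = t - (taps - 1 - k) * dilation
            if source >= 0:          # never look into the future or before the start
                total += w * x[source]
        y.append(total)
    return y


def gated_activation(filter_out, gate_out):
    """tanh(filter) * sigmoid(gate), applied to one pair of pre-activations."""
    return math.tanh(filter_out) * (1.0 / (1.0 + math.exp(-gate_out)))


print(receptive_field([2 ** i for i in range(10)]))         # 1024
print(causal_dilated_conv([1, 2, 3, 4], [1, 1], dilation=2))  # [1.0, 2.0, 4.0, 6.0]
```

Ten layers with doubling dilation cover 1024 samples, whereas ten undilated layers of the same kernel size would cover only 11. That exponential growth is what makes long audio context affordable.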

WaveRNN

Single-layer RNN that generates audio sample by sample, optimized for on-device use with:

- Sparse weight matrices (pruning)

- Subscale prediction (generate multiple samples per step)

- Dual softmax for coarse/fine sample prediction
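
The coarse/fine split replaces one 65,536-way softmax over a 16-bit sample with two 256-way softmaxes. A minimal sketch of the split itself follows; the offset used to map signed samples to unsigned values and the function names are assumptions of this example.

```python
def split_sample(sample_int16):
    """Split a signed 16-bit sample into (coarse, fine) 8-bit class indices."""
    unsigned = sample_int16 + 32768      # map [-32768, 32767] to [0, 65535]
    return unsigned >> 8, unsigned & 0xFF


def merge_sample(coarse, fine):
    """Inverse of split_sample."""
    return ((coarse << 8) | fine) - 32768


print(split_sample(0))                       # (128, 0)
print(merge_sample(*split_sample(-12345)))   # -12345
```

In the model, the coarse byte is predicted first and the fine byte is predicted conditioned on it, so the two softmaxes together still define a distribution over all 16-bit values.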

WaveGlow

Flow-based vocoder (NVIDIA). Parallel generation via invertible 1x1 convolutions and affine coupling layers. It runs faster than real time on a GPU, but the model is large.
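
An affine coupling layer is invertible by construction: half of the input passes through unchanged and parameterizes a scale and shift for the other half. In the sketch below, `toy_net` is a hypothetical stand-in for the conditioning network (which in the real model also sees the mel-spectrogram); only the coupling arithmetic is the point.

```python
import math


def toy_net(xa):
    """Hypothetical stand-in network: returns (log_scale, shift) per element."""
    log_s = [0.5 * math.tanh(v) for v in xa]
    shift = [2.0 * v for v in xa]
    return log_s, shift


def coupling_forward(x):
    """Transform the second half of x; return (y, log-determinant of the Jacobian)."""
    half = len(x) // 2
    xa, xb = x[:half], x[half:]
    log_s, shift = toy_net(xa)
    yb = [b * math.exp(s) + t for b, s, t in zip(xb, log_s, shift)]
    return xa + yb, sum(log_s)


def coupling_inverse(y):
    """Recover x exactly; toy_net is only ever evaluated, never inverted."""
    half = len(y) // 2
    ya, yb = y[:half], y[half:]
    log_s, shift = toy_net(ya)
    xb = [(b - t) * math.exp(-s) for b, s, t in zip(yb, log_s, shift)]
    return ya + xb


x = [0.3, -1.2, 0.7, 2.0]
y, log_det = coupling_forward(x)
x_rec = coupling_inverse(y)
print(max(abs(a - b) for a, b in zip(x, x_rec)) < 1e-9)  # True
```

The log-determinant is just the sum of the log-scales, which keeps exact likelihood training cheap, and the inverse has the same cost as the forward pass, which is what allows parallel sampling.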

HiFi-GAN

GAN-based vocoder, widely used as a default choice for its balance of quality and speed:

- Generator: Transposed convolutions upsample mel to waveform

- Multi-period discriminator (MPD): Evaluates periodic patterns at different periods

- Multi-scale discriminator (MSD): Evaluates audio at multiple resolutions

- Loss: Adversarial + feature matching + mel-spectrogram reconstruction

Fast (real-time on CPU), high quality, small model. Multiple configurations (V1/V2/V3) trade off quality vs speed.
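
The multi-period discriminator's key trick is a reshape: the 1-D waveform is folded into a 2-D grid with `period` columns, so that a convolution running down a column sees samples spaced exactly `period` apart. The sketch below shows only that folding. It zero-pads the tail for simplicity, which is an assumption of this example; the periods listed are the ones reported in the HiFi-GAN paper.

```python
MPD_PERIODS = [2, 3, 5, 7, 11]  # prime periods, so sub-discriminators overlap little


def reshape_for_period(audio, period):
    """Fold 1-D audio into rows of `period` samples, zero-padding the last row."""
    padding = (-len(audio)) % period
    padded = list(audio) + [0.0] * padding
    return [padded[i:i + period] for i in range(0, len(padded), period)]


folded = reshape_for_period([0, 1, 2, 3, 4, 5, 6], period=3)
print(folded)                        # [[0, 1, 2], [3, 4, 5], [6, 0.0, 0.0]]
print([row[0] for row in folded])    # [0, 3, 6]
```

Each column of the folded signal is the original audio subsampled at one phase of the period, so each sub-discriminator specializes in a different periodic structure.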

Other Notable Vocoders

- BigVGAN: Large-scale GAN vocoder with anti-aliased activations, state-of-the-art quality

- Vocos: Fourier-based vocoder, predicts STFT magnitudes and phases directly

- EnCodec/SoundStream: Neural audio codecs doubling as vocoders for codec-based TTS

Voice Cloning

Voice cloning replicates a target speaker's voice characteristics. There are two main approaches.

Speaker Adaptation

Fine-tune a multi-speaker TTS model on target speaker data (minutes to hours):

- Freeze most parameters, adapt speaker embedding or few layers

- Higher quality but requires training

Zero-Shot Cloning

Synthesize in a new voice from a short reference clip without fine-tuning:

- Extract speaker embedding from reference audio (speaker encoder)

- Condition TTS model on this embedding

- VALL-E approach: In-context learning from 3-second prompt

- Quality is improving rapidly but generally still trails fine-tuned adaptation

Ethical Considerations

Voice cloning raises serious concerns around consent, deepfakes, and fraud. Mitigations include watermarking, speaker verification, and synthetic speech detection systems.

Expressive and Controllable TTS

Style Tokens (GST)

Learn a bank of "style tokens" from data. At inference, select or interpolate tokens to control speaking style (happy, sad, whispered) without explicit labels.
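
Selecting or interpolating tokens amounts to taking a weighted sum of the token embeddings. The sketch below assumes two hypothetical 2-dimensional tokens and plain softmax weights; the original model computes the weights with multi-head attention from a reference encoder, which is omitted here.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def style_embedding(tokens, weights):
    """Weighted sum of style-token vectors."""
    dim = len(tokens[0])
    return [sum(w * token[d] for w, token in zip(weights, tokens))
            for d in range(dim)]


# Hypothetical token bank; real tokens are learned and have no preset meaning.
TOKENS = [[1.0, 0.0], [0.0, 1.0]]

print(style_embedding(TOKENS, [0.25, 0.75]))         # [0.25, 0.75]
print(style_embedding(TOKENS, softmax([0.0, 0.0])))  # [0.5, 0.5]
```

At inference the weights can be set by hand (select one token, or blend several) instead of being computed from reference audio, which is what gives label-free style control.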

Reference Encoder

Extract prosodic style from a reference audio clip and transfer it to synthesized speech.

Explicit Control

FastSpeech 2 variance adaptors allow direct manipulation of:

- Pitch contour (raise/lower F0)

- Energy/volume profile

- Speaking rate (duration scaling)
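
Two of these controls reduce to simple arithmetic on the predicted values before they are fed onward. The sketch below assumes an F0 contour in Hz where 0.0 marks unvoiced frames and durations in frames; both conventions and the function names are assumptions of this example rather than a specific toolkit's interface.

```python
def shift_pitch(f0_hz, semitones):
    """Shift an F0 contour by a number of semitones; unvoiced frames stay 0.0."""
    factor = 2.0 ** (semitones / 12.0)
    return [f * factor if f > 0 else 0.0 for f in f0_hz]


def scale_durations(durations, rate):
    """rate > 1 speeds speech up; every phoneme keeps at least one frame."""
    return [max(1, round(d / rate)) for d in durations]


print(shift_pitch([100.0, 0.0, 200.0], semitones=12))  # [200.0, 0.0, 400.0]
print(scale_durations([4, 2, 6], rate=2.0))            # [2, 1, 3]
```

Working in semitones rather than Hz keeps the shift perceptually uniform across low and high voices, since pitch perception is roughly logarithmic in frequency.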

Emotional and Expressive TTS

- Multi-style training with emotion labels

- Prompt-based control in large TTS models (natural language style descriptions)

- Hierarchical prosody modeling (phone, word, utterance level)

Modern systems like Bark, StyleTTS 2, and large-scale models increasingly support fine-grained emotional and stylistic control through natural language prompts or learned latent spaces.