Asymptotic Notation

Asymptotic notation describes the growth rate of functions as the input size approaches infinity, abstracting away constant factors and lower-order terms.

Big-O (O) — Upper Bound

f(n) = O(g(n)) if there exist constants c > 0 and n₀ such that f(n) ≤ c·g(n) for all n ≥ n₀.

Meaning: f grows no faster than g (up to a constant factor). An upper bound on growth.

3n² + 5n + 7 = O(n²)

because 3n² + 5n + 7 ≤ 4n² for all n ≥ 8 (c=4, n₀=8)

Common mistake: O(n²) does NOT mean the algorithm IS Θ(n²). O(n²) includes O(n), O(log n), O(1), etc. It's just an upper bound.

Big-Omega (Ω) — Lower Bound

f(n) = Ω(g(n)) if there exist constants c > 0 and n₀ such that f(n) ≥ c·g(n) for all n ≥ n₀.

Meaning: f grows at least as fast as g. A lower bound on growth.

3n² + 5n + 7 = Ω(n²)

because 3n² + 5n + 7 ≥ 3n² ≥ 3·n² for all n ≥ 1

Big-Theta (Θ) — Tight Bound

f(n) = Θ(g(n)) if f(n) = O(g(n)) AND f(n) = Ω(g(n)).

Meaning: f grows at the same rate as g. A tight bound.

3n² + 5n + 7 = Θ(n²)

because 3n² ≤ 3n² + 5n + 7 ≤ 4n² for large n

Θ is the most informative: It tells you both the upper and lower bound.

Little-o (o) — Strict Upper Bound

f(n) = o(g(n)) if lim_{n→∞} f(n)/g(n) = 0.

Meaning: f grows strictly slower than g. NOT just a constant factor away.

n = o(n²) because n/n² = 1/n → 0

n² ≠ o(n²) because n²/n² = 1 → 1 (not 0)

n log n = o(n²) because n log n / n² = log n / n → 0

Little-omega (ω) — Strict Lower Bound

f(n) = ω(g(n)) if lim_{n→∞} f(n)/g(n) = ∞.

Meaning: f grows strictly faster than g.

n² = ω(n) because n²/n = n → ∞

n² ≠ ω(n²) because n²/n² = 1 (not ∞)

Properties

Transitivity

If f = O(g) and g = O(h), then f = O(h). (Same for Ω, Θ, o, ω.)

Reflexivity

f = O(f), f = Ω(f), f = Θ(f). (NOT for o, ω — strict.)

Symmetry

f = Θ(g) iff g = Θ(f).

Transpose Symmetry

f = O(g) iff g = Ω(f). f = o(g) iff g = ω(f).

Sum Rule

O(f) + O(g) = O(max(f, g)). The dominant term wins.

O(n²) + O(n log n) = O(n²)

O(n) + O(n) = O(n)

Product Rule

O(f) × O(g) = O(f × g).

O(n) × O(log n) = O(n log n)

Common Growth Rates

| Notation | Name | Example |

|---|---|---|

| O(1) | Constant | Hash table lookup, array access |

| O(log n) | Logarithmic | Binary search, balanced BST lookup |

| O(√n) | Square root | Trial division primality test |

| O(n) | Linear | Linear search, single array pass |

| O(n log n) | Linearithmic | Merge sort, FFT |

| O(n²) | Quadratic | Bubble sort, nested loops |

| O(n³) | Cubic | Matrix multiplication, Floyd-Warshall |

| O(n^k) | Polynomial | k nested loops |

| O(2^n) | Exponential | Subset enumeration, brute-force TSP |

| O(n!) | Factorial | Permutation enumeration |

Relative Growth

1 < log n < √n < n < n log n < n² < n³ < 2^n < n! < n^n

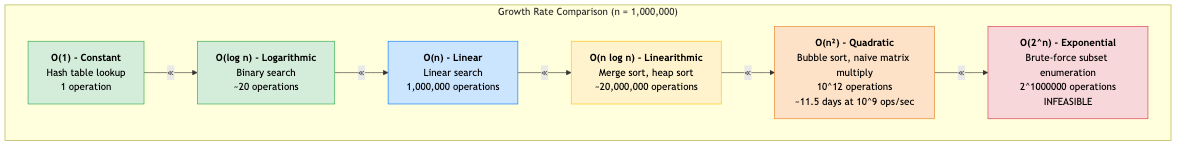

For n = 1,000,000:

| Growth | Operations | Time at 10⁹ ops/sec |

|---|---|---|

| O(1) | 1 | 1 ns |

| O(log n) | 20 | 20 ns |

| O(n) | 10⁶ | 1 ms |

| O(n log n) | 2 × 10⁷ | 20 ms |

| O(n²) | 10¹² | 17 minutes |

| O(n³) | 10¹⁸ | 32 years |

| O(2^n) | 10³⁰¹⁰²⁹ | heat death of universe × 10^(many) |

Limit-Based Definitions

Using limits (easier for complex functions):

f = O(g) ⟺ lim sup_{n→∞} f(n)/g(n) < ∞

f = Ω(g) ⟺ lim inf_{n→∞} f(n)/g(n) > 0

f = Θ(g) ⟺ 0 < lim_{n→∞} f(n)/g(n) < ∞ (if limit exists)

f = o(g) ⟺ lim_{n→∞} f(n)/g(n) = 0

f = ω(g) ⟺ lim_{n→∞} f(n)/g(n) = ∞

L'Hôpital's rule is often useful for computing these limits.

Logarithmic Properties

In asymptotic analysis, the base of logarithms doesn't matter:

log_a(n) = log_b(n) / log_b(a) = Θ(log_b(n))

All logarithmic bases differ by a constant factor → same asymptotic class.

By convention, log n in CS means log₂ n (unless stated otherwise).

Useful identities:

log(ab) = log a + log b

log(a^k) = k log a

log(n!) = Θ(n log n) (Stirling's approximation)

log(C(n,k)) = Θ(k log(n/k)) (for k ≤ n/2)

Polynomial Hierarchy

For polynomial functions f(n) = aₖn^k + ... + a₁n + a₀:

f(n) = Θ(n^k) (the leading term dominates)

All lower-order terms are absorbed. The leading coefficient is absorbed by Θ.

Common Pitfalls

-

O is an upper bound, not a tight bound: Saying "this algorithm is O(n²)" when it's actually Θ(n) is technically correct but misleading. Use Θ when you know both bounds.

-

Dropping important terms too early: O(n² + n) = O(n²) is correct, but if the constant on n is much larger than on n², the n term may dominate for practical input sizes.

-

Ignoring constants: O(10⁹ × n) = O(n), but 10⁹n operations may be impractical even for small n. Constants matter in practice.

-

Base of exponent DOES matter: O(2^n) ≠ O(3^n). Unlike logarithms, exponential bases are NOT equivalent.

-

Amortized ≠ Average: Amortized O(1) is a worst-case guarantee over a sequence. Average O(1) is expected over random inputs. Different concepts.

Applications in CS

- Algorithm comparison: Choose between algorithms based on asymptotic complexity for the expected input size.

- Scalability analysis: Will this algorithm work when input grows 10×? 100×? 1000×?

- Interview preparation: Analyzing and stating complexity is a core interview skill.

- System design: Choose data structures and algorithms based on growth requirements.

- Complexity theory: P, NP, EXPTIME — all defined in terms of asymptotic resource bounds.