Complexity Classes

This file provides a more detailed treatment of complexity classes beyond the introduction in the automata topic, covering probabilistic and counting classes.

Deterministic Classes

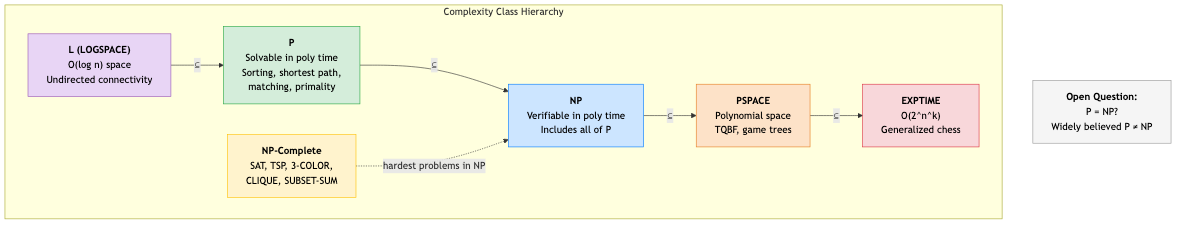

P

P = ⋃ₖ TIME(nᵏ)

Decidable in polynomial time. "Efficiently solvable."

EXPTIME

EXPTIME = ⋃ₖ TIME(2^(nᵏ))

P ⊊ EXPTIME (provable by the time hierarchy theorem).

PSPACE

PSPACE = ⋃ₖ SPACE(nᵏ)

Problems solvable using polynomial space (but possibly exponential time).

PSPACE = NPSPACE (Savitch's theorem: nondeterministic space S can be simulated in deterministic space O(S²)).

P ⊆ NP ⊆ PSPACE ⊆ EXPTIME.

PSPACE-complete: TQBF, generalized games (chess, Go on n×n board), some planning problems.

L and NL

L = SPACE(log n)

NL = NSPACE(log n)

L-complete: Undirected graph connectivity (reachability).

NL-complete: Directed s-t connectivity (PATH). NL = coNL (Immerman-Szelepcsenyi).

Nondeterministic Classes

NP and coNP

NP: Languages with polynomial-time verifiers (short certificates for YES instances).

coNP: Languages with polynomial-time refuters (short certificates for NO instances).

NP ∩ coNP: Problems with short certificates for both YES and NO. Includes P.

Examples in NP ∩ coNP (but not known to be in P):

- Integer factoring: YES certificate = factors. NO certificate = primality proof.

- Linear programming: Both feasible solutions and infeasibility certificates are short. (Actually in P — Khachiyan, 1979.)

Probabilistic Classes

BPP (Bounded-Error Probabilistic Polynomial)

BPP = {L : ∃ probabilistic poly-time TM M,

x ∈ L → P(M accepts x) ≥ 2/3

x ∉ L → P(M rejects x) ≥ 2/3}

Two-sided error: Can make mistakes in both directions (YES and NO), but with bounded probability.

Error reduction: Run M multiple times, take majority vote. Error probability drops exponentially: k runs → error ≤ 2^(-Ω(k)).

Conjectured: BPP = P (randomness doesn't help for decision problems, assuming strong enough pseudorandom generators exist). Evidence: many BPP algorithms have been derandomized.

Examples in BPP: Polynomial identity testing (randomized), primality testing (Miller-Rabin — now also in P via AKS, but BPP algorithm was known first).

RP (Randomized Polynomial)

RP = {L : ∃ probabilistic poly-time TM M,

x ∈ L → P(M accepts x) ≥ 1/2

x ∉ L → P(M rejects x) = 1}

One-sided error: If M says YES, x might not be in L (false positive). If M says NO, x is definitely not in L (no false negatives).

coRP: Symmetric — no false positives, possible false negatives.

RP ⊆ NP ∩ BPP and coRP ⊆ coNP ∩ BPP.

ZPP (Zero-Error Probabilistic Polynomial)

ZPP = RP ∩ coRP

Always gives the correct answer. Expected polynomial time, but might run longer.

Alternatively: Las Vegas algorithms — always correct, expected poly-time.

Counting Classes

#P

The class of counting problems associated with NP decision problems.

#P: Count the number of accepting paths of a nondeterministic polynomial-time TM.

Examples:

- #SAT: How many satisfying assignments does a formula have?

- #MATCHING: How many perfect matchings does a graph have?

- Permanent of a 0/1 matrix: #P-complete (Valiant).

#P is hard: Even for problems where the decision version is in P. Counting perfect matchings in bipartite graphs is #P-complete, but deciding if one exists is in P!

Polynomial Hierarchy (PH)

Generalization of NP/coNP to multiple levels of quantifier alternation.

Σ₀ᴾ = Π₀ᴾ = P

Σ₁ᴾ = NP, Π₁ᴾ = coNP

Σ₂ᴾ = NP^NP (NP with NP oracle)

Π₂ᴾ = coNP^NP

Σₖᴾ = NP^(Σ_{k-1}^P)

PH = ⋃ₖ Σₖᴾ

PH ⊆ PSPACE. If PH collapses (some Σₖ = Σₖ₊₁), it's considered "unlikely" (analogous to P = NP).

Σ₂ᴾ-complete example: Given a Boolean circuit, does there exist an input for which the output is 1 for ALL possible values of a second set of inputs?

∃x ∀y: φ(x, y) = 1 (Σ₂ᴾ)

∀x ∃y: φ(x, y) = 1 (Π₂ᴾ)

Oracle Machines

A TM with access to an oracle — a black box that instantly answers membership queries for a language.

P^A: P with oracle for A. Can query "is x ∈ A?" in one step.

NP^SAT = Σ₂ᴾ: NP with a SAT oracle.

Relativization

Many proof techniques fail to separate P from NP because they relativize — they work the same way with any oracle.

Baker-Gill-Solovay (1975): There exists an oracle A where P^A = NP^A, and an oracle B where P^B ≠ NP^B. Therefore, any proof separating P from NP must use non-relativizing techniques.

This eliminates simple diagonalization and simulation as proof strategies for P vs NP.

Algebrization (Aaronson-Wigderson, 2009)

A stronger barrier than relativization. Even techniques that use algebraic extensions (like IP = PSPACE proof) are blocked from resolving P vs NP.

Natural Proofs (Razborov-Rudich, 1997)

If one-way functions exist (a standard cryptographic assumption), then "natural" proof strategies cannot prove superpolynomial circuit lower bounds (and thus can't separate P from NP via circuit complexity).

These three barriers (relativization, algebrization, natural proofs) explain why P vs NP is so hard to resolve.

Containment Summary

L ⊆ NL ⊆ P ⊆ RP ⊆ BPP

⊆ NP ⊆ PH ⊆ PSPACE = NPSPACE ⊆ EXPTIME

P ⊆ coRP ⊆ BPP

⊆ coNP ⊆ PH

ZPP = RP ∩ coRP

Known strict: L ⊊ PSPACE, P ⊊ EXPTIME, NL ⊊ PSPACE

Conjectured: P ⊊ NP ⊊ PSPACE ⊊ EXPTIME, BPP = P

Applications in CS

- Cryptography: Security assumptions (one-way functions exist iff P ≠ NP, roughly speaking). Factoring, discrete log, lattice problems — their assumed hardness powers modern cryptography.

- Algorithm design: Knowing a problem is NP-hard tells you to seek approximation, heuristics, or parameterized algorithms.

- Randomized algorithms: BPP/RP tell us when randomness helps. ZPP tells us when we can get the best of both worlds.

- Counting problems: #P-hardness arises in statistical physics, Bayesian inference, and network reliability.

- Theory: Understanding the barrier results helps focus research on approaches that might actually work for P vs NP.