Recurrence Relations

Recurrence relations express the running time of recursive algorithms. Solving them gives closed-form complexity bounds.

Methods for Solving Recurrences

Substitution Method

Guess the solution, then prove it correct by induction.

Example: T(n) = 2T(n/2) + n. Guess T(n) = O(n log n).

Prove: Assume T(k) ≤ ck log k for all k < n.

T(n) = 2T(n/2) + n

≤ 2·c(n/2)log(n/2) + n

= cn(log n - 1) + n

= cn log n - cn + n

≤ cn log n (for c ≥ 1)

So T(n) ≤ cn log n = O(n log n). ✓

Tips: If the guess is wrong, the induction will fail. Adjust the guess. Sometimes need to subtract lower-order terms for the proof to work (e.g., prove T(n) ≤ cn log n - dn).

Recursion Tree Method

Draw the recursion tree, sum the work at each level.

Example: T(n) = 2T(n/2) + n.

Level 0: n work = n

Level 1: n/2 n/2 work = n

Level 2: n/4 n/4 n/4 n/4 work = n

...

Level k: 2^k nodes, each n/2^k work = n

...

Level log n: n nodes, each O(1) work = n

Total levels: log n + 1

Total work: n × (log n + 1) = O(n log n)

Example: T(n) = 3T(n/4) + n².

Level 0: n² work = n²

Level 1: 3 × (n/4)² work = 3n²/16

Level 2: 9 × (n/16)² work = 9n²/256 = (3/16)² × n²

...

Level k: 3^k × (n/4^k)² work = (3/16)^k × n²

Geometric series: n² × Σ (3/16)^k = n² × O(1) = O(n²)

The sum is dominated by the root (ratio 3/16 < 1), so T(n) = Θ(n²).

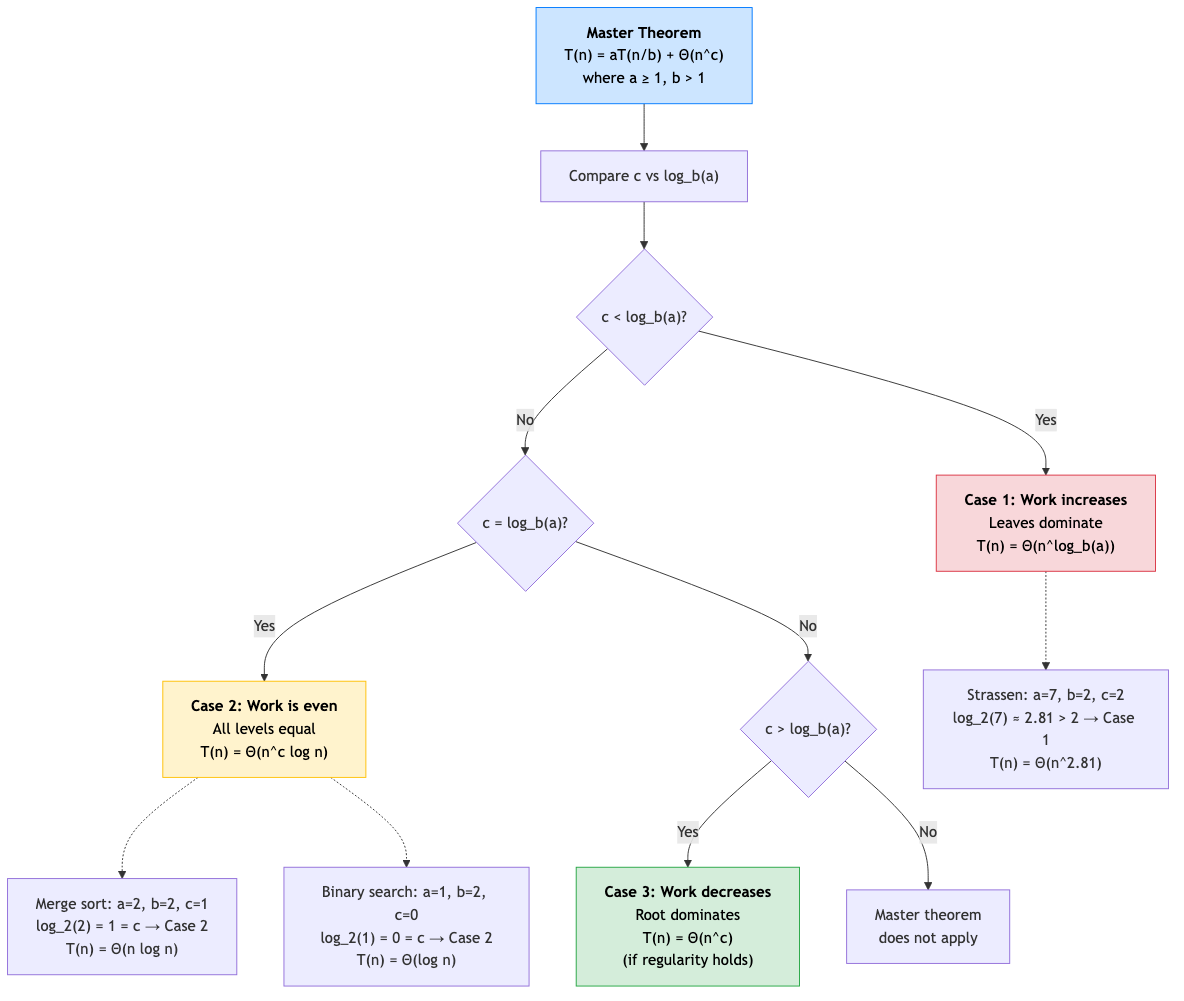

Master Theorem

For recurrences T(n) = aT(n/b) + f(n) with a ≥ 1, b > 1:

Let c_crit = log_b(a).

Case 1: If f(n) = O(n^(c_crit - ε)) for some ε > 0:

T(n) = Θ(n^c_crit)

Work dominated by the leaves. Recursive calls dominate.

Case 2: If f(n) = Θ(n^c_crit × log^k n) for some k ≥ 0:

T(n) = Θ(n^c_crit × log^(k+1) n)

Work is evenly distributed across levels.

Case 3: If f(n) = Ω(n^(c_crit + ε)) for some ε > 0, and af(n/b) ≤ δf(n) for some δ < 1 (regularity condition):

T(n) = Θ(f(n))

Work dominated by the root. Combine step dominates.

Master Theorem Examples

| Recurrence | a | b | c_crit | f(n) | Case | Result |

|---|---|---|---|---|---|---|

| T(n) = 2T(n/2) + n | 2 | 2 | 1 | n = Θ(n¹) | 2 (k=0) | Θ(n log n) |

| T(n) = 2T(n/2) + 1 | 2 | 2 | 1 | 1 = O(n^0) | 1 | Θ(n) |

| T(n) = 2T(n/2) + n² | 2 | 2 | 1 | n² = Ω(n^(1+1)) | 3 | Θ(n²) |

| T(n) = 4T(n/2) + n | 4 | 2 | 2 | n = O(n^(2-1)) | 1 | Θ(n²) |

| T(n) = 7T(n/2) + n² | 7 | 2 | 2.807 | n² = O(n^(2.807-0.8)) | 1 | Θ(n^2.807) |

| T(n) = 2T(n/2) + n log n | 2 | 2 | 1 | n log n = Θ(n¹ log¹ n) | 2 (k=1) | Θ(n log² n) |

| T(n) = T(n/2) + 1 | 1 | 2 | 0 | 1 = Θ(n⁰) | 2 (k=0) | Θ(log n) |

| T(n) = T(n/2) + n | 1 | 2 | 0 | n = Ω(n^(0+1)) | 3 | Θ(n) |

Master Theorem Gaps

The master theorem doesn't cover all cases:

- f(n) = n log n with a = 2, b = 2: f = Θ(n log n) → Case 2 with k=1.

- f(n) = n / log n: Falls between cases 1 and 2. Not covered.

- a depends on n (not constant): Not covered.

- T(n) = T(n/3) + T(2n/3) + n: Different subproblem sizes. Not covered.

For these, use Akra-Bazzi or recursion tree.

Akra-Bazzi Method

Handles recurrences with different subproblem sizes:

T(n) = Σᵢ aᵢ T(n/bᵢ) + f(n)

Step 1: Find p such that Σᵢ aᵢ/bᵢ^p = 1.

Step 2: T(n) = Θ(n^p × (1 + ∫₁ⁿ f(u)/u^(p+1) du)).

Example: T(n) = T(n/3) + T(2n/3) + n.

Step 1: Solve 1/3^p + (2/3)^p = 1. Solution: p = 1.

Step 2: T(n) = Θ(n × (1 + ∫₁ⁿ u/u² du)) = Θ(n × (1 + ln n)) = Θ(n log n).

Change of Variables

Transform the recurrence into a standard form.

Example: T(n) = 2T(√n) + log n.

Let m = log n (so n = 2^m and √n = 2^(m/2)).

S(m) = T(2^m) = 2T(2^(m/2)) + m = 2S(m/2) + m.

By master theorem: S(m) = Θ(m log m).

Back-substitute: T(n) = Θ(log n × log log n).

Generating Functions (Advanced)

Solve recurrences using generating functions — multiply by x^n, sum over n, solve the resulting equation.

Example: Fibonacci: F(n) = F(n-1) + F(n-2), F(0) = 0, F(1) = 1.

Generating function: F(x) = x/(1 - x - x²).

Partial fractions → closed form: F(n) = (φⁿ - ψⁿ)/√5 where φ = (1+√5)/2.

Common Algorithm Recurrences

| Algorithm | Recurrence | Solution |

|---|---|---|

| Binary search | T(n) = T(n/2) + O(1) | O(log n) |

| Merge sort | T(n) = 2T(n/2) + O(n) | O(n log n) |

| Quicksort (best/avg) | T(n) = 2T(n/2) + O(n) | O(n log n) |

| Quicksort (worst) | T(n) = T(n-1) + O(n) | O(n²) |

| Strassen | T(n) = 7T(n/2) + O(n²) | O(n^2.807) |

| Karatsuba | T(n) = 3T(n/2) + O(n) | O(n^1.585) |

| Tower of Hanoi | T(n) = 2T(n-1) + 1 | O(2ⁿ) |

| Selection (worst) | T(n) = T(n/5) + T(7n/10) + O(n) | O(n) |

| FFT | T(n) = 2T(n/2) + O(n) | O(n log n) |

Applications in CS

- Algorithm design: Recurrences arise naturally from divide-and-conquer. Solving them determines the algorithm's complexity.

- Data structures: Balanced BST operations have recurrence T(n) = T(n/2) + O(1) → O(log n).

- Parallel algorithms: Work and span recurrences determine parallel efficiency.

- Competitive programming: Quick recurrence analysis (usually via master theorem) is essential for choosing algorithms.