AI Foundations

History of AI

| Era | Key Developments |
|---|---|
| 1950s | Turing Test (1950). Dartmouth Conference (1956), which gave the field the name "artificial intelligence". Early optimism. |
| 1960s-70s | ELIZA, SHRDLU, early expert systems. First AI winter (mid-to-late 1970s). |
| 1980s | Expert systems boom. Backpropagation popularized (1986). Second AI winter (late 1980s to early 1990s). |
| 1990s | Statistical methods rise. IBM Deep Blue beats Kasparov (1997). |
| 2000s | Machine learning dominance: SVMs, random forests, Bayesian methods. |
| 2010s | Deep learning revolution: AlexNet wins ImageNet (2012), AlphaGo beats Lee Sedol (2016), GPT and BERT (2018-19). |
| 2020s | Large language models (GPT-3/4, Claude), diffusion models, foundation models. AI becomes mainstream. |

Turing Test

Imitation game (Turing, 1950): A human evaluator converses with a machine and a human (text-only). If the evaluator can't reliably distinguish the machine from the human, the machine passes.

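Limitations: It tests conversational imitation, not intelligence itself, and a machine could pass without genuine understanding. Modern LLMs come close to passing informal versions of the test, which has sharpened the debate over what passing would actually demonstrate.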

Intelligent Agents

An agent perceives its environment through sensors and acts upon it through actuators.

[Environment] → [Sensors] → Perception → Decision → Action → [Actuators] → [Environment] (the cycle repeats)

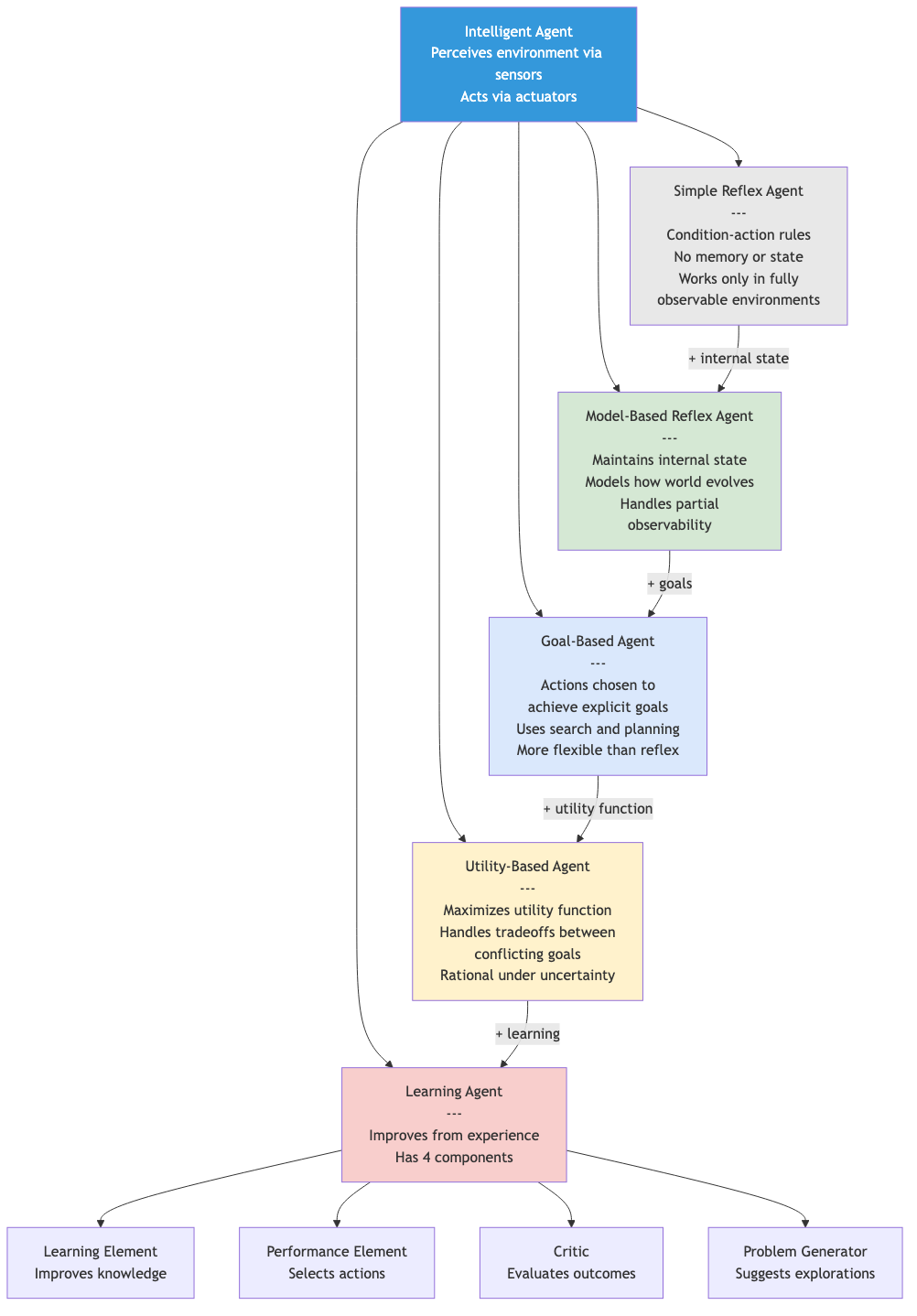
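The perceive-decide-act cycle can be sketched as a plain loop. This is a minimal sketch assuming the classic two-square "vacuum world" toy environment (squares `A` and `B`, each `Clean` or `Dirty`); `VacuumWorld`, `run`, and the action names are hypothetical, chosen only for illustration.

```python
from typing import Callable

# Hypothetical percept/action types for a two-square vacuum world.
Percept = tuple[str, str]  # (location, status), e.g. ("A", "Dirty")
Action = str               # "Suck", "Left", "Right", or "NoOp"


class VacuumWorld:
    """Hypothetical environment: squares A and B, each Clean or Dirty."""

    def __init__(self, status: dict[str, str], location: str = "A") -> None:
        self.status = dict(status)
        self.location = location

    def percept(self) -> Percept:
        # Sensors: the agent only perceives the square it is on.
        return (self.location, self.status[self.location])

    def execute(self, action: Action) -> None:
        # Actuators: apply the chosen action to the world.
        if action == "Suck":
            self.status[self.location] = "Clean"
        elif action == "Right":
            self.location = "B"
        elif action == "Left":
            self.location = "A"
        # "NoOp" leaves the world unchanged.


def run(env: VacuumWorld, agent: Callable[[Percept], Action], steps: int) -> list[Action]:
    """Repeat perceive -> decide -> act for a fixed number of steps."""
    history = []
    for _ in range(steps):
        percept = env.percept()  # perception via sensors
        action = agent(percept)  # decision by the agent function
        env.execute(action)      # action via actuators
        history.append(action)
    return history


# Usage with a trivial agent that ignores its percept and always sucks.
world = VacuumWorld({"A": "Dirty", "B": "Dirty"})
actions = run(world, lambda p: "Suck", steps=2)
print(actions, world.status)
```

Passing a different agent function to `run` swaps the decision step while the sensors and actuators stay fixed, which is the separation the diagram describes.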

Agent Types

Simple reflex: Condition-action rules. No memory. "If sensor reads X, do Y."
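A simple reflex agent is just a function from the current percept to an action. A minimal sketch, assuming the classic two-square vacuum world where a percept is `(location, status)` with location `A` or `B`; the function name and action strings are hypothetical.

```python
def reflex_vacuum_agent(percept: tuple[str, str]) -> str:
    """Simple reflex agent: maps the current percept directly to an action."""
    location, status = percept
    # Condition-action rules; nothing is remembered between calls.
    if status == "Dirty":
        return "Suck"
    if location == "A":
        return "Right"
    return "Left"


print(reflex_vacuum_agent(("A", "Dirty")))  # Suck
print(reflex_vacuum_agent(("A", "Clean")))  # Right
print(reflex_vacuum_agent(("B", "Clean")))  # Left
```

Because it has no memory, this agent cannot tell that both squares are already clean, so it keeps shuttling between them indefinitely.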

Model-based reflex: Maintains internal state (model of the world). Handles partially observable environments.
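Adding internal state lets the agent act on what it cannot currently see. A minimal sketch for the same hypothetical two-square vacuum world (percept `(location, status)`), assuming squares stay clean once cleaned; the class name and its `model` dictionary are illustrative, not a standard API.

```python
from typing import Optional


class ModelBasedVacuumAgent:
    """Model-based reflex agent for a hypothetical two-square vacuum world."""

    def __init__(self) -> None:
        # Internal model: last known status of each square (None = unknown).
        self.model: dict[str, Optional[str]] = {"A": None, "B": None}

    def __call__(self, percept: tuple[str, str]) -> str:
        location, status = percept
        # Update internal state from the new percept.
        self.model[location] = status
        if status == "Dirty":
            # Transition model: record the predicted effect of sucking.
            self.model[location] = "Clean"
            return "Suck"
        # Both squares believed clean: stop instead of wandering.
        if self.model["A"] == "Clean" and self.model["B"] == "Clean":
            return "NoOp"
        return "Right" if location == "A" else "Left"


agent = ModelBasedVacuumAgent()
print(agent(("A", "Dirty")))  # Suck
print(agent(("A", "Clean")))  # Right
print(agent(("B", "Clean")))  # NoOp
```

Unlike a memoryless reflex agent, the remembered status of square `A` lets it stop once it has observed both squares clean.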

Goal-based: Actions chosen to achieve goals. Can plan ahead. Uses search/planning algorithms.
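A goal-based agent can plan by searching for an action sequence that reaches a goal state, then executing it. The sketch below uses breadth-first search, which is one possible choice (the text does not prescribe an algorithm), on a hypothetical 3x3 grid with one blocked cell; `bfs_plan`, `grid_successors`, `BLOCKED`, and `SIZE` are illustrative names.

```python
from collections import deque
from collections.abc import Callable, Hashable, Iterable
from typing import Optional


def bfs_plan(
    start: Hashable,
    is_goal: Callable[[Hashable], bool],
    successors: Callable[[Hashable], Iterable[tuple[str, Hashable]]],
) -> Optional[list[str]]:
    """Breadth-first search for a fewest-steps action sequence to a goal."""
    frontier = deque([start])
    parent: dict = {start: None}  # state -> (previous state, action); None for start
    while frontier:
        state = frontier.popleft()
        if is_goal(state):
            # Follow parent links back to the start to recover the plan.
            plan = []
            while parent[state] is not None:
                prev, action = parent[state]
                plan.append(action)
                state = prev
            return plan[::-1]
        for action, nxt in successors(state):
            if nxt not in parent:  # skip states already reached
                parent[nxt] = (state, action)
                frontier.append(nxt)
    return None  # goal unreachable


SIZE = 3
BLOCKED = {(1, 1)}  # hypothetical wall in the centre of the grid


def grid_successors(pos):
    r, c = pos
    for action, (dr, dc) in (("Right", (0, 1)), ("Down", (1, 0)), ("Left", (0, -1)), ("Up", (-1, 0))):
        nr, nc = r + dr, c + dc
        if 0 <= nr < SIZE and 0 <= nc < SIZE and (nr, nc) not in BLOCKED:
            yield action, (nr, nc)


plan = bfs_plan((0, 0), lambda s: s == (2, 2), grid_successors)
print(plan)  # ['Right', 'Right', 'Down', 'Down']
```

The agent looks ahead over whole action sequences rather than reacting to a single percept; the returned plan is then executed step by step.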

Utility-based: Maximizes a utility function. Handles tradeoffs between conflicting goals. Rational decision-making under uncertainty.
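Choosing by expected utility can be sketched in a few lines. The route-choice scenario, the outcome probabilities, and the linear utility weights are all hypothetical; the point is that the agent ranks actions by probability-weighted utility, so how it trades off time against money determines its decision.

```python
# Hypothetical actions, each with (probability, outcome) pairs.
OUTCOMES = {
    "highway": [(0.8, {"minutes": 20, "toll": 5}), (0.2, {"minutes": 60, "toll": 5})],
    "backroad": [(1.0, {"minutes": 35, "toll": 0})],
}


def utility(outcome: dict, minute_weight: float = 0.5, toll_weight: float = 1.0) -> float:
    # Assumed linear utility: time and money are both costs, traded off by weights.
    return -(minute_weight * outcome["minutes"] + toll_weight * outcome["toll"])


def expected_utility(action: str, **weights: float) -> float:
    # Sum of utility over outcomes, weighted by their probabilities.
    return sum(p * utility(o, **weights) for p, o in OUTCOMES[action])


def choose(**weights: float) -> str:
    # Rational choice: the action with maximum expected utility.
    return max(OUTCOMES, key=lambda a: expected_utility(a, **weights))


print(choose())                  # backroad
print(choose(minute_weight=1.0)) # highway
```

With the default weights the back road wins (expected utility -17.5 vs -19); valuing a minute at 1.0 instead flips the choice to the highway (-33 vs -35).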

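Learning agent: Improves performance through experience. Components: performance element (selects actions), learning element (improves the performance element), critic (evaluates behavior against a performance standard), problem generator (suggests exploratory actions).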
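One way to see the four components working together is a small epsilon-greedy multi-armed bandit learner. This is a swapped-in illustration, not a canonical design: the class, its method names, and the fixed rewards returned by `critic` are all hypothetical.

```python
import random


class BanditLearningAgent:
    """Learning-agent sketch on a k-armed bandit (illustrative mapping only)."""

    def __init__(self, n_actions: int, epsilon: float = 0.1, seed: int = 0) -> None:
        self.estimates = [0.0] * n_actions  # learned knowledge: estimated reward per action
        self.counts = [0] * n_actions
        self.epsilon = epsilon
        self.rng = random.Random(seed)

    def problem_generator(self) -> int:
        # Suggests an exploratory action to gather new experience.
        return self.rng.randrange(len(self.estimates))

    def performance_element(self) -> int:
        # Acts greedily on current knowledge (ties go to the lowest index).
        return max(range(len(self.estimates)), key=lambda a: self.estimates[a])

    def act(self) -> int:
        if self.rng.random() < self.epsilon:
            return self.problem_generator()
        return self.performance_element()

    def learning_element(self, action: int, feedback: float) -> None:
        # Incremental mean: move the estimate toward the observed feedback.
        self.counts[action] += 1
        self.estimates[action] += (feedback - self.estimates[action]) / self.counts[action]


def critic(action: int) -> float:
    # Hypothetical fixed rewards standing in for a performance standard.
    return [0.2, 0.8, 0.5][action]


agent = BanditLearningAgent(n_actions=3)
for _ in range(500):
    a = agent.act()
    agent.learning_element(a, critic(a))
print(agent.estimates, agent.performance_element())
```

Without the problem generator the agent would lock onto whichever action it tried first; occasional exploration is what lets the critic's feedback reveal that action 1 is best.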

Environment Types

| Property | Options | Example |
|---|---|---|
| Observable | Fully / Partially | Chess (full) vs poker (partial) |
| Deterministic | Deterministic / Stochastic | Chess vs backgammon |
| Episodic | Episodic / Sequential | Image classification vs chess |
| Static | Static / Dynamic | Crossword vs driving |
| Discrete | Discrete / Continuous | Chess vs robotics |
| Agents | Single / Multi | Puzzle vs auction |

Rationality

A rational agent selects actions that maximize its expected performance measure, given its percept sequence and built-in knowledge.

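Rationality ≠ omniscience: the agent cannot know the actual outcomes of its actions in advance. Rationality ≠ perfection: it chooses the action that is best in expectation given the available information, which can still turn out badly. Rational agents also gather information (explore) and learn, to improve future performance.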

Problem-Solving Agents

Formulate goals → formulate problems → search for solutions → execute.

Problem definition: Initial state, actions, transition model, goal test, path cost.

Solution: Sequence of actions from initial state to a goal state.

This is the foundation for the search algorithms covered in subsequent files.
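The five components of a problem definition map naturally onto methods of a small class. A minimal sketch, assuming a hypothetical road map with made-up cities and driving costs; `RouteProblem` and `path_cost` are illustrative names, and `path_cost` only checks and prices a candidate solution rather than searching for one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RouteProblem:
    """Five-component problem definition on a hypothetical weighted road map."""
    initial: str                       # initial state
    goal: str
    roads: dict[str, dict[str, float]]  # roads[a][b] = cost of driving a -> b

    def actions(self, state: str) -> list[str]:
        # An action is "drive to neighbouring city X", named by its destination.
        return sorted(self.roads.get(state, {}))

    def result(self, state: str, action: str) -> str:
        # Transition model: driving to X puts the agent in X.
        return action

    def goal_test(self, state: str) -> bool:
        return state == self.goal

    def step_cost(self, state: str, action: str) -> float:
        return self.roads[state][action]


def path_cost(problem: RouteProblem, plan: list[str]) -> Optional[float]:
    """Return the plan's total cost if it is a valid solution, else None."""
    state, cost = problem.initial, 0.0
    for action in plan:
        if action not in problem.actions(state):
            return None  # illegal action in this state
        cost += problem.step_cost(state, action)
        state = problem.result(state, action)
    return cost if problem.goal_test(state) else None


ROADS = {"A": {"B": 2.0, "C": 5.0}, "B": {"C": 1.0, "D": 6.0}, "C": {"D": 2.0}}
problem = RouteProblem("A", "D", ROADS)
print(path_cost(problem, ["B", "C", "D"]))  # 5.0
print(path_cost(problem, ["C", "D"]))       # 7.0
print(path_cost(problem, ["B"]))            # None (does not reach the goal)
```

Several action sequences can be solutions; the path cost is what lets a search algorithm prefer the cheapest one.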

Applications in CS

- Autonomous systems: Self-driving cars (perception → planning → control), drones, robots.

- Natural language: Translation, summarization, question answering, chatbots, code generation.

- Computer vision: Object detection, facial recognition, medical imaging, autonomous driving.

- Game AI: Game-playing agents (chess, Go, poker, StarCraft), procedural content generation.

- Decision support: Recommendation systems, fraud detection, medical diagnosis, legal analysis.