Compiler Overview

A compiler translates source code in one language into equivalent code in another language (typically machine code). Understanding compilers deepens understanding of programming languages, performance, and system design.

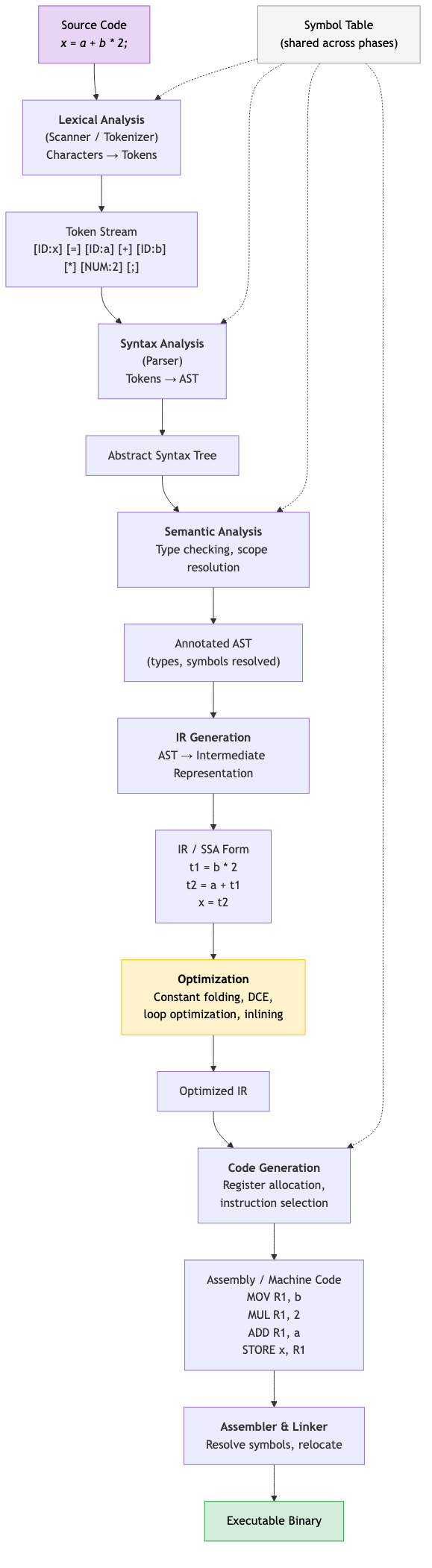

Compiler Phases

Source Code

↓

[Lexical Analysis] → Token stream

↓

[Syntax Analysis] → Parse tree / AST

↓

[Semantic Analysis] → Annotated AST (types, scopes resolved)

↓

[Intermediate Code Gen] → IR (e.g., SSA, three-address code)

↓

[Optimization] → Optimized IR

↓

[Code Generation] → Target code (assembly / machine code)

↓

[Assembly / Linking] → Executable
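
As an illustration of the intermediate code generation phase, the following minimal sketch lowers an expression tree to three-address code. The nested-tuple tree encoding, the `lower_to_tac` name, and the `t1`, `t2`, ... temporary naming are assumptions made for this example, not a format any particular compiler uses.

```python
from itertools import count

def lower_to_tac(expr):
    """Lower a nested-tuple expression into three-address code.

    `expr` is an int, a variable name (str), or a tuple (op, left, right).
    Returns (instructions, name_of_result).
    """
    temps = count(1)
    code = []

    def visit(node):
        if isinstance(node, (int, str)):
            return str(node)              # leaves are already valid operands
        op, left, right = node
        lhs = visit(left)
        rhs = visit(right)
        dest = f"t{next(temps)}"          # one fresh temporary per operation
        code.append(f"{dest} = {lhs} {op} {rhs}")
        return dest

    result = visit(expr)
    return code, result

# a + b * 2
tac, result = lower_to_tac(("+", "a", ("*", "b", 2)))
for line in tac:
    print(line)
```

Each emitted instruction has at most one operator and two operands, which is what makes this form convenient for later optimization passes.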

Front End

Language-dependent. Handles syntax and semantics of the source language.

- Lexical analysis: Characters → tokens

- Syntax analysis: Tokens → parse tree / AST

- Semantic analysis: Type checking, name resolution, scope analysis
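
The first two front-end steps can be sketched as a small regex-based lexer followed by a recursive-descent parser. The token kinds (`NUM`, `ID`, `OP`), the grammar, and the tuple-shaped AST are assumptions chosen for this example; semantic analysis is left out to keep it short.

```python
import re

TOKEN_SPEC = [
    ("NUM", r"\d+"),
    ("ID", r"[A-Za-z_]\w*"),
    ("OP", r"[+\-*/()]"),
    ("SKIP", r"\s+"),
]
MASTER = re.compile("|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC))

def tokenize(text):
    """Lexical analysis: characters -> list of (kind, text) tokens."""
    tokens, pos = [], 0
    while pos < len(text):
        match = MASTER.match(text, pos)
        if match is None:
            raise SyntaxError(f"unexpected character {text[pos]!r} at {pos}")
        if match.lastgroup != "SKIP":          # whitespace produces no token
            tokens.append((match.lastgroup, match.group()))
        pos = match.end()
    return tokens

def parse(tokens):
    """Syntax analysis: tokens -> nested-tuple AST."""
    pos = 0

    def peek():
        return tokens[pos] if pos < len(tokens) else ("EOF", "")

    def advance():
        nonlocal pos
        token = peek()
        pos += 1
        return token

    def expr():                                # expr := term (("+" | "-") term)*
        node = term()
        while peek() in (("OP", "+"), ("OP", "-")):
            op = advance()[1]
            node = (op, node, term())
        return node

    def term():                                # term := atom (("*" | "/") atom)*
        node = atom()
        while peek() in (("OP", "*"), ("OP", "/")):
            op = advance()[1]
            node = (op, node, atom())
        return node

    def atom():                                # atom := NUM | ID | "(" expr ")"
        kind, text = advance()
        if kind == "NUM":
            return int(text)
        if kind == "ID":
            return text
        if (kind, text) == ("OP", "("):
            node = expr()
            if advance() != ("OP", ")"):
                raise SyntaxError("expected ')'")
            return node
        raise SyntaxError(f"unexpected token {text!r}")

    tree = expr()
    if peek()[0] != "EOF":
        raise SyntaxError(f"unexpected token {peek()[1]!r}")
    return tree

tokens = tokenize("a + b * (2 - c)")
tree = parse(tokens)
```

Operator precedence falls out of the grammar: `term` is nested inside `expr`, so `*` and `/` bind tighter than `+` and `-`.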

Middle End (Optimizer)

Largely language-independent and target-independent. Transforms IR to improve performance.

- Constant folding, dead code elimination, loop optimization, inlining
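
Constant folding is the simplest of these to show. The sketch below works on an assumed nested-tuple expression form `(op, left, right)` and only handles `+`, `-`, and `*`; division is left out so the example does not need to deal with division by zero at compile time.

```python
import operator

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}

def fold_constants(node):
    """Replace operations whose operands are both constants with their value."""
    if not isinstance(node, tuple):
        return node                       # a constant or a variable name
    op, left, right = node
    left = fold_constants(left)           # fold children first (bottom-up)
    right = fold_constants(right)
    if isinstance(left, int) and isinstance(right, int):
        return OPS[op](left, right)       # computed once, at compile time
    return (op, left, right)

# x + 2 * (3 + 4)
folded = fold_constants(("+", "x", ("*", 2, ("+", 3, 4))))
```

Because children are folded before their parent, `3 + 4` becomes `7`, which in turn lets `2 * 7` fold to `14`; the addition involving `x` stays, since its value is unknown until run time.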

Back End

Target-dependent. Generates code for a specific architecture.

- Instruction selection, register allocation, instruction scheduling
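
To give a feel for register allocation, here is a minimal sketch for straight-line code only. It assumes each virtual name is defined exactly once, uses a hypothetical `(dest, op, src1, src2)` instruction tuple, and raises an error instead of spilling; production allocators (graph coloring, linear scan) are considerably more involved.

```python
def allocate_registers(instructions, registers):
    """Map virtual names to physical registers for straight-line code.

    Names never defined by an instruction are treated as inputs that live
    elsewhere (for example in memory) and get no register here.
    """
    defined = {dest for dest, _, _, _ in instructions}

    # Index of the last instruction that reads each virtual name.
    last_use = {}
    for index, (_, _, src1, src2) in enumerate(instructions):
        for src in (src1, src2):
            if src in defined:
                last_use[src] = index

    free = list(registers)
    assignment = {}
    for index, (dest, _, src1, src2) in enumerate(instructions):
        # A value read for the last time here no longer needs its register,
        # so the destination of this same instruction may reuse it.
        for src in dict.fromkeys((src1, src2)):
            if last_use.get(src) == index:
                free.append(assignment[src])
        if not free:
            raise RuntimeError("out of registers; a real allocator would spill")
        reg = min(free, key=registers.index)   # prefer the lowest-numbered register
        free.remove(reg)
        assignment[dest] = reg
    return assignment

program = [
    ("t1", "+", "a", "b"),
    ("t2", "+", "c", "d"),
    ("t3", "*", "t1", "t2"),
    ("t4", "-", "t3", "t1"),
]
assignment = allocate_registers(program, ["r0", "r1"])
```

Four virtual names fit in two registers here because `t2` dies at the third instruction and both `t3` and `t1` die at the fourth, freeing their registers for reuse.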

Compiler vs Interpreter

| Aspect | Compiler | Interpreter |
|---|---|---|
| Output | Executable binary | No output (executes directly) |
| Execution speed | Fast (native code) | Slower (interpretation overhead) |
| Startup time | Slow before the first run (compilation phase) | Fast (start immediately) |
| Error detection | Compile-time errors (syntax, types) reported before execution | Errors found during execution |
| Development cycle | Edit → compile → run | Edit → run |
| Examples | GCC, Clang, rustc | CPython, Ruby MRI |

Hybrid approaches, most notably JIT compilation, combine both.

JIT Compilation (Just-In-Time)

Compile during execution. Start interpreting, then compile hot code to native.

Source → Bytecode (AOT) → Interpreter → [Hot method detected] → JIT Compiler → Native Code

Examples: JVM (HotSpot), V8 (JavaScript), .NET CLR, PyPy, LuaJIT.

Tiered compilation (JVM): Interpreter → C1 (quick JIT, basic optimizations) → C2 (slow JIT, aggressive optimizations). Only hot methods get the expensive compilation.
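
The "interpret first, compile when hot" idea can be sketched in a few lines. This is a toy model, not how HotSpot or V8 work internally: `HOT_THRESHOLD`, the `Function` class, and the tuple expression form are assumptions for the example, and Python's built-in `compile`/`eval` stand in for native code generation.

```python
import operator

HOT_THRESHOLD = 3  # hypothetical: calls before a function counts as "hot"
OPS = {"+": operator.add, "*": operator.mul}

def interpret(node, env):
    """Slow path: walk the expression tree on every call."""
    if isinstance(node, int):
        return node
    if isinstance(node, str):
        return env[node]
    op, left, right = node
    return OPS[op](interpret(left, env), interpret(right, env))

def to_source(node):
    """Translate the tree into Python source text (stand-in for native code)."""
    if isinstance(node, int):
        return repr(node)
    if isinstance(node, str):
        return f"env[{node!r}]"
    op, left, right = node
    return f"({to_source(left)} {op} {to_source(right)})"

def jit_compile(node):
    """Fast path: compile once, then reuse the resulting function."""
    return eval(compile(f"lambda env: {to_source(node)}", "<jit>", "eval"))

class Function:
    def __init__(self, body):
        self.body = body
        self.calls = 0
        self.compiled = None

    def __call__(self, env):
        if self.compiled is not None:
            return self.compiled(env)              # already hot: run compiled code
        self.calls += 1
        if self.calls >= HOT_THRESHOLD:
            self.compiled = jit_compile(self.body)  # promote for later calls
        return interpret(self.body, env)

f = Function(("+", "x", ("*", "y", 2)))
results = [f({"x": 1, "y": y}) for y in range(5)]
```

The first three calls are interpreted; the third also triggers compilation, so the fourth and fifth run the compiled function. The results are the same either way, which is the property a real JIT must preserve.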

AOT Compilation (Ahead-Of-Time)

Compile before execution. Standard for compiled languages.

AOT for languages that usually run on a JIT: GraalVM Native Image (Java → native binary), Dart (Flutter uses AOT for mobile).

Benefits of AOT: Predictable startup time, no warm-up, typically a smaller memory footprint (no JIT compiler resident at run time), easier deployment.

Transpiler (Source-to-Source Compiler)

Translate from one high-level language to another.

Examples: TypeScript → JavaScript, Babel (modern JS → older JS), CoffeeScript → JavaScript, Haxe → multiple targets.
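
A source-to-source translation can be sketched with Python's standard `ast` module. The example below turns a small subset of Python arithmetic expressions into JavaScript expression text; the `to_js` and `transpile` names and the supported subset are assumptions for illustration, and numeric edge cases (for example very large integers) are not handled.

```python
import ast

JS_OPS = {ast.Add: "+", ast.Sub: "-", ast.Mult: "*", ast.Div: "/"}

def to_js(node):
    """Translate a parsed Python expression into JavaScript source text."""
    if isinstance(node, ast.Expression):
        return to_js(node.body)
    if (isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)):
        return repr(node.value)
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.BinOp):
        left, right = to_js(node.left), to_js(node.right)
        if isinstance(node.op, ast.Pow):
            return f"Math.pow({left}, {right})"       # Python ** has no direct match here
        if isinstance(node.op, ast.FloorDiv):
            return f"Math.floor({left} / {right})"    # Python // rounds toward -infinity
        if type(node.op) in JS_OPS:
            return f"({left} {JS_OPS[type(node.op)]} {right})"
    raise NotImplementedError(ast.dump(node))

def transpile(source):
    return to_js(ast.parse(source, mode="eval"))

js = transpile("a ** 2 + b // 3")
print(js)
```

The output stays at the same abstraction level as the input, which is what distinguishes a transpiler from a compiler that targets machine code or bytecode.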

Cross-Compiler

Compile on one platform (host) for a different platform (target).

Examples: Compile ARM code on x86 (embedded development), Rust cross-compilation (cargo build --target aarch64-unknown-linux-gnu).

Bootstrapping

The process of building a compiler that is written in the language it compiles.

Chicken-and-egg: How do you compile the first version?

- Write a minimal compiler in an existing language (C, assembly).

- Use it to compile the real compiler (written in the new language).

- Now the compiler can compile itself.

- Repeat to verify: recompile the compiler with the self-compiled binary → the two self-compiled binaries should be identical.

Examples: rustc (Rust compiler written in Rust), GCC (written in C for most of its history, now in C++), Go compiler (written in Go since 1.5).

Self-Hosting

A compiler that can compile its own source code. A milestone in language maturity.

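Verification: Build the compiler in three stages. The existing compiler builds stage 1, stage 1 builds stage 2, and stage 2 builds stage 3. Stages 2 and 3 should be bit-identical, because both were produced from the same source by compilers with the same behavior (this also requires reproducible, deterministic builds). This is called a triple test, or three-stage bootstrap.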
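
Why the later stages should match can be shown with a deliberately simplified model. In the sketch below, a "binary" is just a record whose bytes depend on the compiler that produced it and whose behavior depends on the source it was built from; the `Binary` class, `run_compiler`, and the `codegen=v2` source string are all hypothetical stand-ins, not a real build.

```python
import hashlib
from dataclasses import dataclass

@dataclass(frozen=True)
class Binary:
    code: str    # stands in for the bytes of the executable
    style: str   # how this binary generates code when it runs as a compiler

def run_compiler(compiler, source):
    """Toy model of running `compiler` on the compiler's own source text."""
    digest = hashlib.sha256(source.encode()).hexdigest()[:8]
    return Binary(
        code=f"{compiler.style}:{digest}",   # bytes depend on who compiled it
        style=source.split("=", 1)[1],       # behavior depends on the source
    )

SOURCE = "codegen=v2"                          # hypothetical compiler source
host = Binary(code="prebuilt", style="host")   # existing compiler

stage1 = run_compiler(host, SOURCE)    # new compiler, built by the host compiler
stage2 = run_compiler(stage1, SOURCE)  # new compiler, built by itself
stage3 = run_compiler(stage2, SOURCE)  # built by itself again

print(stage1.code != stage2.code, stage2 == stage3)
```

Stage 1 behaves like the new compiler but its bytes were produced by the host's code generator, so it differs from stage 2. Stages 2 and 3 were both produced by compilers with the new behavior, so they agree; a mismatch in a real build would point to nondeterminism or a miscompilation.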

Compiler Infrastructure

LLVM

LLVM (originally short for Low Level Virtual Machine; the name is no longer treated as an acronym): A modular compiler framework.

Front ends: Clang (C/C++), rustc (Rust), Swift, Flang (Fortran)

↓

LLVM IR (common IR)

↓

Back ends: x86-64, ARM/AArch64, RISC-V, WebAssembly, NVPTX (GPU)

Benefits: Write a new language front end → get all LLVM optimizations and back ends for free. Write a new back end → all LLVM front ends can target it.

LLVM IR: SSA-based typed intermediate representation. Portable across targets.
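
The defining property of SSA (static single assignment) is that every name is assigned exactly once. The sketch below shows the renaming step for straight-line code only; it uses an assumed `(dest, op, src1, src2)` tuple form and a `name.N` versioning scheme, not LLVM IR syntax, and it omits the phi nodes that real SSA construction needs at control-flow joins.

```python
def to_ssa(instructions):
    """Rename straight-line (dest, op, src1, src2) code so each name is assigned once."""
    version = {}                                   # variable -> latest version number

    def use(operand):
        if isinstance(operand, int):
            return operand                         # constants are not renamed
        return f"{operand}.{version.get(operand, 0)}"   # version 0 = incoming value

    ssa = []
    for dest, op, src1, src2 in instructions:
        src1, src2 = use(src1), use(src2)          # read operands before redefining dest
        version[dest] = version.get(dest, 0) + 1
        ssa.append((f"{dest}.{version[dest]}", op, src1, src2))
    return ssa

program = [
    ("x", "+", "a", 1),    # x = a + 1
    ("x", "*", "x", 2),    # x = x * 2
    ("y", "+", "x", "a"),  # y = x + a
]
ssa = to_ssa(program)
for dest, op, src1, src2 in ssa:
    print(f"{dest} = {src1} {op} {src2}")
```

After renaming, the two assignments to `x` become `x.1` and `x.2`, so each use refers unambiguously to one definition, which simplifies analyses such as constant propagation and dead code elimination.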

GCC

GNU Compiler Collection. Older than LLVM. Supports more targets. Uses GIMPLE and RTL as intermediate representations.

GCC vs LLVM: GCC has broader target support and a longer history. LLVM is more modular, is generally easier to extend, and has strong tooling built on it (for example, Clang's diagnostics).

Cranelift

A code generator designed for JIT compilation. Written in Rust. Used by Wasmtime (WebAssembly runtime) and experimentally by rustc.

Compilation Pipeline (Rust Example)

Source (.rs)

↓ rustc front end (parsing, macro expansion, name resolution)

HIR (High-level IR): desugared AST; type checking and trait resolution

↓

MIR (Mid-level IR): borrow checking, MIR-level optimizations

↓

LLVM IR: generated from monomorphized MIR; optimization (LLVM passes)

↓

Machine code: LLVM back end for target architecture

↓

Linking: link with libraries → executable

Key Rust-specific phases: Borrow checking happens on MIR. Monomorphization (generics → concrete types) happens before LLVM IR generation.
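
Monomorphization can be pictured as stamping out one specialized copy of a generic function per concrete type it is used with. The sketch below models that with string templates; the `monomorphize` helper and the `max_i32`-style names are hypothetical (rustc's actual symbol mangling is different), and the Rust text appears only as data inside Python strings.

```python
from string import Template

def monomorphize(name, template, instantiations):
    """Emit one specialized copy of a generic function per distinct concrete type."""
    specialized = {}
    for type_name in instantiations:
        mangled = f"{name}_{type_name}"        # hypothetical naming scheme
        if mangled not in specialized:         # repeated uses share one copy
            specialized[mangled] = template.substitute(name=mangled, T=type_name)
    return specialized

GENERIC_MAX = Template("fn $name(a: $T, b: $T) -> $T { if a > b { a } else { b } }")

# Types seen at the call sites of the generic function.
copies = monomorphize("max", GENERIC_MAX, ["i32", "f64", "i32"])
for source in copies.values():
    print(source)
```

Three call sites produce only two copies, one per distinct type. This is the trade-off behind monomorphization: each copy can be optimized for its concrete type, at the cost of more generated code and longer compile times.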

Applications in CS

- Language design: Understanding compilation helps design implementable, efficient languages.

- Performance understanding: Knowing what the compiler does helps write faster code and understand performance characteristics.

- Tooling: IDEs, linters, formatters, language servers all use compiler front-end technology.

- Domain-specific languages: Building compilers for custom languages (SQL, shader languages, configuration languages).

- Security: Compiler-based mitigations (stack canaries, CFI, sanitizers).