Consensus in Distributed Systems

The Consensus Problem

All correct processes must agree on a single value, even when some processes fail.

Properties:

- Agreement: All correct processes decide on the same value

- Validity: The decided value was proposed by some process

- Termination: All correct processes eventually decide

- Integrity: Each process decides at most once

P1 proposes: v1 ─┐

P2 proposes: v2 ─┼──> Consensus ──> All decide: v2

P3 proposes: v3 ─┤

P4 (crashed) ✗ ─┘

Paxos

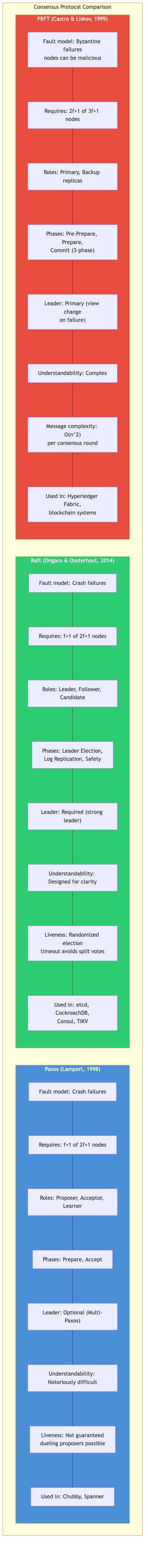

Lamport's Paxos (1998) solves consensus in a partially synchronous model with crash failures.

Basic Paxos (Single-Decree)

Three roles: Proposers, Acceptors, Learners (a node can play multiple roles).

Phase 1: PREPARE

Proposer Acceptors (majority needed)

│── Prepare(n) ───────> A1: if n > max_seen → Promise(n, prev_val)

│── Prepare(n) ───────> A2: if n > max_seen → Promise(n, prev_val)

│── Prepare(n) ───────> A3: if n > max_seen → Promise(n, prev_val)

Phase 2: ACCEPT

Proposer Acceptors

│── Accept(n, v) ─────> A1: if n ≥ max_promised → Accepted(n, v)

│── Accept(n, v) ─────> A2: if n ≥ max_promised → Accepted(n, v)

│── Accept(n, v) ─────> A3: if n ≥ max_promised → Accepted(n, v)

v = highest-numbered value from Phase 1 promises,

or proposer's own value if none

Key insight: if any acceptor already accepted a value, the proposer must use that value (preserving previous decisions).

Multi-Paxos

Optimizes repeated consensus by electing a stable leader who skips Phase 1 for subsequent slots.

Slot 1: Full Paxos (Prepare + Accept) ← establishes leader

Slot 2: Accept only (leader skip Prepare)

Slot 3: Accept only

...

Slot N: Accept only

Leader failure → new leader runs full Paxos for current slot

Paxos Challenges

- Hard to implement correctly (notoriously subtle)

- No distinguished leader in basic Paxos leads to livelock (dueling proposers)

- Multi-Paxos is not fully specified in the original paper

- Gap filling needed when slots are decided out of order

Raft

Ongaro and Ousterhout (2014) designed Raft explicitly for understandability. Equivalent power to Multi-Paxos.

Core Components

┌──────────────────────────────────────┐

│ Raft Node │

│ ┌──────────┐ ┌──────────────────┐ │

│ │ State │ │ Log │ │

│ │ Machine │ │ [1][2][3][4][5] │ │

│ └──────────┘ └──────────────────┘ │

│ Role: Leader / Follower / Candidate │

│ Term: monotonically increasing │

└──────────────────────────────────────┘

Leader Election

Follower ──(election timeout expires)──> Candidate

│ │

│ increments term

│ votes for self

│ <── RequestVote(term, log_info) ── │

│ │

│ ── VoteGranted ──> (if majority) ──> Leader

│ │

│ ── VoteRejected ──> (if lost) ──> Follower

│ │

└──(receives AppendEntries from leader)──> Follower

Election timeout: randomized (e.g., 150-300ms)

to avoid split votes

Vote granting rules:

- Candidate's term must be at least as large as voter's current term

- Candidate's log must be at least as up-to-date (last term, then length)

- Each node votes for at most one candidate per term

Log Replication

Client ──> Leader: "set x=5"

Leader log: [1:set x=1] [1:set y=2] [2:set x=5]

↑ new entry

Leader ──AppendEntries──> Follower A: appends, ACKs

Leader ──AppendEntries──> Follower B: appends, ACKs

Leader ──AppendEntries──> Follower C: (slow, no ACK yet)

Leader ──AppendEntries──> Follower D: appends, ACKs

Majority (3/5) ACKs → Leader commits entry

→ responds to client

→ next AppendEntries tells followers to commit

STRUCTURE LogEntry

term: integer

index: integer

command: bytes

STRUCTURE RaftNode

id: integer

current_term: integer

voted_for: integer or NONE

log: list of LogEntry

commit_index: integer

last_applied: integer

// Leader state

next_index: array of integer // per follower

match_index: array of integer // per follower

role: FOLLOWER | CANDIDATE | LEADER

PROCEDURE SHOULD_GRANT_VOTE(node, candidate_term,

candidate_last_log_term,

candidate_last_log_index) → boolean

IF candidate_term < node.current_term THEN

RETURN FALSE

IF node.voted_for IS NOT NONE

AND node.voted_for ≠ candidate_term THEN

RETURN FALSE // already voted this term

// Candidate's log must be at least as up-to-date

IF node.log IS NOT empty THEN

my_last_term ← LAST(node.log).term

my_last_index ← LAST(node.log).index

ELSE

my_last_term ← 0

my_last_index ← 0

RETURN (candidate_last_log_term, candidate_last_log_index)

≥ (my_last_term, my_last_index)

Membership Changes

Raft uses single-server changes (joint consensus in the original paper):

C_old C_old,new C_new

│ │ │

│── add server ──│── commit ─────│

│ │ │

Old config Transitional New config

decides both configs decides

must agree

The simpler single-server approach (add/remove one node at a time) avoids the complexity of joint consensus and is used by most implementations.

PBFT (Practical Byzantine Fault Tolerance)

Castro and Liskov (1999). Tolerates f Byzantine faults among n >= 3f + 1 nodes.

Client ──request──> Primary (leader)

│ │

│ PRE-PREPARE(v, n, m)

│ │──────────> R1, R2, R3

│ │

│ PREPARE(v, n, d, i)

│ R1 <──────────> R2 <──────────> R3

│ │

│ COMMIT(v, n, d, i)

│ R1 <──────────> R2 <──────────> R3

│ │

│ <── REPLY ────── R1, R2, R3 (client waits for f+1 matching)

Phases: PRE-PREPARE → PREPARE → COMMIT → REPLY

Communication: O(n^2) messages per consensus round

View change replaces a faulty primary, similar to leader election in Raft but more complex due to Byzantine assumptions.

Modern BFT Protocols

Tendermint

Used in Cosmos blockchain. Round-based BFT with two voting phases:

Propose → Prevote → Precommit → Commit

│ │ │

│ 2/3+ prevotes 2/3+ precommits

│ for proposal for proposal

│ │

└─── round timeout ──────┘ (move to next round)

Advantages: deterministic finality, simpler than PBFT, O(n) message complexity with threshold signatures.

HotStuff

Used in Facebook's (now Meta's) Diem/Libra. Achieves O(n) communication per round using a star topology and threshold signatures.

Basic HotStuff Pipeline:

Round k: PREPARE ──>

Round k+1: PRE-COMMIT ──> (for round k)

Round k+2: COMMIT ──> (for round k)

Round k+3: DECIDE ──> (for round k)

Chained HotStuff: pipeline all phases

Round k: GENERIC message serves as PREPARE for k,

PRE-COMMIT for k-1, COMMIT for k-2, DECIDE for k-3

Key innovation: responsive leader protocol where the leader only waits for n-f messages (not a fixed timeout).

Comparison

| Protocol | Fault Model | Nodes Needed | Msg Complexity | Latency (rounds) |

|---|---|---|---|---|

| Paxos | Crash | 2f + 1 | O(n) | 2 |

| Raft | Crash | 2f + 1 | O(n) | 2 |

| PBFT | Byzantine | 3f + 1 | O(n^2) | 3 |

| Tendermint | Byzantine | 3f + 1 | O(n) | 3 |

| HotStuff | Byzantine | 3f + 1 | O(n) | 4 (3 pipelined) |

Real-World Deployments

| System | Protocol | Use Case |

|---|---|---|

| etcd | Raft | Kubernetes cluster state |

| CockroachDB | Multi-Raft | Distributed SQL |

| Consul | Raft | Service discovery |

| Cosmos/Tendermint | Tendermint BFT | Blockchain consensus |

| Diem (discontinued) | HotStuff | Permissioned blockchain |

| ZooKeeper | Zab (Paxos variant) | Distributed coordination |

Key Takeaways

- Paxos is theoretically elegant but notoriously difficult to implement correctly. Raft provides equivalent guarantees with a clearer structure.

- Raft separates concerns (leader election, log replication, safety) making it easier to reason about and implement.

- BFT protocols handle malicious nodes but pay a cost in message complexity and latency. Modern variants (HotStuff) reduce this to O(n) with pipelining.

- All these protocols assume partial synchrony for liveness. Under full asynchrony, FLP applies and only randomized protocols can guarantee termination.