CPU Scheduling

CPU scheduling determines which process/thread runs on which CPU and for how long. Good scheduling maximizes throughput, minimizes latency, and ensures fairness.

Scheduling Criteria

| Criterion | Optimize | Definition |

|---|---|---|

| Turnaround time | Minimize | Time from submission to completion |

| Waiting time | Minimize | Time spent in the ready queue |

| Response time | Minimize | Time from submission to first response |

| Throughput | Maximize | Number of processes completed per time unit |

| CPU utilization | Maximize | Fraction of time CPU is busy |

| Fairness | Ensure | Each process gets a fair share of CPU |

These criteria often conflict: minimizing response time (favoring short jobs) may reduce throughput (context switch overhead) and starve long jobs (unfairness).

Non-Preemptive vs Preemptive

Non-preemptive (cooperative): A running process keeps the CPU until it voluntarily yields (terminates, blocks on I/O, or calls yield()). Simpler but can lead to starvation.

Preemptive: The scheduler can forcibly take the CPU from a running process (via timer interrupt). Essential for interactive systems and fairness.

Scheduling Algorithms

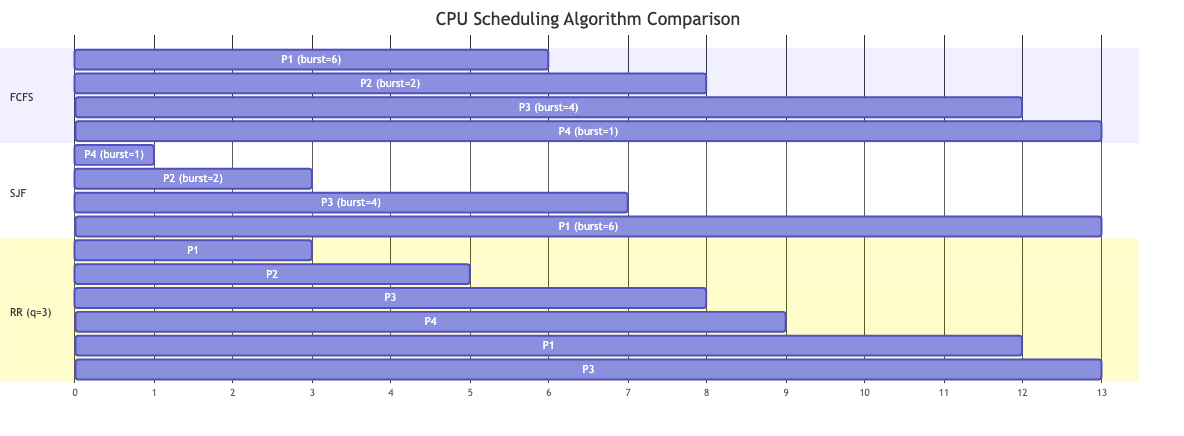

First-Come, First-Served (FCFS)

Processes run in arrival order. Non-preemptive.

Process Burst Arrival

P1 24 0

P2 3 1

P3 3 2

Gantt: |---P1(24)---|P2(3)|P3(3)|

Wait: P1=0, P2=23, P3=24

Avg wait: (0+23+24)/3 = 15.67

Problem: Convoy effect — short jobs wait behind long jobs. Poor for interactive systems.

Shortest Job First (SJF)

Run the process with the shortest burst time next. Optimal for average waiting time (provably).

Non-preemptive SJF: Once started, run to completion.

Problem: Requires knowing burst lengths in advance (impossible in general). Long jobs may starve.

Estimation: Use exponential averaging of past bursts: τₙ₊₁ = α × tₙ + (1-α) × τₙ.

Shortest Remaining Time First (SRTF)

Preemptive version of SJF. If a new job arrives with shorter remaining time than the current job, preempt.

Optimal for average waiting time among preemptive algorithms. Same starvation problem.

Priority Scheduling

Each process has a priority. Highest priority runs first.

Static priority: Set at creation. Based on user, process type, or resource needs.

Dynamic priority: Changes over time. Aging: increase priority of waiting processes to prevent starvation.

Preemptive: Higher-priority arrival preempts current process.

Problem: Starvation — low-priority processes may never run. Solution: Aging — gradually increase priority of waiting processes.

Round-Robin (RR)

Each process gets a fixed time quantum (time slice). After the quantum expires, the process is preempted and placed at the end of the ready queue.

Quantum = 4

Process Burst

P1 24

P2 3

P3 3

Gantt: |P1(4)|P2(3)|P3(3)|P1(4)|P1(4)|P1(4)|P1(4)|P1(4)|

Response time: Guaranteed ≤ (n-1) × quantum for n processes.

Quantum selection:

- Too small: Excessive context switching overhead.

- Too large: Degenerates to FCFS.

- Typical: 10-100 ms. Should be much larger than context switch time (~10 μs).

Rule of thumb: ~80% of CPU bursts should be shorter than the quantum.

Multilevel Queue

Separate ready queues for different process categories, each with its own algorithm:

┌─────────────────────┐

│ System processes │ ← Highest priority (FCFS)

├─────────────────────┤

│ Interactive │ ← Round Robin (small quantum)

├─────────────────────┤

│ Batch │ ← FCFS or SJF

├─────────────────────┤

│ Background │ ← Lowest priority (FCFS)

└─────────────────────┘

Fixed-priority between queues. Lower queues only run when higher queues are empty. Risk of starvation for lower queues.

Multilevel Feedback Queue (MLFQ)

Processes move between queues based on their behavior.

Rules:

- New processes enter the highest-priority queue.

- If a process uses its full quantum → demoted to lower queue (CPU-bound).

- If a process blocks before quantum expires → stays in current queue (I/O-bound).

- Periodically boost all processes to the top queue (prevents starvation).

Queue 0 (highest): quantum = 8ms, RR

Queue 1: quantum = 16ms, RR

Queue 2 (lowest): quantum = 32ms, FCFS

MLFQ adapts to process behavior without prior knowledge. Interactive processes stay in high-priority queues. CPU-bound processes sink to lower queues.

Used in: Most general-purpose OS schedulers are based on MLFQ ideas.

Real-Time Scheduling

Rate Monotonic (RM)

Static priority based on period: shorter period → higher priority.

Optimal among fixed-priority algorithms for independent, periodic tasks with deadlines equal to periods.

Schedulability: Guaranteed if CPU utilization ≤ n(2^(1/n) - 1). For large n: ≈ 69.3%. (This is a sufficient condition, not necessary.)

Earliest Deadline First (EDF)

Dynamic priority: The task with the closest deadline gets the highest priority.

Optimal among all algorithms for single-processor scheduling of independent tasks. Schedulable iff utilization ≤ 100%.

Disadvantage: Higher runtime overhead (priority changes dynamically). Domino effect under overload (many deadlines missed simultaneously).

Linux Schedulers

Completely Fair Scheduler (CFS)

The default Linux scheduler since 2.6.23 (2007).

Key idea: Track virtual runtime (vruntime) — CPU time weighted by priority. Always run the process with the smallest vruntime.

Implementation: Red-black tree keyed by vruntime. Leftmost node (smallest vruntime) is the next to run. O(log n) insert/remove.

Nice values (-20 to +19): Higher nice = lower priority = faster vruntime accumulation (gets less CPU).

Sleeper fairness: When a process wakes from sleep, its vruntime is set to slightly less than the minimum in the tree (gets a small boost but doesn't dominate).

BFS (Brain Fuck Scheduler)

Con Kolivas' alternative. Single global run queue (vs CFS's per-CPU queues). Simpler, designed for desktop responsiveness. Uses the Earliest Eligible Virtual Deadline First (EEVDF) approach.

EEVDF (Earliest Eligible Virtual Deadline First)

Replaced CFS as the Linux default in kernel 6.6 (2023). Each task has a virtual deadline based on its weight and accumulated CPU time. The task with the earliest eligible virtual deadline runs next. Better latency behavior than CFS.

O(1) Scheduler

Linux 2.6.0-2.6.22. Two arrays per priority level (active and expired). O(1) operations. Replaced by CFS for better fairness and interactivity.

Multiprocessor Scheduling

Load Balancing

Distribute processes evenly across CPUs.

Push migration: Periodic check — move processes from busy CPUs to idle ones. Pull migration: Idle CPU steals work from busy CPUs (work stealing).

Cache affinity: Try to keep a process on the same CPU (its data is cached there). Balance fairness with locality.

Per-CPU Run Queues

Each CPU has its own run queue. Reduces lock contention. Periodic load balancing between queues.

Work stealing: An idle CPU scans other CPUs' queues and steals a process. Used in Go's goroutine scheduler, Tokio, Rayon.

Gang Scheduling

Schedule related threads together on different CPUs simultaneously. Important for tightly coupled parallel programs.

NUMA-Aware Scheduling

Schedule processes near their memory allocation. Moving a thread to a different NUMA node increases memory latency.

Linux: The scheduler considers NUMA topology. Tasks are preferentially scheduled on CPUs in the same NUMA node as their memory.

Scheduling Metrics Comparison

| Algorithm | Response Time | Throughput | Starvation | Preemptive |

|---|---|---|---|---|

| FCFS | Poor (convoy) | Moderate | No | No |

| SJF | Optimal (avg wait) | Good | Yes (long jobs) | No |

| SRTF | Optimal (preemptive) | Good | Yes | Yes |

| RR | Good (bounded) | Moderate (switch overhead) | No | Yes |

| MLFQ | Good (adaptive) | Good | Possible (needs boost) | Yes |

| Priority | Depends | Depends | Yes (without aging) | Optional |

| EDF | Good | Optimal (up to 100%) | Under overload | Yes |

| CFS/EEVDF | Fair | Good | No | Yes |

Applications in CS

- Interactive systems: Low response time is critical. MLFQ/CFS prioritize interactive processes.

- Servers: High throughput. Thread pools with work stealing. NUMA-aware scheduling.

- Real-time systems: EDF or RM for guaranteed deadlines. Used in automotive, avionics, robotics.

- Cloud computing: Fair sharing between tenants. CPU limits via cgroups. Overcommitment (more VMs than CPUs).

- Databases: Query scheduling. Workload management (OLTP vs OLAP priority).

- Embedded: Simple schedulers (round-robin, priority-based). FreeRTOS uses configurable priority scheduling.